Robohub.org

Analysis: Robot learning in the cloud

Cloud robotics, a shorthand for the idea of leveraging the Internet for robots, offers unprecedented opportunities for robot learning. Apart from using the World Wide Web for faster communication or faster computation, a key opportunity is to allow robots to create and collaboratively update shared knowledge repositories. Hosted in a shared cloud storage infrastructure, such knowledge bases could enable robots to cope with the complexities of human environments and offer a simple, powerful route to lifelong robot learning.

As part of RoboEarth my colleagues and I have been working towards this goal by creating something like a “Wikipedia for robots”. By sharing knowledge about objects, maps, and actions in a common framework, RoboEarth allows robots with different hardware and software to exchange – and collectively improve – their knowledge.

The goal of the European-Commission-funded initiative is to develop proof-of-concept demonstrations that show how cloud repositories like RoboEarth’s databases can greatly speed up robot learning and how they may ultimately allow robots to perform well beyond their preprogrammed behaviors.

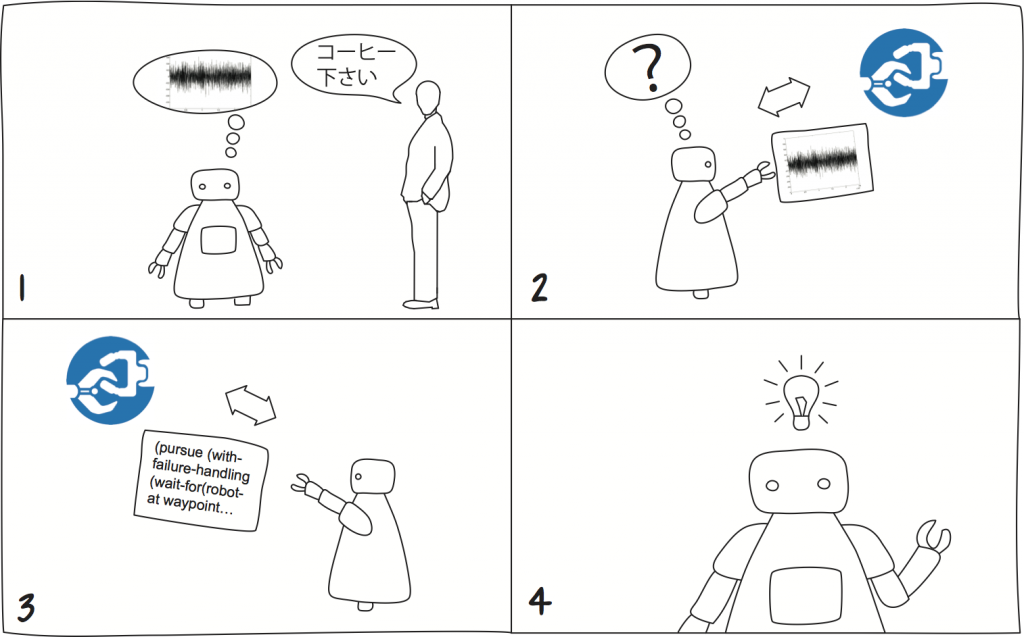

As one of its first demonstrators, the team has shown that robots with different hardware can use RoboEarth to share maps. Another demonstrator allowed a complex service robot to download hardware-independent object models from the RoboEarth database and use them for perception and manipulation. And a third demonstrator has shown how a service robot can identify, download, and use high-level task descriptions to serve a drink.

Since the start of RoboEarth in late 2009, a number of other groups have begun work on allowing robots to use large, online knowledge repositories. Google announced the formation of a Cloud Robotics team at its Google I/O conference last year. Its main thrust is leveraging existing Google Web services. In a joint talk with Willow Garage Inc., the new Google team also introduced the first pure Java implementation of Willow Garage’s Robot Operating System (ROS). The software, currently in alpha testing, is intended to allow integration of Google’s Android operating system for mobile phones with ROS. Finally, Motoman launched its Web-based remote monitoring service, MyMotoman, in August 2011. It not only enables customers to monitor and update their robots’ status via mobile devices, but also uses historical data to enhance its service offering with predictive maintenance trend data on critical situations. Further initiatives are in the works but, unfortunately, few of them are publicly available (the core RoboEarth software packages are available online).

The above examples mark a wider trend toward harnessing Internet technology for robotics. And this is not surprising: Both the personal computing and mobile phone industries are intimately connected to robotics. Personal computers continue to underpin robot software and act as an important enabler by providing ever-increasing computing power. Mobile phone technology, on the other hand, continues to drive down prices for sensors, including cameras, accelerometers, gyroscopes, and GPS sensors, as well as for enabling technologies like wireless networks. In addition, personal computers, mobile phones, and tablets are natural entry points for human-robot interaction for developers as well as B2B clients (think of telepresence robots) and, increasingly, B2C customers (think of toys like Romotive’s Romo). Both fields have benefitted immensely from Internet connectivity, raising the question of whether the Internet may, in time, have a similar effect on robotics.

I think there is a likely starting point for such a transformation: The performance of solutions to robot vision, the main challenge in robotics, is tightly coupled with the resources available for computation and data storage. Web services that allow outsourcing computation and data storage to the cloud can offer clear cost benefits for connected robots by replacing expensive local processing capacity and battery power with more powerful cloud services, resulting in cheaper, lighter robots.

With its Goggles mobile phone app, Google has an interesting first version of such an image cloud service. Snap a picture with your smartphone, and you will receive related information from Google product search for objects, translation services for text, or geographic information for landmarks within seconds. Although the current system is aimed at humans and has severe limitations for robotics (e.g., it cannot parse scenes; the returned information is not grounded), it points the way to similarly powerful services for robots.

Vision is not the only robot task that could greatly benefit from web services. Speech-to-text-to-speech services could vastly improve human-robot interaction. Optical character recognition could help robots understand text in all its forms. And any roboticist who has used the turn-by-turn directions offered by a navigation system can appreciate how useful a similar service could be for outdoor or indoor robot navigation.

A transformation like the one the Internet heralded in the personal computing and mobile phone industries, however, is unlikely to result from mere adoption and adaptation of old technology. Just as the killer application for mobile phones was not email, it seems unlikely to me that cloud robotics will be driven by an app store. The app store’s business model, with app sales feeding product sales feeding app sales, requires a significant number of users and developers on a common platform for a successful kick-start. This seems difficult to achieve for robotics. However, people are trying and have had some early success.

Another candidate for such a transformation is the use of pooled knowledge to improve robot learning. Kiva Systems, in North Reading, Mass., has pioneered this approach for a homogeneous multirobot system (it is no coincidence that one of Kiva Systems’ co-founders is one of my RoboEarth colleagues) and, as mentioned above, RoboEarth has already made first demonstrations for heterogeneous systems.

As you’d expect, not all knowledge robots can learn is easily exchangeable in a joint knowledge repository. Raw trajectory data or sensor and actuator parameters are often too hardware specific to be exchanged successfully. However, a fair amount of the knowledge robots learn can be exchanged: For example, maps, CAD models of objects, and articulation models of doors and drawers have been successfully learnt and shared between different robots. A particularly interesting area for learning is the links between pieces of shared information, such as where the fridge is (map coordinates), what it looks like (object recognition model), and how to open it (object articulation model). Another is probabilities: given that I see a table, bed, and chair, where would I most likely find a pillow? Robots are well-suited for this type of learning not least because, unlike humans, they are capable of rapid, systematic, and accurate data collection. This capability provides unprecedented opportunities for obtaining consistent and comparable data sets as well as for performing large-scale systematic analysis and data mining.
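To make these two kinds of shareable knowledge concrete, here is a minimal Python sketch. It is purely illustrative and does not reflect RoboEarth's actual data formats or software: the names `ObjectEntry` and `LocationPrior`, the field names, and the model identifiers are all hypothetical. The first class links the three pieces of information about one object; the second pools observations from many robots to estimate, from simple counts, where a target object is most likely to be found among the things a robot currently sees.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectEntry:
    """Hypothetical shared record linking three kinds of knowledge about one object."""
    name: str
    map_coordinates: tuple      # where it is, e.g. (x, y) in a shared map frame
    recognition_model: str      # what it looks like (identifier of a stored model)
    articulation_model: str     # how to open it (identifier of a stored model)


class LocationPrior:
    """Pooled counts of where robots have found objects (illustrative only)."""

    def __init__(self):
        self._counts = {}  # target object -> Counter of locations it was found at

    def record(self, target, location):
        """Add one observation: `target` was found at or on `location`."""
        self._counts.setdefault(target, Counter())[location] += 1

    def rank(self, target, visible):
        """Rank the visible candidate locations by relative frequency for `target`."""
        counts = self._counts.get(target, Counter())
        total = sum(counts[loc] for loc in visible)
        if total == 0:
            # No pooled evidence yet: fall back to a uniform guess.
            return [(loc, 1 / len(visible)) for loc in sorted(visible)]
        ranked = [(loc, counts[loc] / total) for loc in visible]
        return sorted(ranked, key=lambda pair: (-pair[1], pair[0]))


if __name__ == "__main__":
    fridge = ObjectEntry("fridge", (2.5, 0.8), "fridge_recognition_v1", "hinged_door_v1")
    print(fridge)

    prior = LocationPrior()
    for _ in range(8):
        prior.record("pillow", "bed")    # made-up observations for the example
    for _ in range(2):
        prior.record("pillow", "chair")
    print(prior.rank("pillow", ["table", "bed", "chair"]))
```

The point of the sketch is that neither structure contains anything hardware specific, which is what makes this kind of knowledge easy to exchange. A real system would need far richer models and a way to weigh observations from different robots and environments.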

While the future of cloud robotics remains uncertain, my RoboEarth colleagues and I agree that human environments are too nuanced and complicated to be summarized within a limited set of specifications. Any solution to program robots to cope with our daily environments is therefore likely to involve some sort of collective robot learning. Sharing knowledge in a cloud infrastructure like RoboEarth allows robots to perform such learning much faster.

Connecting robots worldwide is a challenging task beyond the scope of any individual initiative. RoboEarth is currently collaborating with related research initiatives in Europe and the United States, as well as with a number of companies through its Industrial Advisory Committee. To join the discussion, feel free to have a look at the RoboEarth website and to get in touch via info@roboearth.org.

Note: A previous version of this article appeared in Robotics Trends.

Credits: Images by Carolina Flores // ETH Zurich // RoboEarth.

tags: cloud robotics, ETH Zurich