Robohub.org

CoCoRo: Tracking the development of the world’s largest autonomous underwater swarm

The EU-funded Collective Cognitive Robotics (CoCoRo) project has built the world’s largest swarm of autonomous underwater vehicles (AUVs): 41 robots that show collective cognition. Throughout the first half of 2015 – The Year of CoCoRo – a new video on the latest stage in its development has been uploaded every week, with dozens online to date and more to come! This article provides some insights into, and background on, the videos already online.

Carried out by an interdisciplinary and international team of scientists from Austria, Italy, the UK, Belgium and Germany, the CoCoRo project ran from 2011 to 2014 and has its own YouTube playlist. The playlist offers a novel information platform for the scientific community and anyone interested in how new high-end technologies are developed and what impact they could have on people’s lives.

The footage tracks the development of this unique, autonomous swarm and also demonstrates how it gained the necessary self-awareness to solve tasks such as search missions on the seabed.

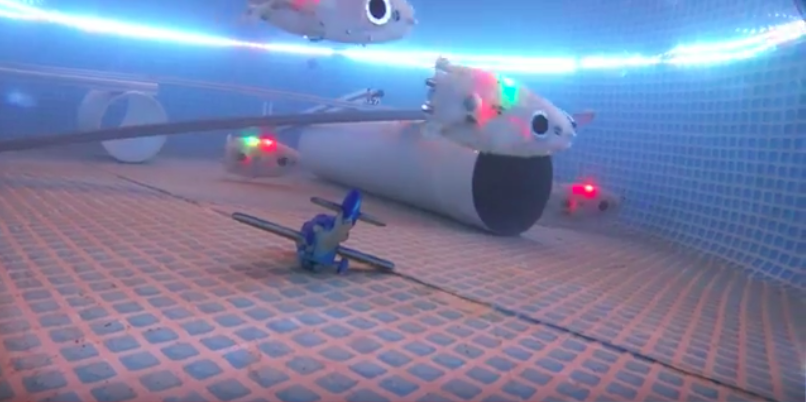

In the videos already online, three different autonomous underwater robots, designed and produced for the project, are introduced: very agile and fast Jeff robots, which search the waterbed, and smaller Lily robots, functioning as communicators to relay the information found by Jeffs to the third robot type: a base-station which floats on the water surface and is able to pass information to scientists on the ground.

The videos also show many different experiments, showcasing the broad functionality of the autonomous swarm. They demonstrate how novel, bio-inspired algorithms, developed by the team, give the swarm a form of self-awareness that provides a much broader knowledge base than any single member possesses. This enables the swarm to make collective decisions.

Thus, the videos demonstrate how the swarm functions in collective search missions, as well as the particular abilities and strengths of the different robot types. Below, we look at some of the highlights of this research.

Autonomously driven camera agents, exploring seas, rivers and ponds

The Jeff and Lily robots were tested as autonomous diving robots with cameras mounted to collect material on the underwater ecosystem. Their recorded diving trips provide lively insights into the underwater world: the sea, mountain lakes, ponds and rivers. Although not the main focus of the project, this work developed into a set of interesting spin-off experiments. It is always nice to discover that a tool can also be used for something other than what it was originally designed for. In the first set of experiments, an underwater camera was mounted on a Lily robot. The setup was initially tested in a small fishpond, then in a larger Austrian lake and, finally, some mad scientists even threw the poor robot into a whitewater river!

Once it was discovered that the robots could be used as autonomous camera agents, cameras were also mounted on the Jeffs and the research team moved on to larger lakes and more complex habitats to get an inside view.

Blue light and electric sensing for novel AUV underwater communication

The CoCoRo team established a new approach to underwater communication that is showcased in the videos.

In contrast to the majority of underwater swarms, these robots don’t communicate using sonic or echo technology but instead make use of blue-light LEDs, WLAN and potential electric fields. Blue-light blinks are used to keep the swarm together and in the vicinity of the moving base station. Because of this “confinement,” the radio-controlled base-station can pull a whole swarm of Lily robots behind it, much like a tail. In larger water bodies, it is important to confine the robots into specific areas because, if agents are to work efficiently, the swarm normally requires a minimum of connectivity that is achieved only with a critical density; if they’re not confined to a controlled area, robots could get lost and the density could fall below the critical level. Thus, confinement was identified as a key function.

“Electric sense” (coupled potential electric fields) is another important CoCoRo feature. An electrode was placed underwater, beneath the CoCoRo surface station, to generate a pulsing electric field. Jeff robots, with electrodes attached to their outer hulls, are able to sense such fields. In this way, it’s possible to confine the robots to a specific area, around the base station, so that they don’t go astray. Electric-field confinement was tested in a pool, under laboratory conditions, and then in the Italian harbor of Livorno.

Self-charging mechanisms guarantee long-term autonomy in energy

An important goal of the CoCoRo project was to develop an underwater swarm able to achieve long-term energy autonomy. To this end, a special docking/undocking mechanism for Jeff robots was constructed on the base station. Later versions of these robots that run out of power will not only be able to dock or undock autonomously there, but also recharge. Several videos demonstrate this docking mechanism in action, both in a pool and in the Mediterranean.

Collective decision making on underwater search missions in highly fragmented areas

Another important aspect of the CoCoRo swarm is that it is able to make collective decisions, the result of a set of bio-inspired algorithms developed for it. To test this ability, a set of collective choice experiments was performed.

The Lily swarm, as a whole, was tasked with choosing a global magnetic optimum over several local optima. To make the experiment even trickier, and the results more clearly interpretable, the scientists constructed a T-Maze offering only a binary choice. In another experiment a similar task was performed in an open field. In each of these settings, the swarm reached a clear-cut decision in favor of the global optimum. In CoCoRo, the ability to make a decision is a property of the collective, not of the individual.

However, habitats are often more structured than a T-maze. To test the capabilities of Jeff robots in highly structured environments, area coverage was achieved through autonomous motion behavior: a specific random walk, with the robots browsing through the habitat, performing a simple program named “correlated random walk with obstacle avoidance.” This consists of straight-line forward motion alternating with infrequent, randomly triggered turns, plus collision avoidance based on blue-light emission and the robot’s sensing of its reflection. As a result, the first robot is able to traverse the habitat relatively quickly, and subsequent robots even faster. The experiments demonstrate that, here, scalability is key in generating a viable robot swarm. To gain further insight into how Jeff robots navigate through highly fragmented areas, the researchers equipped them with cameras to film their behavior.
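To make the idea concrete, here is a minimal Python sketch of a correlated random walk with obstacle avoidance in a rectangular tank. It is an illustration only, not the CoCoRo team's code: the function name, the tank size, the turn probability and the sensing range are all assumed values, and the blue-light reflection sensing is replaced by a simple check of a probe point just ahead of the robot.

```python
import math
import random


def correlated_random_walk(steps, width=10.0, height=10.0, speed=0.1,
                           turn_prob=0.05, sense_range=0.3, seed=0):
    """Simulate one robot in a rectangular tank; return its visited (x, y) points.

    All parameters are hypothetical. speed should not exceed sense_range,
    otherwise the robot could overshoot the wall it failed to sense.
    """
    rng = random.Random(seed)
    x, y = width / 2, height / 2
    heading = rng.uniform(0, 2 * math.pi)
    path = [(x, y)]
    for _ in range(steps):
        # Infrequent, randomly triggered turn; otherwise the heading is kept,
        # which is what makes successive steps correlated.
        if rng.random() < turn_prob:
            heading += rng.uniform(-math.pi / 2, math.pi / 2)
        # Obstacle avoidance: stand-in for sensing a blue-light reflection.
        # Probe a point sense_range ahead; if it lies outside the tank, turn away.
        probe_x = x + sense_range * math.cos(heading)
        probe_y = y + sense_range * math.sin(heading)
        if not (0 <= probe_x <= width and 0 <= probe_y <= height):
            heading += math.pi + rng.uniform(-math.pi / 4, math.pi / 4)
            continue  # this step is spent turning, not moving
        x += speed * math.cos(heading)
        y += speed * math.sin(heading)
        path.append((x, y))
    return path
```

Calling `correlated_random_walk(2000)` returns the list of positions visited, which could be plotted to see how the straight runs and occasional turns spread the robot across the tank.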

In order to further complicate the Jeff robots’ task of searching and navigating, the team constructed a fragmented habitat in a pool, with four compartments separated by medium-height walls. Only one of these contained a magnetic/metallic object, acting as a search target. To complete the experiment, the random-walk based motion program was occasionally interrupted by “jump-over-the-wall” behavior. This allowed the test robot to switch between compartments when it detected a near-collision event via its blue-light LEDs.

The autonomous robot observes its internal magnetic sensor in order to identify whether it is close to the magnetic/metallic target. When it is, it stops, descends and switches on all of its LEDs to mark the spot.
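The decision logic described in the last two paragraphs can be sketched as a single control step in Python. This is a simplified illustration under stated assumptions, not the robots' firmware: the `Action` type, the `search_step` function, the magnetic threshold and the jump probability are all hypothetical, and sensor readings are passed in as plain numbers.

```python
from dataclasses import dataclass


@dataclass
class Action:
    move: str          # "walk", "jump_wall" or "stop_and_descend"
    all_leds_on: bool  # True only when the robot marks the target spot


def search_step(magnetic_reading, near_collision, jump_roll,
                magnetic_threshold=0.8, jump_prob=0.2):
    """Choose the next action for one searching robot.

    magnetic_reading: normalised magnetic sensor value (assumed scale 0..1).
    near_collision:   True if the blue-light sensing reports an obstacle ahead.
    jump_roll:        random number in [0, 1) drawn by the caller.
    The threshold and probability are illustrative assumptions.
    """
    # Target found: stop, descend and switch on all LEDs to mark the spot.
    if magnetic_reading >= magnetic_threshold:
        return Action("stop_and_descend", True)
    # Near a wall: occasionally jump over it to reach another compartment.
    if near_collision and jump_roll < jump_prob:
        return Action("jump_wall", False)
    # Otherwise keep going with the random-walk program.
    return Action("walk", False)
```

In a simulation loop, `jump_roll` would typically come from `random.random()`; passing it in as an argument keeps the decision itself deterministic and easy to check.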

In another experimental video, the scientists demonstrate the use of the search algorithm in pools with increasing volumes of water and with a number of Jeff robots. Building on the previous experiment, social swarm behavior is exhibited as the robots attract each other to the target after they have found it. In a preliminary experiment, for the first time the robots went deeper than two meters, although software bugs were an issue. However, these late-night experiments brought to light crucial insights and allowed the team to develop the more complex search scenarios that will feature in the second half of the Year of CoCoRo.

In another extension of the scenario, a swarm of Lily robots was introduced, with one Lily emulating the behavior of the base station on the water’s surface. A searching Jeff robot on the ground first autonomously looks for a magnetic target. When it finds this, it stops and switches on its vertical blue-light and red LEDs. This attracts some Lily robots browsing near the surface. They pick up the signal from the ground and recruit other Lily robots with their horizontal blue-light LEDs. This attracts even more Lily robots, forming a positive feedback loop through the growing aggregation of robots. Ultimately, all the Lily robots, including the one emulating the surface station, meet at the target spot.
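The positive feedback loop in this recruitment scheme can be illustrated with a small Python simulation. It is a toy model built on assumptions, not a reconstruction of the experiment: the arena size, signal range, speed and function name are invented for illustration, and recruited Lily robots are assumed to steer straight towards the target, standing in for following the light signals.

```python
import math
import random


def simulate_recruitment(n_lilies=20, arena=10.0, signal_range=2.0,
                         speed=0.3, steps=300, seed=0):
    """Return the number of recruited Lily robots after each time step.

    All parameter values are hypothetical.
    """
    rng = random.Random(seed)
    target = (arena / 2, arena / 2)  # spot above the signalling Jeff robot
    positions = [(rng.uniform(0, arena), rng.uniform(0, arena))
                 for _ in range(n_lilies)]
    recruited = [False] * n_lilies
    history = []
    for _ in range(steps):
        # Signal sources: the Jeff at the target plus every recruited Lily.
        # More recruits mean more sources, which is the feedback loop.
        sources = [target] + [p for p, r in zip(positions, recruited) if r]
        for i, p in enumerate(positions):
            if not recruited[i] and any(
                    math.dist(p, s) <= signal_range for s in sources):
                recruited[i] = True
        moved = []
        for (x, y), is_recruited in zip(positions, recruited):
            if is_recruited:
                # Assumption: recruited robots head straight for the target.
                dx, dy = target[0] - x, target[1] - y
                dist = math.hypot(dx, dy)
                if dist <= speed:
                    x, y = target
                else:
                    x, y = x + speed * dx / dist, y + speed * dy / dist
            else:
                # Unrecruited robots browse randomly, clamped to the arena.
                angle = rng.uniform(0, 2 * math.pi)
                x = min(max(x + speed * math.cos(angle), 0.0), arena)
                y = min(max(y + speed * math.sin(angle), 0.0), arena)
            moved.append((x, y))
        positions = moved
        history.append(sum(recruited))
    return history
```

The returned list never decreases, because a recruited robot stays recruited; plotting it against time shows how quickly the aggregation grows for a given signal range.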

What’s next in The Year of CoCoRo?

In the second half of The Year of CoCoRo, the videos will emphasize more complex waterbed search tasks, showing several different approaches to this. In addition, they will focus on swarm-level self-awareness, internal homeostatic self-regulation and self-repair. The CoCoRo team will also demonstrate shoaling behavior, how the swarm can communicate, even in murky waters, and that it’s not tricked easily, not even by its own mirror image; it knows whether what it “sees” is a reflection of itself or another robot swarm. Aside from scientific experiments, further videos will cover special CoCoRo events, providing some humorous insights into EU-project driven research. These will include an exhibition at CEBIT (a consumer electronics fair), overnight project workshops and overview videos to round off the big picture: The Year of CoCoRo.

CoCoRo evolves into subCULTron

The experiments and developments shown in The Year of CoCoRo are being fed into subCULTron, a follow-up project that has already started. Here, an even larger swarm of robots – novel types with more bio-inspired algorithms – is being developed. Leading scientists from other projects, including CADDY and ANGELS, have joined the consortium. This CoCoRo offspring is planning missions in the Venice lagoon, as well as in a complex, structured, aquatic mussel farm. In these environments, the water is deeper and murky, and the habitat even more fragmented, posing ever-greater challenges for this novel collective system.
