Robohub.org

Making sense of vision and touch: #ICRA2019 best paper award video and interview

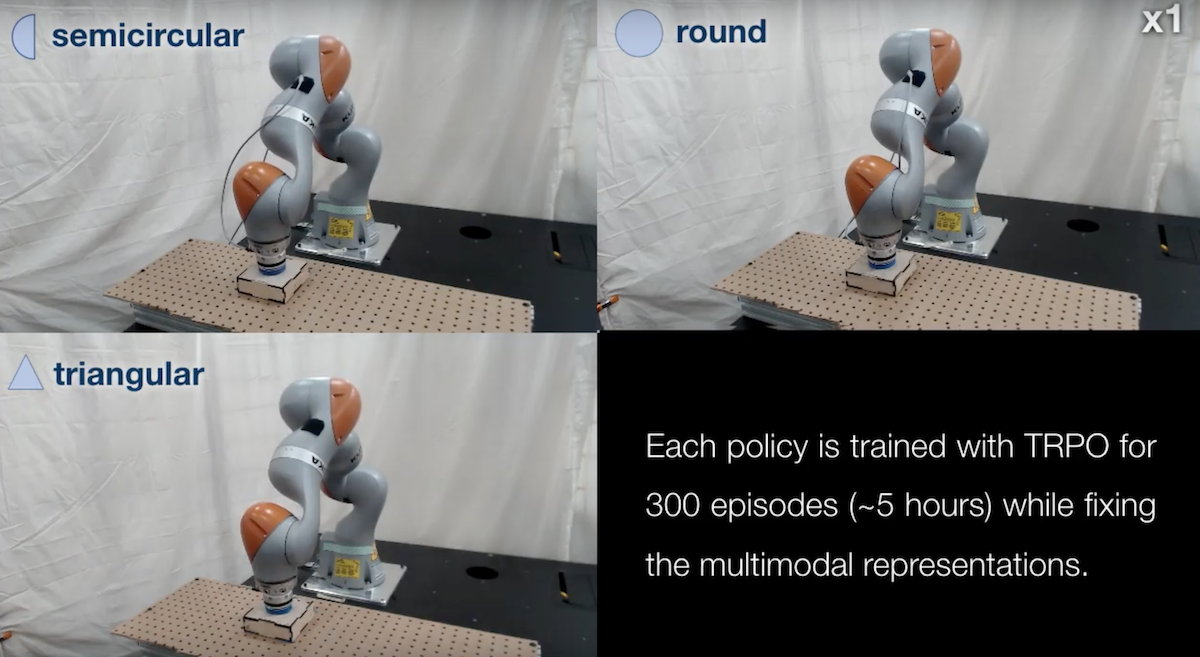

PhD candidate Michelle A. Lee from the Stanford AI Lab won the best paper award at ICRA 2019 for her work “Making Sense of Vision and Touch: Self-Supervised Learning of Multimodal Representations for Contact-Rich Tasks”. You can read the paper on arXiv (arXiv:1810.10191).

Audrow Nash was there to capture her pitch.

And here’s the official video about the work.

Full reference

Lee, Michelle A., Yuke Zhu, Krishnan Srinivasan, Parth Shah, Silvio Savarese, Li Fei-Fei, Animesh Garg, and Jeannette Bohg. “Making sense of vision and touch: Self-supervised learning of multimodal representations for contact-rich tasks.” arXiv preprint arXiv:1810.10191 (2018).

Robohub Editors
