Robohub.org

Mayfield Robotics to introduce enhanced interactions with Kuri

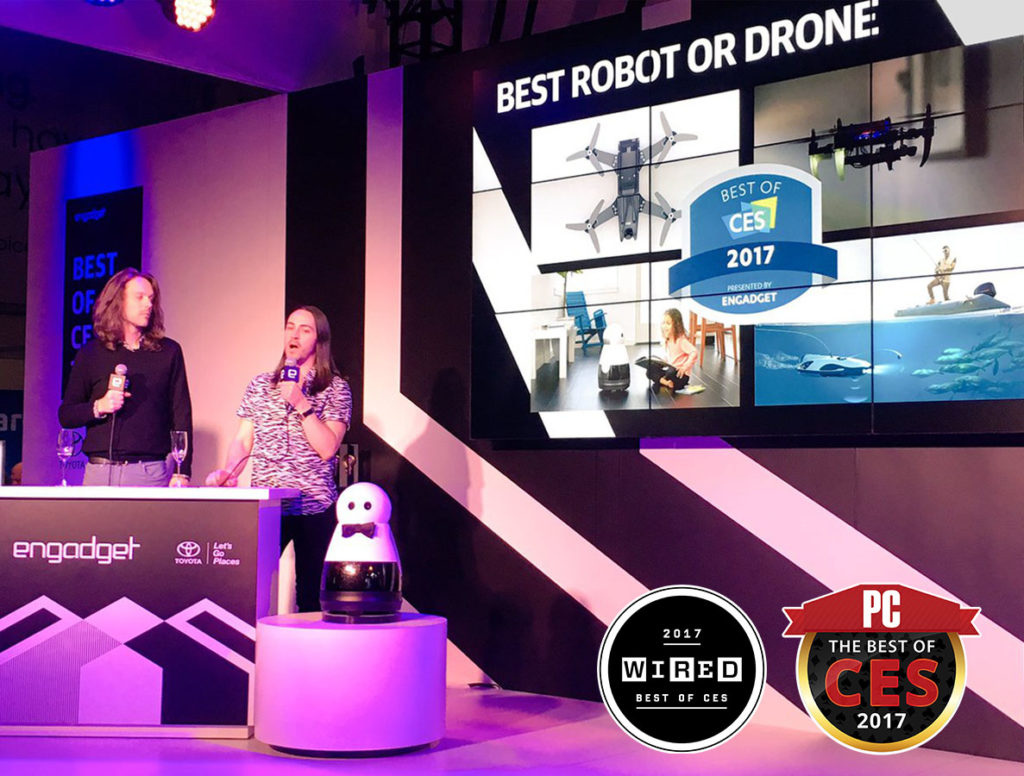

It’s been about 8 weeks since we first introduced Kuri to the world at the Consumer Electronics Show in Las Vegas and we’re incredibly, amazingly, stupendously happy to keep hearing from people about how excited they are about Kuri. We won “Best of CES” nominations and awards from our friends at Engadget, Wired, PC Magazine, and others.

We also heard accolades from people around the world on social media, and even many of our own mothers called to say they heard about our robot on TV.

Since then, we’ve been hard at work testing Kuri in lots of different homes, fine-tuning hardware details, and expanding our software capabilities. We’ll be sharing updates like this about once a month, letting you know how we’re progressing toward shipping our first robots.

First up, our voice command capabilities are growing. Today, if you say “hey Kuri, I love you”, Kuri responds with an adorable little “I love you too” dance and light show. Or, if it’s time to call it a night, we can now say “hey Kuri, go to sleep” and he’ll close his eyes and doze off to dream about whatever robots dream about*.

We’ve also made some big advances in Kuri’s sound and face detection capabilities: he’ll now look toward sounds, and when he sees a face he’ll be able to react with a smile (like we do when we see someone we like), and his eyes will follow you, waiting for your next instruction. This is a big step forward in making Kuri act alive in ways that respond to you, and it feels warm and friendly when you see it in person.

Our hardware team has also been covering new territory, redesigning the speaker enclosures that help to project Kuri’s remarkable DJ capabilities and, in a small-but-important detail, updating Kuri’s eyes to a convex lens shape that reflects light more naturally, making him even more lifelike. The new eyes are more difficult to make, but it’s a detail that matters.

Lastly, we’re excited to also announce that we’ve formalized our partnership with IFTTT, an online service that will make it easy for Kuri to interact with other smart home appliances and devices. We’ll have more on this in future updates, and we can’t wait to show you how smart home connections will make Kuri even more helpful around your home.

Thanks for joining us. We’ll continue to keep you posted on our progress.

* Pretty soon, when his offline mapping and facial recognition training are incorporated, we’re fairly sure Kuri will dream about wandering around his home and seeing his family members as he consolidates his memories of the day, just like humans do. Right now he’s actually just patiently waiting for our engineers to wake him up again.
