Robohub.org

UBR-1 on ROS2 Humble

It has been a while since I’ve posted to the blog, but lately I’ve actually been working on the UBR-1 again after a somewhat long hiatus. This post continues my earlier series on the robot.

ROS2 Humble

The latest ROS2 release came out just a few weeks ago. ROS2 Humble targets Ubuntu 22.04 and is also a long-term support (LTS) release, meaning that both the underlying Ubuntu operating system and the ROS2 release get a full 5 years of support.

Since installing operating systems on robots is often a pain, I only use the LTS releases, so I had to migrate from the previous LTS, ROS2 Foxy (on Ubuntu 20.04). Overall, there aren’t many changes to the low-level ROS2 APIs, as things are getting more stable and mature. For some higher-level packages, such as MoveIt2 and Navigation2, the story is a bit different.

Visualization

One of the nice things about the ROS2 Foxy release was that it targeted the same operating system as the final ROS1 release, Noetic. This allowed users to have both ROS1 and ROS2 installed side-by-side. If you’re still developing in ROS1, that means you probably don’t want to upgrade all your computers quite yet. While my robot now runs Ubuntu 22.04, my desktop is still running 18.04.

Therefore, I had to find a way to visualize ROS2 data on a computer that did not have the latest ROS2 installed. Initially I tried Foxglove Studio, but didn’t have any luck getting it to connect using the native ROS2 interface (the rosbridge-based interface did work). Foxglove is certainly interesting, but so far it’s not really an RVIZ replacement – they appear to be more focused on offline data visualization.

I then moved on to running rviz2 inside a docker environment – which works well when using the rocker tool:

    sudo apt-get install python3-rocker
    sudo rocker --net=host --x11 osrf/ros:humble-desktop rviz2

If you are using an NVIDIA card, you’ll need to add --nvidia along with --x11.
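As a rough sketch of the flag selection described above, the rocker arguments could be assembled by a small helper. The function name `rviz_rocker_args` is hypothetical, and using the presence of `nvidia-smi` to detect an NVIDIA card is an assumption, not something rocker itself requires:

```shell
#!/bin/bash
# Hypothetical helper: build the rocker argument list for running rviz2.
# Assumption: an NVIDIA driver is considered present when nvidia-smi is on PATH.
rviz_rocker_args() {
  local image="${1:-osrf/ros:humble-desktop}"
  local args="--net=host --x11"
  if command -v nvidia-smi >/dev/null 2>&1; then
    # Add --nvidia alongside --x11, as needed for NVIDIA cards
    args="$args --nvidia"
  fi
  echo "$args $image rviz2"
}

# Print the command rather than running it, so it can be inspected first.
echo "sudo rocker $(rviz_rocker_args)"
```

Passing an image name as the first argument (for example `rviz_rocker_args ubr:main`) swaps out the default image.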

To properly visualize and interact with my UBR-1 robot, I needed the ubr1_description package in my workspace, which provides the meshes and my rviz configurations. To accomplish this, I needed to create my own docker image. I largely based it off the underlying ROS docker images:

    ARG WORKSPACE=/opt/workspace

    FROM osrf/ros:humble-desktop

    # install build tools
    RUN apt-get update && apt-get install -q -y --no-install-recommends \
        python3-colcon-common-extensions \
        git-core \
        && rm -rf /var/lib/apt/lists/*

    # get ubr code
    ARG WORKSPACE
    WORKDIR $WORKSPACE/src
    RUN git clone https://github.com/mikeferguson/ubr_reloaded.git \
        && touch ubr_reloaded/ubr1_bringup/COLCON_IGNORE \
        && touch ubr_reloaded/ubr1_calibration/COLCON_IGNORE \
        && touch ubr_reloaded/ubr1_gazebo/COLCON_IGNORE \
        && touch ubr_reloaded/ubr1_moveit/COLCON_IGNORE \
        && touch ubr_reloaded/ubr1_navigation/COLCON_IGNORE \
        && touch ubr_reloaded/ubr_msgs/COLCON_IGNORE \
        && touch ubr_reloaded/ubr_teleop/COLCON_IGNORE

    # install dependencies
    ARG WORKSPACE
    WORKDIR $WORKSPACE
    RUN . /opt/ros/$ROS_DISTRO/setup.sh \
        && apt-get update && rosdep install -q -y \
        --from-paths src \
        --ignore-src \
        && rm -rf /var/lib/apt/lists/*

    # build ubr code
    ARG WORKSPACE
    WORKDIR $WORKSPACE
    RUN . /opt/ros/$ROS_DISTRO/setup.sh \
        && colcon build

    # setup entrypoint
    COPY ./ros_entrypoint.sh /
    ENTRYPOINT ["/ros_entrypoint.sh"]
    CMD ["bash"]

The image derives from humble-desktop and then adds the build tools and clones my repository. I then ignore the majority of packages (by touching a COLCON_IGNORE file in each), install dependencies and then build the workspace. The ros_entrypoint.sh script handles sourcing the workspace configuration.

    #!/bin/bash
    set -e

    # setup ros2 environment
    source "/opt/workspace/install/setup.bash"
    exec "$@"
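The chain of `touch .../COLCON_IGNORE` commands in the Dockerfile could also be written as a loop. The following is only a sketch: the function name `ignore_all_but` is hypothetical, and it assumes each package is a top-level directory of the repository containing a `package.xml`:

```shell
#!/bin/bash
# Hypothetical helper: drop a COLCON_IGNORE marker into every top-level
# package directory of a repository, except the packages listed to keep.
# Usage: ignore_all_but <repo_dir> <keep_pkg>...
ignore_all_but() {
  local repo="$1"
  shift
  local dir name keep skip
  for dir in "$repo"/*/; do
    # Assumption: a directory is a ROS package only if it has a package.xml
    if [ ! -f "${dir}package.xml" ]; then
      continue
    fi
    name="$(basename "$dir")"
    skip=0
    for keep in "$@"; do
      if [ "$name" = "$keep" ]; then
        skip=1
      fi
    done
    if [ "$skip" -eq 0 ]; then
      touch "${dir}COLCON_IGNORE"
    fi
  done
}
```

Calling `ignore_all_but ubr_reloaded ubr1_description` would leave only ubr1_description buildable. That is not guaranteed to match the explicit list in the Dockerfile, since the repository may contain other packages that the original leaves enabled.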

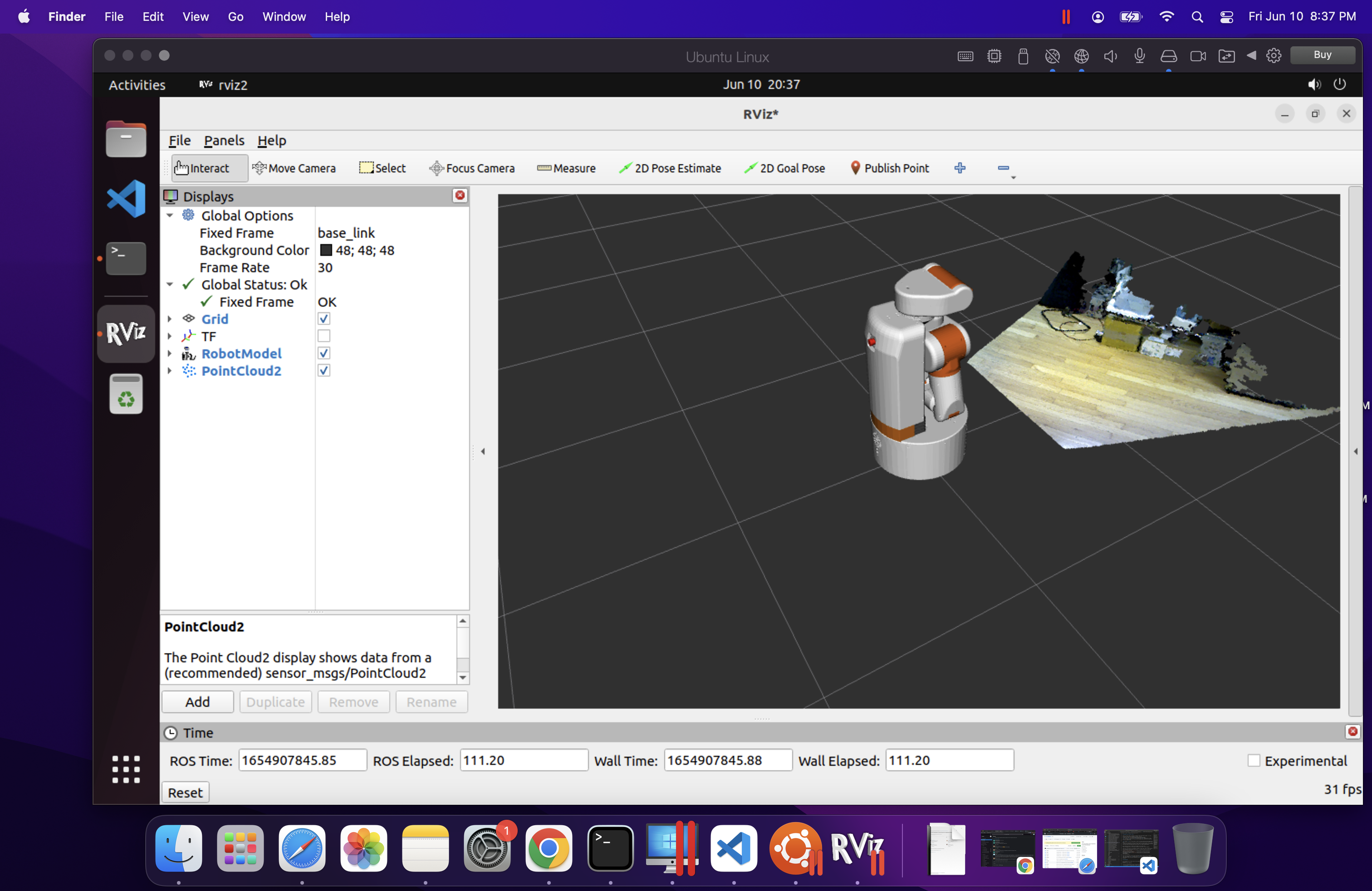

I could then create the docker image and run rviz inside it:

    docker build -t ubr:main .
    sudo rocker --net=host --x11 ubr:main rviz2

The full source of these docker configs is in the docker folder of my ubr_reloaded repository. NOTE: The updated code in the repository also adds a late-breaking change to use CycloneDDS as I’ve had numerous connectivity issues with FastDDS that I have not been able to debug.
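For readers unfamiliar with how the middleware is selected: ROS2 picks its RMW implementation at runtime from the `RMW_IMPLEMENTATION` environment variable, and `rmw_cyclonedds_cpp` is the identifier for CycloneDDS. The snippet below is a guess at what such a switch might look like in an entrypoint script, not the actual change in the repository. It also assumes the `ros-humble-rmw-cyclonedds-cpp` package has been installed in the image:

```shell
#!/bin/bash
# Sketch only: default to CycloneDDS unless the caller already chose an
# RMW implementation. Assumes ros-humble-rmw-cyclonedds-cpp is installed.
export RMW_IMPLEMENTATION="${RMW_IMPLEMENTATION:-rmw_cyclonedds_cpp}"
echo "Using RMW implementation: ${RMW_IMPLEMENTATION}"
```

The robot and the visualization machine would typically both be set the same way, since mixing DDS vendors is not guaranteed to work.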

Visualization on MacOSX

I also frequently want to be able to interact with my robot from my Macbook. While I previously installed ROS2 Foxy on my Intel-based Macbook, the situation has changed quite a bit: MacOSX has been downgraded to Tier 3 support, and the new Apple M1 silicon (and Apple’s various other locking mechanisms) makes it harder and harder to set up ROS2 directly on the Macbook.

As with the Linux desktop, I tried out Foxglove – however it is a bit limited on Mac. The MacOSX environment does not allow opening the required ports, so the direct ROS2 topic streaming does not work and you have to use rosbridge. I found I was able to visualize certain topics, but that switching between topics frequently broke.

At this point, I was about to give up, until I noticed that Ubuntu 22.04 arm64 is a Tier 1 platform for ROS2 Humble. I proceeded to install the arm64 version of Ubuntu inside Parallels (Note: I was cheap and initially tried to use the VMWare technology preview, but was unable to get the installer to even boot). There are a few tricks here as there is no arm64 desktop installer, so you have to install the server edition and then upgrade it to a desktop. There is a detailed description of this workflow on askubuntu.com. Installing ros-humble-desktop from the arm64 Debian packages was perfectly easy.
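Before installing the ROS2 packages in a VM like this, it can be worth confirming that the guest really is one of the Tier 1 Ubuntu combinations. A minimal sketch, assuming only the Ubuntu 22.04 ("jammy") amd64 and arm64 targets matter; the function name `is_humble_tier1_ubuntu` is hypothetical:

```shell
#!/bin/bash
# Hypothetical check: succeed if the codename/architecture pair is an Ubuntu
# Tier 1 combination for ROS2 Humble (22.04 "jammy" on amd64 or arm64).
# Usage: is_humble_tier1_ubuntu <codename> <dpkg-arch>
is_humble_tier1_ubuntu() {
  local codename="$1"
  local arch="$2"
  if [ "$codename" != "jammy" ]; then
    return 1
  fi
  case "$arch" in
    amd64|arm64) return 0 ;;
    *) return 1 ;;
  esac
}
```

On the VM itself, the two arguments can be taken from the system, for example `is_humble_tier1_ubuntu "$(. /etc/os-release && echo "$VERSION_CODENAME")" "$(dpkg --print-architecture)" && echo "Tier 1"`.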

rviz2 runs relatively quickly inside the Parallels VM, but overall it was not quite as quick or stable as using rocker on Ubuntu. However, it is really nice to be able to do some ROS2 development when traveling with only my Macbook.

Migration Notes

Note: each of the links in this section is to a commit or PR that implements the discussed changes.

In the core ROS API, there are only a handful of changes – and most of them are actually simply fixing potential bugs. The logging macros have been updated for security purposes and require C strings, like the old ROS1 macros did. Additionally, the macros are now better at detecting invalid substitution strings. Ament has also gotten better at detecting missing dependencies. The updates I made to robot_controllers show just how many bugs were caught by this stricter checking.

image_pipeline has had some minor updates since Foxy, mainly to improve consistency between plugins, so I needed to update some topic remappings.

Navigation has the most updates. amcl model type names have been changed since the models are now plugins. The API of costmap layers has changed significantly, and so a number of updates were required just to get the system started. I then made a more detailed pass through the documentation and found a few more issues and improvements with my config, especially around the behavior tree configuration.

I also decided to do a proper port of graceful_controller to ROS2, starting from the latest ROS1 code, since a number of improvements have been made in the year since I originally ported it to ROS2.

Next steps

There are still a number of new features to explore with Navigation2, but my immediate focus is going to shift towards getting MoveIt2 set up on the robot, since I can’t easily swap between ROS1 and ROS2 anymore after upgrading the operating system.