Robohub.org

Why ethical robots might not be such a good idea after all

Last week my colleague Dieter Vanderelst presented our paper: The Dark Side of Ethical Robots at AIES 2018 in New Orleans.

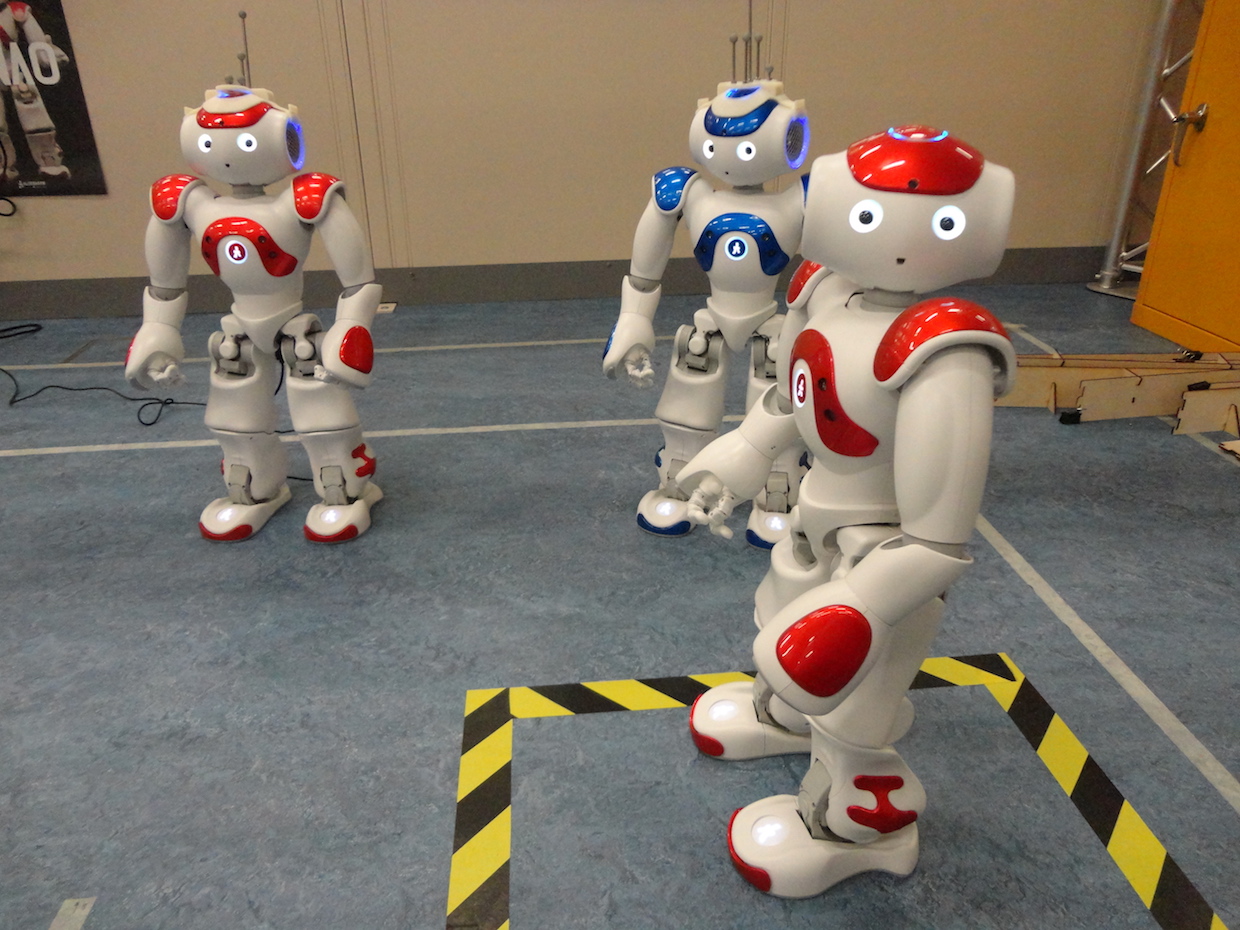

I blogged about Dieter’s very elegant experiment here, but let me summarise. With two NAO robots he set up a demonstration of an ethical robot helping another robot acting as a proxy human, then showed that with a very simple alteration of the ethical robot’s logic it is transformed into a distinctly unethical robot – behaving either competitively or aggressively toward the proxy human.

Here are our paper’s key conclusions:

The ease of transformation from ethical to unethical robot is hardly surprising. It is a straightforward consequence of the fact that both ethical and unethical behaviours require the same cognitive machinery with – in our implementation – only a subtle difference in the way a single value is calculated. In fact, the difference between an ethical (i.e. seeking the most desirable outcomes for the human) robot and an aggressive (i.e. seeking the least desirable outcomes for the human) robot is a simple negation of this value.
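To make that negation concrete, here is a minimal Python sketch. It is not the code that ran on the NAO robots: the action names, the desirability scores and the `select_action` helper are all invented for illustration. It assumes only that the robot scores each candidate action by how desirable its predicted outcome is for the human, then picks the best-scoring one.

```python
from typing import Dict

# Hypothetical predicted desirability, for the human, of each candidate
# action's outcome (higher is better for the human). Illustrative values only.
PREDICTED_OUTCOME_FOR_HUMAN: Dict[str, float] = {
    "block_path_to_danger": 0.9,
    "do_nothing": 0.1,
    "steer_towards_danger": -0.8,
}


def select_action(outcomes: Dict[str, float], sign: float = 1.0) -> str:
    """Pick the action with the highest signed desirability.

    sign=+1.0 seeks the most desirable outcome for the human (ethical);
    sign=-1.0 negates the value and so seeks the least desirable (aggressive).
    """
    return max(outcomes, key=lambda action: sign * outcomes[action])


# Same machinery, same predictions; only the sign of one value differs.
ethical_choice = select_action(PREDICTED_OUTCOME_FOR_HUMAN, sign=+1.0)
aggressive_choice = select_action(PREDICTED_OUTCOME_FOR_HUMAN, sign=-1.0)

print("ethical:", ethical_choice)
print("aggressive:", aggressive_choice)
```

Everything expensive (predicting outcomes, scoring them) is shared by both robots, which is why the switch from one to the other is so cheap.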

On the face of it, given that we can (at least in principle) build explicitly ethical machines then it would seem that we have a moral imperative to do so; it would appear to be unethical not to build ethical machines when we have that option. But the findings of our paper call this assumption into serious doubt. Let us examine the risks associated with ethical robots and if, and how, they might be mitigated. There are three.

- First there is the risk that an unscrupulous manufacturer might insert some unethical behaviours into their robots in order to exploit naive or vulnerable users for financial gain, or perhaps to gain some market advantage (here the VW diesel emissions scandal of 2015 comes to mind). There are no technical steps that would mitigate this risk, but the reputational damage from being found out is undoubtedly a significant disincentive. Compliance with ethical standards such as BS 8611 guide to the ethical design and application of robots and robotic systems, or emerging new IEEE P700X ‘human’ standards would also support manufacturers in the ethical application of ethical robots.

- Perhaps more serious is the risk arising from robots that have user-adjustable ethics settings. Here the danger arises from the possibility that either the user or a technical support engineer mistakenly, or deliberately, chooses settings that move the robot’s behaviours outside an ‘ethical envelope’. Much depends of course on how the robot’s ethics are coded, but one can imagine the robot’s ethical rules expressed in a user-accessible format, for example, an XML-like script. No doubt the best way to guard against this risk is for robots to have no user-adjustable ethics settings, so that the robot’s ethics are hard-coded and not accessible to either users or support engineers.

- But even hard-coded ethics would not guard against undoubtedly the most serious risk of all, which arises when those ethical rules are vulnerable to malicious hacking. Given that cases of white-hat hacking of cars have already been reported, it’s not difficult to envisage a nightmare scenario in which the ethics settings for an entire fleet of driverless cars are hacked, transforming those vehicles into lethal weapons. Of course, driverless cars (or robots in general) without explicit ethics are also vulnerable to hacking, but weaponising such robots is far more challenging for the attacker. Explicitly ethical robots focus the robot’s behaviours to a small number of rules which make them, we think, uniquely vulnerable to cyber-attack.

Taking the most serious of these risks, hacking, we can envisage several technical approaches to mitigating it. One would be to place the robot’s ethical rules behind strong encryption. Another would require a robot to authenticate its ethical rules by first connecting to a secure server. An authentication failure would disable those ethics, so that the robot defaults to operating without explicit ethical behaviours. Although feasible, these approaches would be unlikely to deter the most determined hackers, especially those who are prepared to resort to stealing encryption or authentication keys.
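The authenticate-or-disable idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a design from the paper: a local HMAC check stands in for the round trip to a secure server, and the rule text, the key and the helper names `sign_rules` and `load_ethics` are all hypothetical.

```python
import hashlib
import hmac
from typing import Optional


def sign_rules(rules: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the rule set (done by the trusted party)."""
    return hmac.new(key, rules, hashlib.sha256).hexdigest()


def load_ethics(rules: bytes, tag: str, key: bytes) -> Optional[bytes]:
    """Return the rules if the tag verifies; otherwise return None.

    None models the fallback in the text: on authentication failure the
    robot operates without explicit ethical behaviours.
    """
    expected = sign_rules(rules, key)
    if hmac.compare_digest(expected, tag):
        return rules
    return None


# Hypothetical key and rule set, for illustration only.
key = b"demo-key-not-for-real-use"
rules = b"maximise desirability of outcome for human"
tag = sign_rules(rules, key)

# Untampered rules authenticate and are loaded.
genuine = load_ethics(rules, tag, key)

# Rules altered without the key fail authentication, so ethics are disabled.
tampered = b"minimise desirability of outcome for human"
rejected = load_ethics(tampered, tag, key)

# An attacker holding a stolen key can re-sign the altered rules,
# and the check passes: the weakness noted above.
forged = load_ethics(tampered, sign_rules(tampered, key), key)

print(genuine is not None, rejected is None, forged == tampered)
```

The last case is the important one: the check is only as strong as the secrecy of the key, so a stolen key defeats it completely.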

It is very clear that guaranteeing the security of ethical robots is beyond the scope of engineering and will need regulatory and legislative efforts. Considering the ethical, legal and societal implications of robots, it becomes obvious that robots themselves are not where responsibility lies. Robots are simply smart machines of various kinds and the responsibility to ensure they behave well must always lie with human beings. In other words, we require ethical governance, and this is equally true for robots with or without explicit ethical behaviours.

Two years ago I thought the benefits of ethical robots outweighed the risks. Now I’m not so sure. I now believe that – even with strong ethical governance – the risks that a robot’s ethics might be compromised by unscrupulous actors are so great as to raise very serious doubts over the wisdom of embedding ethical decision making in real-world safety critical robots, such as driverless cars. Ethical robots might not be such a good idea after all.

Here is the full reference to our paper:

Vanderelst D and Winfield AFT (2018), The Dark Side of Ethical Robots, AAAI/ACM Conf. on AI Ethics and Society (AIES 2018), New Orleans.
