Robohub.org

Yesterday I looked through the eyes of a robot

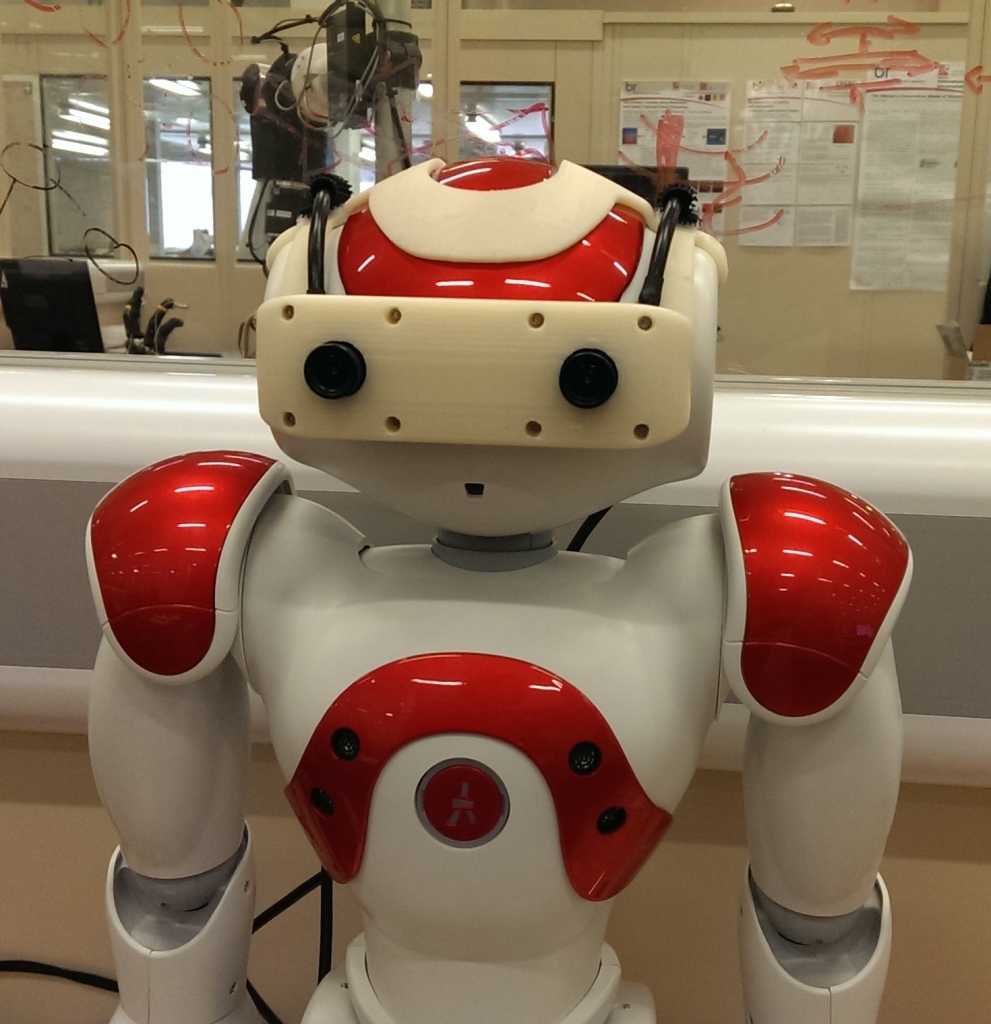

It was a NAO robot fitted with a 3D printed set of goggles, so that the robot has two real cameras on its head (the eyes of the NAO robot are not in fact cameras). I was in another room wearing an Oculus Rift headset. The Oculus was hooked up to the NAO’s goggle cameras, so that I could see through those cameras – in stereo vision.

But it was even better than that. The head positioning system of the Oculus headset was also hooked up to the robot, so I could turn my head and – in sync – the robot’s head moved. And I was standing in front of a Microsoft Kinect that was tracking my arm movements. Those movements were being sent to the NAO, so by moving my arms I was also moving the robot’s arms.
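For the curious, here is a rough sketch of what the head-tracking link might look like in code. It is not the code Paul and Peter wrote, only an illustration under stated assumptions: the headset reports yaw and pitch in degrees, the robot takes head joint angles in radians, and the joint limits below are placeholder values rather than the NAO's real ones. The function name `headset_to_head_joints` is made up for this example.

```python
import math

# Placeholder joint limits in radians. These are assumptions for
# illustration only; the real limits belong in the robot's documentation.
HEAD_YAW_LIMIT = (-2.0, 2.0)
HEAD_PITCH_LIMIT = (-0.6, 0.5)


def clamp(value, low, high):
    """Keep value inside the closed interval [low, high]."""
    return max(low, min(high, value))


def headset_to_head_joints(yaw_deg, pitch_deg):
    """Convert headset yaw/pitch (degrees) to clamped head angles (radians)."""
    yaw = clamp(math.radians(yaw_deg), *HEAD_YAW_LIMIT)
    pitch = clamp(math.radians(pitch_deg), *HEAD_PITCH_LIMIT)
    return yaw, pitch


# Turning my head 30 degrees sideways and looking straight down:
# the yaw passes through, the pitch stops at the assumed limit.
print(headset_to_head_joints(30.0, -90.0))
```

The clamping matters because a human neck and a robot neck do not share the same range of motion, so the robot has to stop at its own limits even when the wearer keeps turning.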

All together this made for a pretty compelling immersive experience. I was able to look down while moving my (robot) arms and see them pretty much where you would expect to see your own arms. The illusion was further strengthened when Peter placed a mirror in front of the NAO robot, so I could see my (robot) self moving in the mirror. Then it got weird. Peter asked me to open my hand and placed a paper cup into it. I instinctively looked down and was momentarily shocked not to see the cup in my hand. That made me realise how quickly – within a couple of minutes of donning the headset – I was adjusting to the new robot me.

This setup, developed here in the BRL by my colleagues Paul Bremner and Pete Gibbons, is part of a large EPSRC project called Being There. Paul and Peter are investigating the very interesting question of how humans interact with and via teleoperated robots in shared social spaces. I think teleoperated robot avatars will be hugely important in the near future – more so than fully autonomous robots. But our robot surrogates will not look like a younger, buffer Bruce Willis. They will look like robots. How will we interact with these surrogate robots – robots with human intelligences, human personalities – seemingly with human souls? Will they be treated with the same level of respect that would be accorded their humans if they were actually there, in the flesh? Or will they be despised as voyeuristic: an uninvited webcam on wheels?

Here is a YouTube video of an earlier version of this setup, without the goggles or Kinect:

I didn’t get the feeling of what it is like to be a robot, but it’s a step in that direction.

tags: BRL, kinect, NAO