Robohub.org

3D display could soon bring touch to the digital world

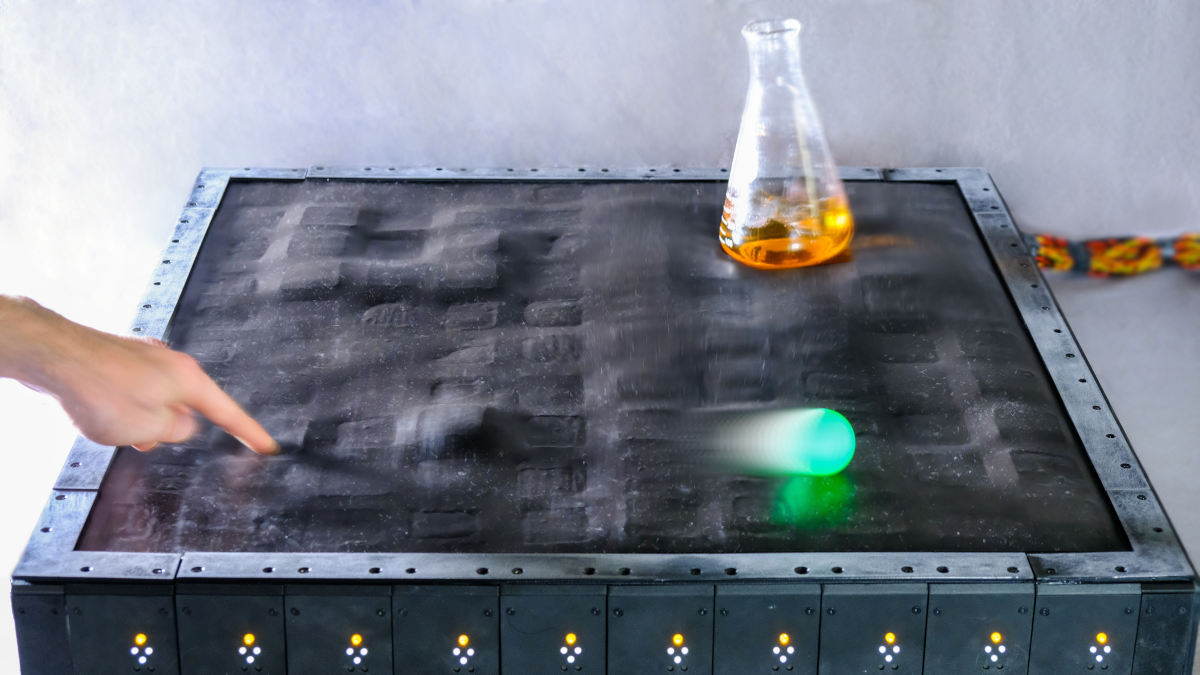

Copyright: Brian Johnson

Researchers at the Max Planck Institute for Intelligent Systems and the University of Colorado Boulder have developed a soft shape display, a robot that can rapidly and precisely change its surface geometry to interact with objects and liquids, react to human touch, and display letters and numbers – all at the same time. The display demonstrates high-performance applications and could one day appear on the factory floor, in medical laboratories, or in your own home.

Imagine an iPad that’s more than just an iPad—with a surface that can morph and deform, allowing you to draw 3D designs, create haiku that jump out from the screen and even hold your partner’s hand from an ocean away.

That’s the vision of a team of engineers from the University of Colorado Boulder (CU Boulder) and the Max Planck Institute for Intelligent Systems (MPI-IS) in Stuttgart, Germany. In a new study published in Nature Communications, they describe a one-of-a-kind shape-shifting display that fits on a card table. The device is made from a 10-by-10 grid of soft robotic “muscles” that can sense outside pressure and pop up to create patterns. It’s precise enough to generate scrolling text and fast enough to shake a chemistry beaker filled with fluid.

It may also deliver something even rarer: the sense of touch in a digital age.

“As technology has progressed, we started with sending text over long distances, then audio and later video,” said Brian Johnson, one of two lead authors of the new study, who earned his doctorate in mechanical engineering at CU Boulder in 2022 and is now a postdoctoral researcher at the Max Planck Institute for Intelligent Systems. “But we’re still missing touch.”

The innovation builds on a class of soft robots pioneered by a team led by Christoph Keplinger, formerly an assistant professor of mechanical engineering at CU Boulder and now a director at MPI-IS. They’re called Hydraulically Amplified Self-Healing ELectrostatic (HASEL) actuators. The prototype display isn’t ready for the market yet. But the researchers envision that, one day, similar technologies could lead to sensory gloves for virtual gaming or a smart conveyor belt that can undulate to sort apples from bananas.

“You could imagine arranging these sensing and actuating cells into any number of different shapes and combinations,” said Mantas Naris, co-lead author of the paper and a doctoral student in the Paul M. Rady Department of Mechanical Engineering. “There’s really no limit to what these technologies could, ultimately, lead to.”

Playing the accordion

The project has its origins in the search for a different kind of technology: synthetic organs.

In 2017, researchers led by Mark Rentschler, professor of mechanical engineering and biomedical engineering, secured funding from the National Science Foundation to develop what they call sTISSUE—squishy organs that behave and feel like real human body parts but are made entirely out of plastic-like materials.

“You could use these artificial organs to help develop medical devices or surgical robotic tools for much less cost than using real animal tissue,” said Rentschler, a co-author of the new study.

In developing that technology, however, the team landed on the idea of a tabletop display.

The group’s design is about the size of a Scrabble game board and, like one of those boards, is composed of small squares arranged in a grid. In this case, each one of the 100 squares is an individual HASEL actuator. The actuators are made of plastic pouches shaped like tiny accordions. When a voltage is applied to them, fluid shifts around inside the pouches, causing the accordion to expand and jump up.
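To make the grid idea concrete, here is a minimal sketch of how scrolling a pattern across a 10-by-10 array of cells could be modeled in software. It is an illustration only, not the researchers' control code: the names `blank_frame`, `scroll_frame` and `render` are hypothetical, and each cell is simplified to "raised" or "lowered" rather than a continuous height.

```python
GRID_SIZE = 10  # the article describes a 10-by-10 grid of actuators


def blank_frame():
    """Return a frame in which every cell is lowered (False)."""
    return [[False] * GRID_SIZE for _ in range(GRID_SIZE)]


def scroll_frame(pattern, offset):
    """Return the 10-column window of `pattern` that starts at column `offset`.

    `pattern` is a list of GRID_SIZE rows of booleans and may be wider than
    the grid. Columns that fall outside the pattern stay lowered.
    """
    frame = blank_frame()
    for row in range(GRID_SIZE):
        for col in range(GRID_SIZE):
            src = offset + col
            if 0 <= src < len(pattern[row]):
                frame[row][col] = pattern[row][src]
    return frame


def render(frame):
    """Draw a frame as text: '#' for a raised cell, '.' for a lowered one."""
    return "\n".join(
        "".join("#" if cell else "." for cell in row) for row in frame
    )


# Hypothetical example: a 20-column pattern with one vertical bar at column 12.
pattern = [[col == 12 for col in range(20)] for _ in range(GRID_SIZE)]
frame = scroll_frame(pattern, offset=5)
print(render(frame))
```

Stepping `offset` up by one for each new frame would slide the bar from right to left across the grid, which is the basic idea behind scrolling text on a grid of cells.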

The actuators also include soft, magnetic sensors that can detect when you poke them. That allows for some fun activities, said Johnson.

“Because the sensors are magnet-based, we can use a magnetic wand to draw on the surface of the display,” he said.

Hear that?

Other research teams have developed similar smart tablets, but the CU Boulder display is softer, takes up a lot less room and is much faster. Each of its robotic muscles can move up to 3,000 times per minute, or 50 times per second.

The researchers are focusing now on shrinking the actuators to increase the resolution of the display—almost like adding more pixels to a computer screen.

“Imagine if you could load an article onto your phone, and it renders as Braille on your screen,” Naris said.

The group is also working to flip the display inside out. That way, engineers could design a glove that pokes your fingertips, allowing you to “feel” objects in virtual reality.

And, Rentschler said, the display can bring something else: a little peace and quiet. “Our system is, essentially, silent. The actuators make almost no noise.”

Other CU Boulder co-authors of the new study include Nikolaus Correll, associate professor in the Department of Computer Science; Sean Humbert, professor of mechanical engineering; mechanical engineering graduate students Vani Sundaram, Angella Volchko and Khoi Ly; and alumni Shane Mitchell, Eric Acome and Nick Kellaris. Christoph Keplinger also served as a co-author in both of his roles at CU Boulder and MPI-IS.