Robohub.org

3D printed robotic prosthetic hand makes Intel finals

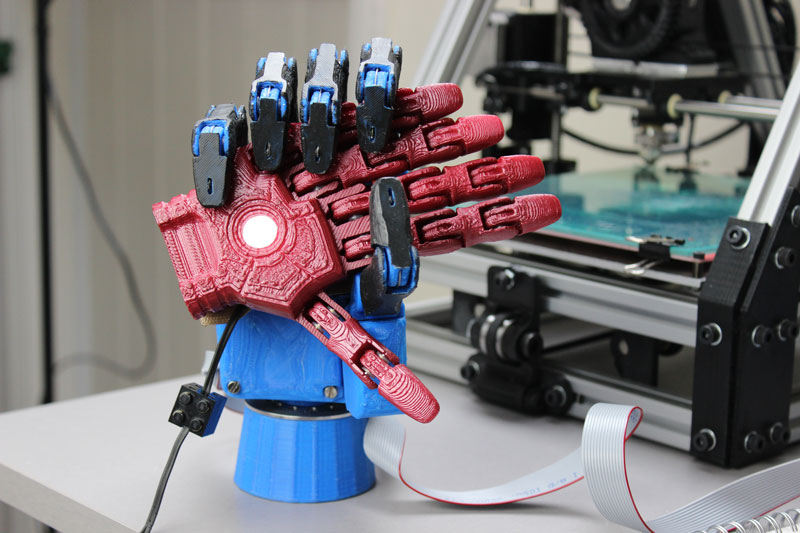

Our 3D-printed prosthetic hand project has made the global finals of Intel’s Make it Wearable competition! Open Bionics came out of the Open Hand Project, where we developed a 3D printed robotic hand that would cost amputees less than $1,000.

Our idea is to significantly reduce the cost of prosthetic hands by using 3D printing. We wanted to create a sustainable way for hand amputees to get affordable prosthetics, so we submitted a video explaining what we’re trying to achieve, and we were selected as finalists!

As finalists we have already won $50k and will compete to win the Make it Wearable competition’s $500k first prize. We also get to fly to the United States for mentoring and training as one of ten projects selected.

Open Bionics is open-source, which means that all of the know-how needed to create a robotic prosthetic hand will eventually be posted on our website. The idea is that potentially anyone can improve and customise the designs themselves, and then upload them for everyone to share.

Our prosthetic hand offers much of the functionality of a human hand. It uses electric motors instead of muscles and steel cables instead of tendons. 3D printed plastic parts work like bones and a rubber coating acts as the skin. All of these parts are controlled by electronics to give the hand natural movement, so it can handle all sorts of different objects.

We’ve made some great progress in the last few weeks: the circuit boards are working and controlling the motors. All that remains now is a few more tweaks to the hand design and writing the code. I’ve enlisted the help of an embedded software developer who I work with at the Bristol Robotics Laboratory (BRL), so we’ll be working on this over the next few weeks. The aim is to send out our prototype hand before the end of the Make It Wearable competition and receive some useful feedback on its performance.

We’re also working on a mini robot hand called “Adams” for small humanoid robots. It is 3D printed in one piece from a new flexible material and requires very little assembly. We plan to scale the Adams hand up soon, so we can create a new hand for children.

We’re currently based in the incubator at the Bristol Robotics Laboratory (BRL). For more info, visit www.openbionics.com.

