Robohub.org

New programmable materials can sense their own movements

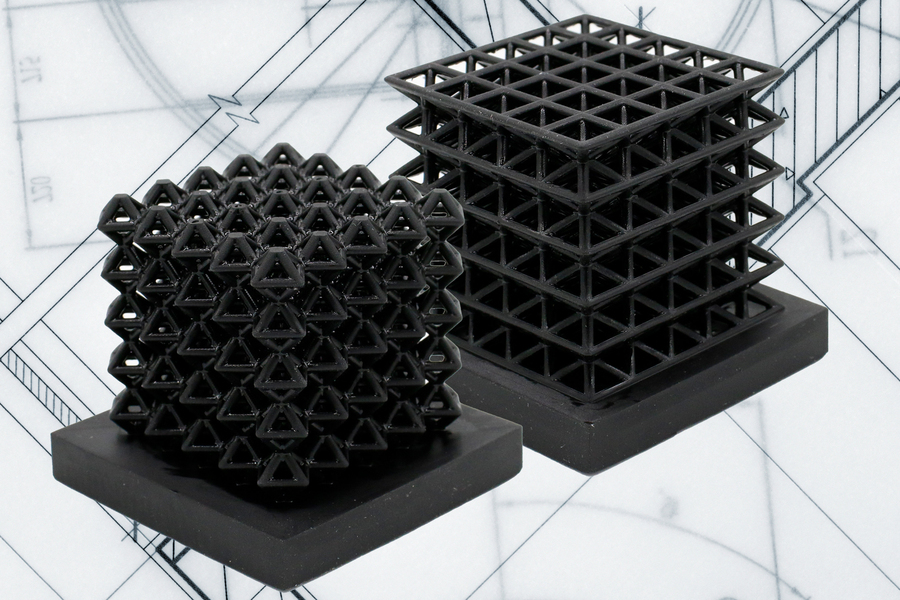

This image shows 3D-printed crystalline lattice structures with air-filled channels, known as “fluidic sensors,” embedded into the structures. The indents in the middle of the lattices are the sensors’ outlet holes. These air channels let the researchers measure how much force the lattices experience when they are compressed or flattened. Image: Courtesy of the researchers, edited by MIT News

By Adam Zewe | MIT News Office

MIT researchers have developed a method for 3D printing materials with tunable mechanical properties that sense how they are moving and interacting with the environment. The researchers create these sensing structures using just one material and a single run on a 3D printer.

To accomplish this, the researchers began with 3D-printed lattice materials and incorporated networks of air-filled channels into the structure during the printing process. By measuring how the pressure changes within these channels when the structure is squeezed, bent, or stretched, engineers can receive feedback on how the material is moving.

The method opens opportunities for embedding sensors within architected materials, a class of materials whose mechanical properties are programmed through form and composition. Controlling the geometry of features in architected materials alters their mechanical properties, such as stiffness or toughness. For instance, in cellular structures like the lattices the researchers print, a denser network of cells makes a stiffer structure.

This technique could someday be used to create flexible soft robots with embedded sensors that enable the robots to understand their posture and movements. It might also be used to produce wearable smart devices that provide feedback on how a person is moving or interacting with their environment.

“The idea with this work is that we can take any material that can be 3D-printed and have a simple way to route channels throughout it so we can get sensorization with structure. And if you use really complex materials, then you can have motion, perception, and structure all in one,” says co-lead author Lillian Chin, a graduate student in the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL).

Joining Chin on the paper are co-lead author Ryan Truby, a former CSAIL postdoc who is now an assistant professor at Northwestern University; Annan Zhang, a CSAIL graduate student; and senior author Daniela Rus, the Andrew and Erna Viterbi Professor of Electrical Engineering and Computer Science and director of CSAIL. The paper is published today in Science Advances.

Architected materials

The researchers focused their efforts on lattices, a type of “architected material,” which exhibits customizable mechanical properties based solely on its geometry. For instance, changing the size or shape of cells in the lattice makes the material more or less flexible.

While architected materials can exhibit unique properties, integrating sensors within them is challenging given the materials’ often sparse, complex shapes. Placing sensors on the outside of the material is typically a simpler strategy than embedding sensors within the material. However, sensors placed on the outside may not give a complete description of how the material is deforming or moving.

Instead, the researchers used 3D printing to incorporate air-filled channels directly into the struts that form the lattice. When the structure is moved or squeezed, those channels deform and the volume of air inside changes. The researchers can measure the corresponding change in pressure with an off-the-shelf pressure sensor, which gives feedback on how the material is deforming.
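The link between channel volume and measured pressure can be illustrated with a minimal sketch. It assumes the channel behaves as a sealed volume of ideal gas at constant temperature (Boyle's law, p0 * V0 = p1 * V1), which is a simplification the article does not state. The function name and the numbers are hypothetical and chosen only for illustration.

```python
def pressure_after_deformation(p0_kpa, v0_mm3, v1_mm3):
    """Return the channel pressure after its volume changes from v0 to v1.

    Assumption (not from the article): the channel is sealed and the air
    inside is an ideal gas at constant temperature, so p0 * v0 = p1 * v1.
    """
    if v0_mm3 <= 0 or v1_mm3 <= 0:
        raise ValueError("volumes must be positive")
    return p0_kpa * v0_mm3 / v1_mm3


# Hypothetical numbers: a channel at atmospheric pressure is squeezed
# so that it loses 10 percent of its volume.
p0 = 101.325   # kPa, starting pressure
v0 = 100.0     # mm^3, undeformed channel volume
v1 = 90.0      # mm^3, channel volume after compression

p1 = pressure_after_deformation(p0, v0, v1)
print(f"pressure rise: {p1 - p0:.2f} kPa")
```

Under these assumptions, compressing the channel raises the pressure and stretching it lowers the pressure, so the sign of the change indicates the direction of the deformation.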

Because they are incorporated into the material, these “fluidic sensors” offer advantages over conventional sensor materials.

This image shows a soft robotic finger made from two cylinders composed of a new class of materials known as handed shearing auxetics (HSAs), which bend and rotate. Air-filled channels embedded within the HSA structure connect to pressure sensors (pile of chips in the foreground), which actively measure the pressure change of these “fluidic sensors.” Image: Courtesy of the researchers

“Sensorizing” structures

The researchers incorporate channels into the structure using digital light processing 3D printing. In this method, the structure is drawn out of a pool of resin and hardened into a precise shape using projected light. An image is projected onto the wet resin and areas struck by the light are cured.

But as the process continues, the resin remains stuck inside the sensor channels. The researchers had to remove excess resin before it was cured, using a mix of pressurized air, vacuum, and intricate cleaning.

They used this process to create several lattice structures and demonstrated how the air-filled channels generated clear feedback when the structures were squeezed and bent.

“Importantly, we only use one material to 3D print our sensorized structures. We bypass the limitations of other multimaterial 3D printing and fabrication methods that are typically considered for patterning similar materials,” says Truby.

Building off these results, they also incorporated sensors into handed shearing auxetics, or HSAs, a new class of materials developed for motorized soft robots. HSAs can be twisted and stretched simultaneously, which enables them to be used as effective soft robotic actuators. But they are difficult to “sensorize” because of their complex forms.

They 3D printed an HSA soft robot capable of several movements, including bending, twisting, and elongating. They ran the robot through a series of movements for more than 18 hours and used the sensor data to train a neural network that could accurately predict the robot’s motion.
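The idea of learning a mapping from sensor readings to motion can be sketched in a few lines. This is not the researchers' neural network: it swaps in an ordinary least-squares line fit from a single pressure channel to a single bend angle, and the data, units, and function names are hypothetical.

```python
def fit_line(pressures, angles):
    """Closed-form least-squares fit of angle = slope * pressure + intercept."""
    n = len(pressures)
    mean_p = sum(pressures) / n
    mean_a = sum(angles) / n
    # Spread of the pressure readings and their covariance with the angles.
    spp = sum((p - mean_p) ** 2 for p in pressures)
    spa = sum((p - mean_p) * (a - mean_a) for p, a in zip(pressures, angles))
    slope = spa / spp
    intercept = mean_a - slope * mean_p
    return slope, intercept


def predict_angle(model, pressure):
    """Estimate the bend angle for a new pressure reading."""
    slope, intercept = model
    return slope * pressure + intercept


# Hypothetical calibration data: pressure change (kPa) recorded while the
# robot was driven to known bend angles (degrees).
pressures = [0.0, 1.0, 2.0, 3.0, 4.0]
angles = [2.0, 5.0, 8.0, 11.0, 14.0]

model = fit_line(pressures, angles)
print(predict_angle(model, 2.5))
```

A neural network plays the same role as the fitted line here, but it can combine many channels and capture nonlinear relationships between pressure and motion.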

Chin was impressed by the results: the fluidic sensors were so accurate that she had difficulty distinguishing between the signals the researchers sent to the motors and the data that came back from the sensors.

“Materials scientists have been working hard to optimize architected materials for functionality. This seems like a simple, yet really powerful idea to connect what those researchers have been doing with this realm of perception. As soon as we add sensing, then roboticists like me can come in and use this as an active material, not just a passive one,” she says.

“Sensorizing soft robots with continuous skin-like sensors has been an open challenge in the field. This new method provides accurate proprioceptive capabilities for soft robots and opens the door for exploring the world through touch,” says Rus.

In the future, the researchers look forward to finding new applications for this technique, such as creating novel human-machine interfaces or soft devices that have sensing capabilities within the internal structure. Chin is also interested in utilizing machine learning to push the boundaries of tactile sensing for robotics.

“The use of additive manufacturing for directly building robots is attractive. It allows for the complexity I believe is required for generally adaptive systems,” says Robert Shepherd, associate professor at the Sibley School of Mechanical and Aerospace Engineering at Cornell University, who was not involved with this work. “By using the same 3D printing process to build the form, mechanism, and sensing arrays, their process will significantly contribute to researchers aiming to build complex robots simply.”

This research was supported, in part, by the National Science Foundation, the Schmidt Science Fellows Program in partnership with the Rhodes Trust, an NSF Graduate Fellowship, and the Fannie and John Hertz Foundation.
