Robohub.org

Do humans get lazier when robots help with tasks?

Image/Shutterstock.com

By Angharad Brewer Gillham, Frontiers science writer

‘Social loafing’ happens when members of a team put in less effort because they know others will cover for them. Scientists investigating whether this also occurs in teams that combine robots and humans found that humans carrying out quality assurance tasks spotted fewer errors when they had been told that robots had already checked a piece, suggesting that they relied on the robots and paid less attention to the work.

Now that improvements in technology mean that some robots work alongside humans, there is evidence that those humans have learned to see them as team-mates, and teamwork can have negative as well as positive effects on people’s performance. People sometimes relax, letting their colleagues do the work instead. This is called ‘social loafing’, and it’s common where people know their contribution won’t be noticed or they’ve acclimatized to another team member’s high performance. Scientists at the Technical University of Berlin investigated whether humans engage in social loafing when they work with robots.

“Teamwork is a mixed blessing,” said Dietlind Helene Cymek, first author of the study in Frontiers in Robotics and AI. “Working together can motivate people to perform well but it can also lead to a loss of motivation because the individual contribution is not as visible. We were interested in whether we could also find such motivational effects when the team partner is a robot.”

A helping hand

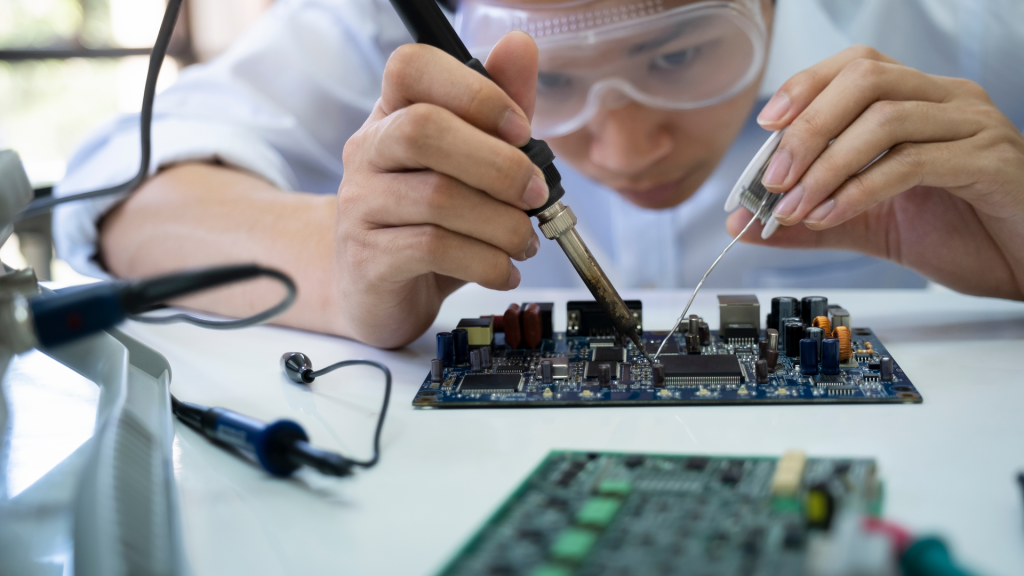

The scientists tested their hypothesis using a simulated industrial defect-inspection task: looking at circuit boards for errors. They showed images of circuit boards to 42 participants. The images were blurred, and participants could only see a sharpened view by holding a mouse tool over them. This allowed the scientists to track how participants inspected each board.
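Because the sharp view follows the mouse tool, a log of tool positions is enough to estimate how much of a board a participant uncovered. The sketch below illustrates that idea only; the article does not describe the study's actual software, so the function name `searched_fraction`, the circular tool shape, and the pixel-grid representation are all assumptions made for illustration.

```python
import math
from typing import Iterable, Tuple


def searched_fraction(
    positions: Iterable[Tuple[float, float]],
    width: int,
    height: int,
    radius: float,
) -> float:
    """Return the fraction of a width x height pixel image revealed at least once.

    Hypothetical model: each logged mouse position (cx, cy) sharpens every
    pixel within `radius` of it. Pixels revealed more than once count once.
    """
    revealed = set()
    reach = math.ceil(radius)
    for cx, cy in positions:
        # Only scan the bounding box of the tool, clipped to the image.
        x_start = max(0, math.floor(cx) - reach)
        x_stop = min(width, math.floor(cx) + reach + 1)
        y_start = max(0, math.floor(cy) - reach)
        y_stop = min(height, math.floor(cy) + reach + 1)
        for x in range(x_start, x_stop):
            for y in range(y_start, y_stop):
                if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2:
                    revealed.add((x, y))
    return len(revealed) / (width * height)
```

A measure like this captures where the tool went, not whether the participant processed what it revealed, which is the distinction the researchers draw later in the article.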

Half of the participants were told that they were working on circuit boards that had been inspected by a robot called Panda. Although these participants did not work directly with Panda, they had seen the robot and could hear it while they worked. After examining the boards for errors and marking them, all participants were asked to rate their own effort, how responsible they felt for the task, and how well they had performed.

Looking but not seeing

At first sight, it looked as if the presence of Panda had made no difference: there was no statistically significant difference between the groups in the time spent inspecting the circuit boards or the area searched. Participants in both groups rated their feelings of responsibility for the task, effort expended, and performance similarly.

But when the scientists looked more closely at participants’ error rates, they realized that the participants working with Panda were catching fewer defects later in the task, when they’d already seen that Panda had successfully flagged many errors. This could reflect a ‘looking but not seeing’ effect, where people get used to relying on something and become less mentally engaged with the task. Although the participants thought they were paying an equivalent amount of attention, subconsciously they assumed that Panda hadn’t missed any defects.

“It is easy to track where a person is looking, but much harder to tell whether that visual information is being sufficiently processed at a mental level,” said Dr Linda Onnasch, senior author of the study.

The experimental set-up with the human-robot team. Image supplied by the authors.

Safety at risk?

The authors warned that this could have safety implications. “In our experiment, the subjects worked on the task for about 90 minutes, and we already found that fewer quality errors were detected when they worked in a team,” said Onnasch. “In longer shifts, when tasks are routine and the working environment offers little performance monitoring and feedback, the loss of motivation tends to be much greater. In manufacturing in general, but especially in safety-related areas where double checking is common, this can have a negative impact on work outcomes.”

The scientists pointed out that their test has some limitations. While participants were told they were in a team with the robot and shown its work, they did not work directly with Panda. Additionally, social loafing is hard to simulate in the laboratory because participants know they are being watched.

“The main limitation is the laboratory setting,” Cymek explained. “To find out how big the problem of loss of motivation is in human-robot interaction, we need to go into the field and test our assumptions in real work environments, with skilled workers who routinely do their work in teams with robots.”