Robohub.org

Tree cavity inspection using drones

The importance of conserving biodiversity in forest ecosystems is widely recognized. Tree cavities provide many animal species, such as birds, mammals and beetles, with shelter, nesting sites or places for larval development. However, trees of low economic value, often those that already have cavities or are prone to forming them, are routinely removed. As a consequence, many cavity-dependent species are now endangered, and better conservation methods are needed to keep further species from becoming threatened.

Effective conservation requires a better understanding of tree cavities. However, large-scale studies are rare, and detailed data are costly to collect.

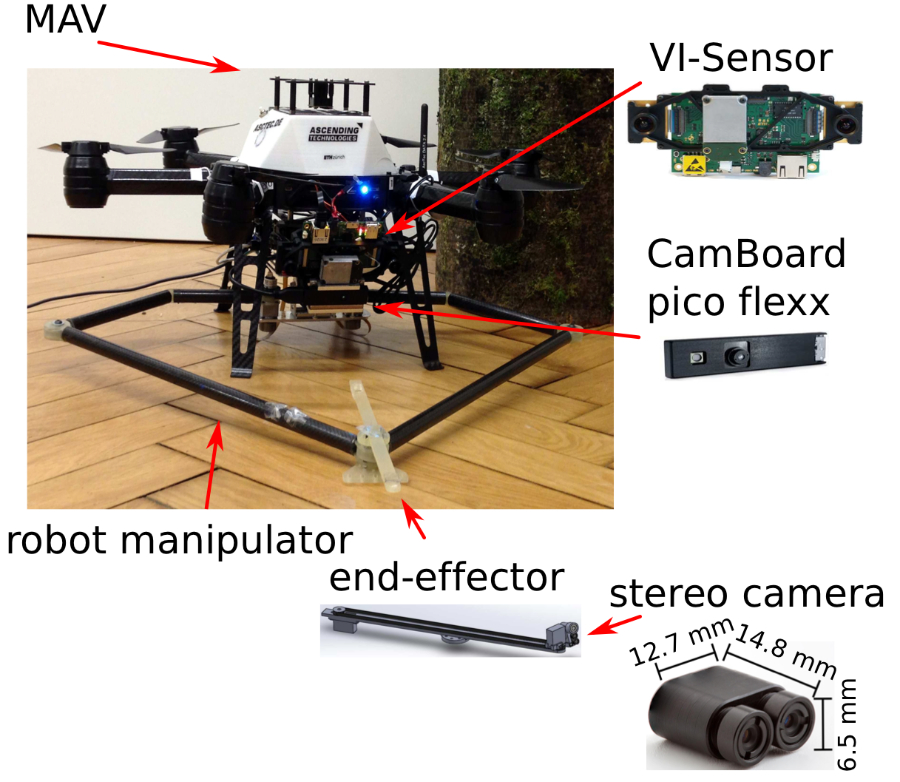

In this work, we focus on woodpecker cavities, which are the most common type of tree cavity. These cavities have an opening of 3–12 cm that leads into a deep hollow inside the tree. We present a system based on a Micro Aerial Vehicle (MAV) equipped with a manipulator to inspect tree cavities and reconstruct them in 3D. The MAV carries a visual-inertial sensor, a depth camera, and a parallel manipulator with an actuated micro-stereo camera at its end-effector, as shown in Figure 1.
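To make the sensing geometry a little more concrete, here is a minimal sketch of how a rectified stereo pair can recover depth and how that depth can turn an opening's pixel width into a metric size. This is the textbook pinhole relation Z = f·B/d, not necessarily the processing pipeline used by the authors. The `StereoCamera` class, the focal length and baseline values, and the `is_plausible_woodpecker_opening` filter are all hypothetical; only the 3–12 cm range comes from the text.

```python
from dataclasses import dataclass

# Woodpecker cavity opening range quoted in the text, in metres.
MIN_OPENING_M = 0.03
MAX_OPENING_M = 0.12


@dataclass
class StereoCamera:
    """Hypothetical rectified pinhole stereo pair (values are illustrative)."""
    focal_px: float    # focal length in pixels
    baseline_m: float  # distance between the two optical centres, in metres

    def depth_from_disparity(self, disparity_px: float) -> float:
        """Standard rectified-stereo triangulation: Z = f * B / d."""
        if disparity_px <= 0:
            raise ValueError("disparity must be positive")
        return self.focal_px * self.baseline_m / disparity_px


def metric_width(width_px: float, depth_m: float, focal_px: float) -> float:
    """Back-project an image-plane width to metres at a given depth (pinhole model)."""
    return width_px * depth_m / focal_px


def is_plausible_woodpecker_opening(width_m: float) -> bool:
    """Hypothetical sanity check against the 3-12 cm opening range."""
    return MIN_OPENING_M <= width_m <= MAX_OPENING_M
```

For example, with an assumed 400 px focal length and 1 cm baseline, a 20 px disparity corresponds to a depth of 0.2 m, and an opening spanning 120 px at that depth is about 6 cm wide, which falls inside the woodpecker range. A short baseline like this is one reason a *micro*-stereo camera can still be useful at close range, where disparities remain large.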

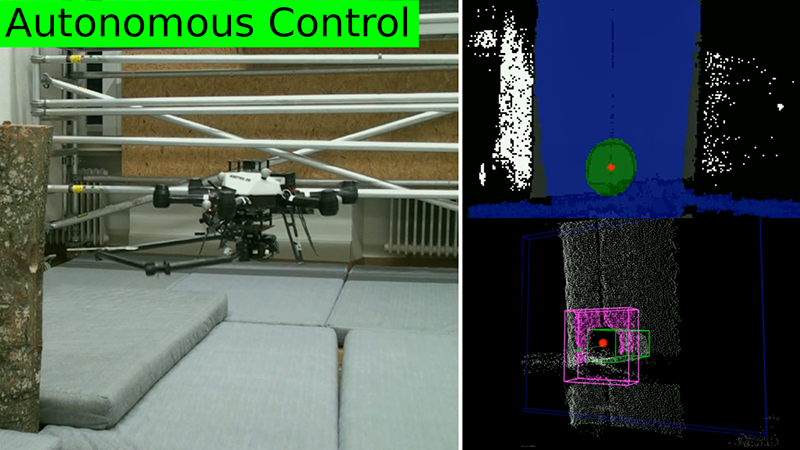

With minimal user intervention, the system can detect and track a tree cavity. Once the user confirms the target through a Graphical User Interface (GUI), the inspection process starts.
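The semi-autonomous workflow described above (detect, track, wait for the user's confirmation, then inspect) can be sketched as a small state machine. The phase names, the `InspectionMission` class and the rule that a lost track falls back to searching are hypothetical illustrations, not the authors' implementation; the one property taken from the text is that inspection begins only after the user confirms.

```python
from enum import Enum, auto


class Phase(Enum):
    SEARCHING = auto()   # no cavity detected yet
    TRACKING = auto()    # cavity detected and being tracked
    INSPECTING = auto()  # user confirmed; manipulator inspection running
    DONE = auto()        # inspection finished


class InspectionMission:
    """Hypothetical supervisor for the detect -> track -> confirm -> inspect flow."""

    def __init__(self) -> None:
        self.phase = Phase.SEARCHING

    def on_detection(self, cavity_visible: bool) -> None:
        if self.phase is Phase.SEARCHING and cavity_visible:
            self.phase = Phase.TRACKING
        elif self.phase is Phase.TRACKING and not cavity_visible:
            # Assumed behaviour: track lost before confirmation, so search again.
            self.phase = Phase.SEARCHING
        # Detections are ignored in every other phase.

    def on_user_confirm(self) -> None:
        # Inspection starts only on explicit GUI confirmation, and only
        # while a cavity is actually being tracked.
        if self.phase is Phase.TRACKING:
            self.phase = Phase.INSPECTING

    def on_inspection_finished(self) -> None:
        if self.phase is Phase.INSPECTING:
            self.phase = Phase.DONE
```

Keeping the confirmation as a guarded transition means a stray click in the GUI cannot start an inspection when no cavity is being tracked.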

The proposed approach has been tested both in a lab environment and outdoors on real tree cavities.

More details on this approach can be found in our paper: K. Steich, M. Kamel, P. Beardsley, M. K. Obrist, R. Siegwart and T. Lachat, “Tree Cavity Inspection Using Aerial Robots,” in Proc. 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS).

Researchers:

Kelly Steich, Mina Kamel, Paul Beardsley, Martin Obrist, Roland Siegwart and Thibault Lachat.
