Robohub.org

A multi-armed robot for assisting with agricultural tasks

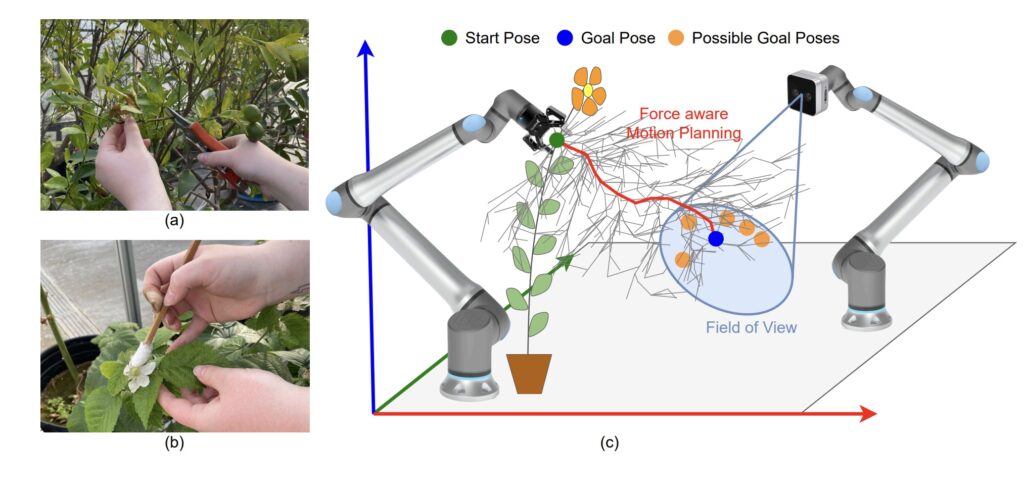

Humans often use one hand to grasp the branch for better accessibility, while the other hand is used to perform primary tasks like (a) branch pruning and (b) hand pollination of the flower. (c) An overview of the approach used by Madhav and colleagues, where one robot manipulates the branch to move the flower to the field of view of another robot by planning a force-aware path. Figure from Force Aware Branch Manipulation To Assist Agricultural Tasks.

In their paper Force Aware Branch Manipulation To Assist Agricultural Tasks, which was presented at IROS 2025, Madhav Rijal, Rashik Shrestha, Trevor Smith, and Yu Gu proposed a methodology to safely manipulate branches to aid various agricultural tasks. We interviewed Madhav to find out more.

Could you give us an overview of the problem you were addressing in the paper?

Madhav Rijal (MR): Our work is motivated by StickBug [1], a multi-armed robotic system for precision pollination in greenhouse environments. One of the main challenges StickBug faces is that many flowers are partially or fully hidden within the plant canopy, making them difficult to detect and reach directly for pollination. This challenge also arises in other agricultural tasks, such as fruit harvesting, where target fruits may be occluded by surrounding branches and foliage.

To address this, we study how one robot arm can safely manipulate branches so that these occluded flowers can be brought into the field of view or reachable workspace of another robot arm. This is a challenging manipulation problem because plant branches are deformable, fragile, and vary significantly from one branch to another. In addition, unlike pick-and-place tasks, where objects move freely in space, branches remain attached to the plant, which imposes additional motion constraints during manipulation. If the robot moves a branch without accounting for these constraints and safety limits, it can apply excessive force and damage the branch.

So, the core problem we addressed in this paper is: how can a robot safely manipulate branches to reveal hidden flowers while remaining aware of interaction forces and minimizing damage?

How did your approach go about tackling the problem?

MR: Our approach [2] combines motion planning that accounts for branch constraints with real-time force feedback.

First, we generate a feasible manipulation path in the workspace using a planner based on RRT*, an asymptotically optimal variant of the rapidly exploring random tree (RRT) algorithm. The planner respects the geometric constraints of the branch and the task requirements. We model branches as deformable linear objects and use a geometric heuristic to identify configurations that are safer to manipulate.

Then, during execution, we monitor the interaction force using a force sensor mounted on the manipulator. If the measured force exceeds a predefined safe threshold, the system does not continue along the same path. Instead, it re-plans the motion online and searches for an alternative path or goal configuration that can reduce branch stress while still achieving the task.

So, the key idea is that the robot does not plan only for reachability. It also adapts its motion based on the physical response of the branch during manipulation.
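As an editorial illustration (not the authors' implementation), the monitor-and-replan loop described above can be sketched in Python. The function names, the toy force model, the toy replanner, and the `max_replans` cap are all hypothetical; only the 40 N limit comes from the interview. For simplicity, the sketch evaluates the force at a waypoint before accepting it, whereas a real system would read the sensor continuously while moving.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float, float]


def execute_with_force_monitoring(
    start: Point,
    path: Sequence[Point],
    read_force: Callable[[Point], float],
    replan: Callable[[Point, Point], Sequence[Point]],
    force_limit: float = 40.0,  # N, the threshold quoted in the interview
    max_replans: int = 20,      # hypothetical cap, not taken from the paper
) -> Tuple[List[Point], int, bool]:
    """Follow `path`, replanning whenever |force| exceeds `force_limit`.

    Returns (waypoints reached, replans used, whether the path was completed).
    """
    reached = [start]
    remaining = list(path)
    replans = 0
    while remaining:
        target = remaining.pop(0)
        if abs(read_force(target)) > force_limit:
            # Unsafe reading: hold the last safe waypoint and request a new path.
            if replans >= max_replans:
                return reached, replans, False
            replans += 1
            remaining = list(replan(reached[-1], target))
            continue
        reached.append(target)
    return reached, replans, True


# Toy stand-ins (assumptions): force grows with sideways deflection |y|,
# and the replanner halves the deflection of the rejected waypoint.
GOAL: Point = (0.3, 0.0, 0.0)


def toy_force(p: Point) -> float:
    return 200.0 * abs(p[1])


def toy_replan(current: Point, rejected: Point) -> List[Point]:
    return [(rejected[0], rejected[1] / 2.0, rejected[2]), GOAL]


reached, replans, done = execute_with_force_monitoring(
    start=(0.0, 0.0, 0.0),
    path=[(0.1, 0.0, 0.0), (0.2, 0.3, 0.0), GOAL],
    read_force=toy_force,
    replan=toy_replan,
)
print(replans, done, reached[-1])  # prints: 1 True (0.3, 0.0, 0.0)
```

In this toy run the second waypoint would produce 60 N, so the loop replans once and reaches the goal through a waypoint with a smaller deflection.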

Madhav with the multi-armed pollination robot, StickBug.

What are the main contributions of your work?

MR: The main contributions of our work are:

- A geometric heuristic model for branch manipulation that does not require branch-specific parameter tuning or physical probing.

- A motion planning strategy for branch manipulation that respects both workspace and branch constraints, using the geometric heuristic to guide RRT* and incorporating online replanning based on force feedback.

- An experimental demonstration showing that force feedback-based motion planning can protect branches from excessive force during manipulation.

- Generalization across different branch types, since the method relies primarily on branch geometry and can adapt online to compensate for model inaccuracies.

Could you talk about the experiments that you carried out to test the approach?

MR: We evaluated the proposed method through a set of branch manipulation experiments using five different starting poses, all targeting a common goal region. Each configuration was tested 10 times, resulting in a total of 50 trials. A trial was considered successful if the robot brought the grasp point to within 5 cm of the goal point. For all trials, the planning time limit was set to 400 seconds, and the allowable interaction force range was −40 N to 40 N. Across the 50 trials, 39 were successful and 11 failed, corresponding to a success rate of 78%. The average number of replanning attempts across all scenarios was 20.
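For readers who want to check the numbers, the success criterion and success rate above can be reproduced with a short Python sketch. The function names are hypothetical, and the use of Euclidean distance in metres is an assumption about how "within 5 cm" was measured.

```python
import math
from typing import Sequence


def is_successful(grasp_point: Sequence[float],
                  goal_point: Sequence[float],
                  tolerance_m: float = 0.05) -> bool:
    """True if the grasp point ends within `tolerance_m` metres of the goal.

    Assumes Euclidean distance; the interview only states "within 5 cm".
    """
    return math.dist(grasp_point, goal_point) <= tolerance_m


def success_rate(successes: int, trials: int) -> float:
    """Fraction of trials that met the success criterion."""
    return successes / trials


trials = 5 * 10                  # five starting poses, ten runs each
rate = success_rate(39, trials)  # 39 successful trials reported
print(f"{trials} trials, success rate {rate:.0%}")  # prints: 50 trials, success rate 78%
```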

In terms of force reduction, the results show a clear progression in safety. Constraint-aware planning reduced the manipulation force from above 100 N to below 60 N. Building on this, online force-aware replanning further reduced the force from about 60 N to below the desired 40 N threshold. This indicates that safety awareness through geometric heuristics, which model branches as deformable linear objects, together with force-aware online replanning, can effectively lower interaction forces during manipulation.

Overall, the experiments demonstrate that the proposed framework enables safer branch manipulation while maintaining task feasibility. By combining branch-constraint-aware planning with real-time force feedback, the robot can adapt its motion to reduce excessive force and minimize the risk of branch damage. These findings highlight the value of force-aware planning for practical robotic manipulation in agricultural environments.

Do you have plans to further extend this work?

MR: Yes, there are several directions for extending this work.

One current limitation is the need to define a safe force threshold in advance. In practice, different types of branches require different force limits for safe manipulation. A key direction for future work is to learn or estimate safe force thresholds automatically from branch geometry or visual cues.

Another extension is to improve grasp-point selection. Instead of only replanning after grasping, the system could also reason about the most suitable grasp point beforehand so that the required manipulation force is reduced from the start.

We are also interested in designing a compliant gripper with integrated force sensing that is better suited for manipulating delicate branches. In the longer term, we plan to integrate this method into a multi-arm agricultural robot, where one arm manipulates the branch and another performs pollination, pruning, or harvesting.

Overall, this work advances the development of agricultural robots that can actively manipulate branches to support tasks such as harvesting, pruning, and pollination. By exposing fruits, cut points, and hidden flowers within the canopy, this capability can help overcome key barriers to the broader adoption of robot-assisted agricultural technologies.

References

[1] Smith, Trevor, Madhav Rijal, Christopher Tatsch, R. Michael Butts, Jared Beard, R. Tyler Cook, Andy Chu, Jason Gross, and Yu Gu. Design of Stickbug: a six-armed precision pollination robot. In 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 69-75. IEEE, 2024.

[2] Rijal, Madhav, Rashik Shrestha, Trevor Smith, and Yu Gu. Force Aware Branch Manipulation To Assist Agricultural Tasks. In 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 1217-1222. IEEE, 2025.

About Madhav

Madhav Rijal is a Ph.D. candidate in Mechanical Engineering at West Virginia University working in agricultural robotics. His research combines motion planning, optimization, multi-agent collaboration and distributed decision making to develop robotic systems for precision pollination and other plant-interaction tasks. His current work focuses on branch manipulation and safe robot operation in agricultural environments.