Robohub.org

Could we experience the workings of our own brains?

One of the oft-quoted paradoxes of consciousness is that we are unable to observe or experience our own conscious minds at work; that we cannot be conscious of the workings of consciousness. I’ve always been puzzled about why this is a puzzle. After all, we don’t think it odd that word processors have no insight into their inner workings (although that’s a bad example because we might conceivably code a future self-aware word processor and arrange for it to access its inner machinery).

Perhaps a better example is this: The act of picking up a cup of hot coffee and bringing it to your lips appears, on the face of it, to be perfectly observable. No mystery at all. We can see the joints and muscles at work, and ‘feel’ the touch of the coffee cup and its weight as we begin to lift it. We can even build mathematical models of the kinematics and dynamics, and (with somewhat more difficulty) make robot arms to pick up cups of coffee. But – I contend – we are kidding ourselves if we think we know what’s going on in the complex sensory and neurological processes that appear so effortless to perform. The fact that we can observe and even feel ourselves lifting a coffee cup gives very little real insight. And the mathematical models – and robots – are not really models of the human neurological and physiological processes at all; they are models of idealised abstractions of limbs, joints and hands.

I would argue that we have no greater insight into the workings of this (apparently straightforward) physical act than we have into thinking itself. But again this is not surprising. The additional cognitive machinery needed to access or experience the inner workings of any process, whether mental or physical, would be huge and (biologically) expensive. And since it would have no apparent survival value (except perhaps for philosophers of mind), it’s to be expected that such mechanisms have not evolved. They would of course require not just extra grey matter, but sensing too. It’s interesting that there are no pain receptors within our brains – that’s why it’s perfectly possible to have brain surgery while wide awake.

But this got me thinking. Imagine that at some future time we have nanoscale sensors capable of positioning themselves throughout our brains to form a very large sensor network. If each sensor monitors the activity of key neurons, or axons, and is able to transmit its readings in real time to an external device, then we would have the data to provide ourselves with a real-time activity image of our own brains. It could be presented visually, or perhaps sonically (or via multimedia). It might be fun for a while, but this personal brain imaging technology (let’s call it iBrain) probably wouldn’t provide us with much more insight into, or experience of, our own thought processes.

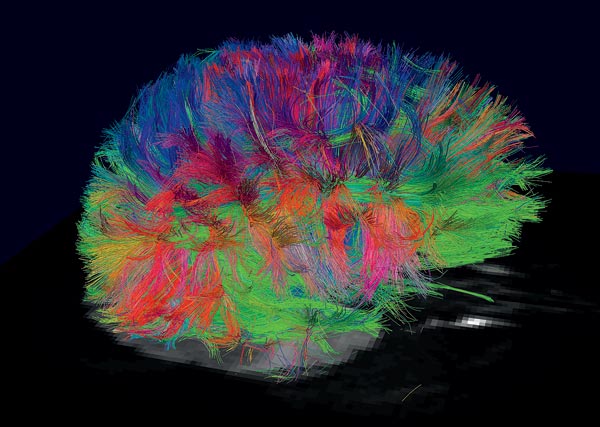

But let’s assume that by the time we have the nanotechnology for harmlessly inserting millions of brain nanosensors we will have also figured out the major architectural structures of the brain – crucially linking the neural scale to the macro scale. Actually, if we believe that the recently announced European and US human brain Grand Challenges will achieve what they are promising in terms of modelling and mapping human brain activity, then such an understanding should only be a few decades away. So now build those maps and structures into the personal iBrain, and we will be presented not with a vast and bewildering cloud of colours, as in the beautiful image above, but a simpler image with major highways and structures highlighted. Still complex of course, but then so are street maps of cities or countries. So the iBrain would allow you to zoom into certain regions and really see what’s going on while you (say) listen to Bach (the very thing I’m doing right now).

Then we really would be able to observe our own brains at work and, just perhaps, experience the connection between brain and thought.

This post originally appeared on Alan Winfield’s Web Log on Feb. 20, 2013.
