Robohub.org

NTSB Tesla crash report and new NHTSA regulations to come

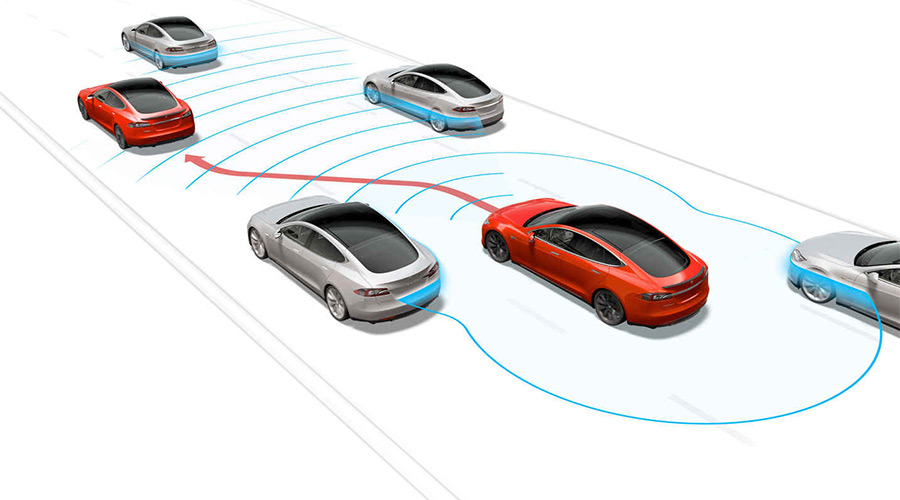

Tesla Motors autopilot (photo: Tesla)

The NTSB (National Transportation Safety Board) has released a preliminary report on the fatal Tesla crash, with the full report expected later this week. The report is much less favourable to autopilots than the earlier evaluation.

(This is a giant news day for robocars. Today NHTSA also released their new draft robocar regulations, which appear to be much simpler than the earlier 116-page document that I was very critical of last year. It’s a busy day, so I will be posting a more detailed evaluation of the new regulations, and of the proposed new robocar laws from the House, later in the week.)

The earlier NTSB report indicated that though the autopilot had its flaws, overall the system was working. That is to say, although some drivers were misusing the autopilot, the whole population of autopilot drivers, those who misused it together with those who did not, was still safer overall than drivers with no autopilot. The new report makes it clear that this does not excuse the autopilot being so easy to abuse. (By abuse, I mean ignoring the warnings and treating it like a robocar, letting it drive you without actively monitoring the road, ready to take control.)

While the report mostly faults the truck driver for turning at the wrong time, it blames Tesla for not doing a good enough job of ensuring that the driver is not abusing the autopilot. Tesla makes you touch the wheel every so often, but NTSB notes that it is possible to touch the wheel without actually looking at the road. NTSB is also concerned that the autopilot can operate in this fashion even on roads it was not designed for. They note that Tesla has improved some of these things since the accident.

This means that “touch the wheel” systems will probably not be considered acceptable in the future, and there will have to be some means of ensuring the driver is really paying attention. Some vendors have decided to put in cameras that watch the driver, or in particular the driver’s eyes, to check for attention. After the Tesla accident, I proposed a system that tests driver attention from time to time and punishes drivers who are not paying attention, which could do the job without adding new hardware.
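To make the idea of testing attention and punishing inattention concrete, here is a minimal Python sketch. It is not the system I proposed nor anything Tesla ships: the `AttentionMonitor` class, the two-second response deadline, and the three-strike lockout are all hypothetical values picked only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AttentionMonitor:
    """Hypothetical attention checker; every threshold here is an assumption."""

    response_deadline_s: float = 2.0  # assumed time allowed to answer a challenge
    max_strikes: int = 3              # assumed number of failures before lockout
    strikes: int = 0
    locked_out: bool = False

    def record_challenge(self, response_time_s: Optional[float]) -> str:
        """Record one attention challenge.

        response_time_s is the driver's reaction time in seconds,
        or None if the driver never responded.
        """
        if self.locked_out:
            return "locked_out"

        passed = (
            response_time_s is not None
            and response_time_s <= self.response_deadline_s
        )
        if passed:
            return "ok"

        # A failed challenge counts as a strike; enough strikes disable the feature.
        self.strikes += 1
        if self.strikes >= self.max_strikes:
            self.locked_out = True
            return "locked_out"
        return "warning"
```

The challenge itself could be anything the existing controls can deliver, which is the point of not needing new hardware. The sketch only covers the bookkeeping of passes, strikes and the eventual lockout.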

It also seems that autopilot cars will need to have maps of which roads they work on and which they don’t, and will need to limit features based on the type of road you’re on.
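As a rough sketch of what limiting features by road type might look like, the Python below looks up a road segment in a map and returns the features allowed there. The road categories, feature names, segment IDs and the policy table are all invented for illustration and do not describe any vendor's actual design.

```python
from enum import Enum


class RoadType(Enum):
    DIVIDED_HIGHWAY = "divided_highway"
    UNDIVIDED_HIGHWAY = "undivided_highway"
    URBAN_STREET = "urban_street"
    UNKNOWN = "unknown"


# Hypothetical policy: which driver-assist features may engage on each road type.
ALLOWED_FEATURES = {
    RoadType.DIVIDED_HIGHWAY: {"adaptive_cruise", "lane_keeping"},
    RoadType.UNDIVIDED_HIGHWAY: {"adaptive_cruise"},
    RoadType.URBAN_STREET: set(),
    RoadType.UNKNOWN: set(),
}

# Hypothetical map data: road segment ID -> road type.
ROAD_MAP = {
    "segment-001": RoadType.DIVIDED_HIGHWAY,
    "segment-002": RoadType.UNDIVIDED_HIGHWAY,
    "segment-003": RoadType.URBAN_STREET,
}


def allowed_features(segment_id, road_map=ROAD_MAP):
    """Return the features permitted on a segment; unmapped roads allow none."""
    road_type = road_map.get(segment_id, RoadType.UNKNOWN)
    return ALLOWED_FEATURES[road_type]


def can_engage(feature, segment_id, road_map=ROAD_MAP):
    """True if the named feature may be switched on for this segment."""
    return feature in allowed_features(segment_id, road_map)
```

The one design choice worth noting is the default: a road that is missing from the map is treated as unsupported, so the car refuses to engage rather than guessing.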