Robohub.org

Robot see, robot do: System learns after watching how-tos

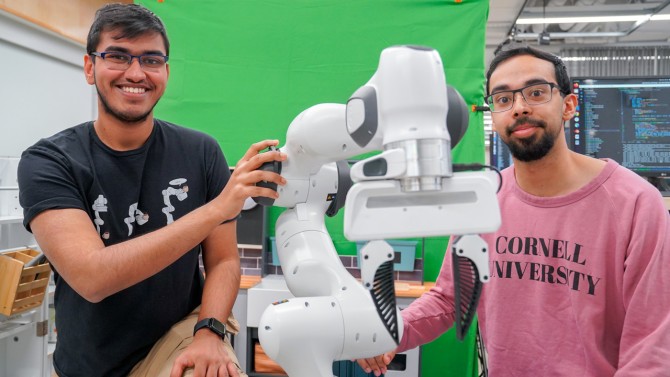

Kushal Kedia (left) and Prithwish Dan (right) are members of the development team behind RHyME, a system that allows robots to learn tasks by watching a single how-to video.

Kushal Kedia (left) and Prithwish Dan (right) are members of the development team behind RHyME, a system that allows robots to learn tasks by watching a single how-to video.

By Louis DiPietro

Cornell researchers have developed a new robotic framework powered by artificial intelligence – called RHyME (Retrieval for Hybrid Imitation under Mismatched Execution) – that allows robots to learn tasks by watching a single how-to video. RHyME could fast-track the development and deployment of robotic systems by significantly reducing the time, energy and money needed to train them, the researchers said.

“One of the annoying things about working with robots is collecting so much data on the robot doing different tasks,” said Kushal Kedia, a doctoral student in the field of computer science and lead author of a corresponding paper on RHyME. “That’s not how humans do tasks. We look at other people as inspiration.”

Kedia will present the paper, One-Shot Imitation under Mismatched Execution, in May at the Institute of Electrical and Electronics Engineers’ International Conference on Robotics and Automation, in Atlanta.

Home robot assistants are still a long way off – it is a very difficult task to train robots to deal with all the potential scenarios that they could encounter in the real world. To get robots up to speed, researchers like Kedia are training them with what amounts to how-to videos – human demonstrations of various tasks in a lab setting. The hope with this approach, a branch of machine learning called “imitation learning,” is that robots will learn a sequence of tasks faster and be able to adapt to real-world environments.

“Our work is like translating French to English – we’re translating any given task from human to robot,” said senior author Sanjiban Choudhury, assistant professor of computer science in the Cornell Ann S. Bowers College of Computing and Information Science.

This translation task still faces a broader challenge, however: Humans move too fluidly for a robot to track and mimic, and training robots with video requires gobs of it. Further, video demonstrations – of, say, picking up a napkin or stacking dinner plates – must be performed slowly and flawlessly, since any mismatch in actions between the video and the robot has historically spelled doom for robot learning, the researchers said.

“If a human moves in a way that’s any different from how a robot moves, the method immediately falls apart,” Choudhury said. “Our thinking was, ‘Can we find a principled way to deal with this mismatch between how humans and robots do tasks?’”

RHyME is the team’s answer – a scalable approach that makes robots less finicky and more adaptive. It trains a robotic system to store previous examples in its memory bank and connect the dots when performing tasks it has viewed only once by drawing on videos it has seen. For example, a RHyME-equipped robot shown a video of a human fetching a mug from the counter and placing it in a nearby sink will comb its bank of videos and draw inspiration from similar actions – like grasping a cup and lowering a utensil.

RHyME paves the way for robots to learn multiple-step sequences while significantly lowering the amount of robot data needed for training, the researchers said. They claim that RHyME requires just 30 minutes of robot data; in a lab setting, robots trained using the system achieved a more than 50% increase in task success compared to previous methods.

“This work is a departure from how robots are programmed today. The status quo of programming robots is thousands of hours of tele-operation to teach the robot how to do tasks. That’s just impossible,” Choudhury said. “With RHyME, we’re moving away from that and learning to train robots in a more scalable way.”

This research was supported by Google, OpenAI, the U.S. Office of Naval Research and the National Science Foundation.

Read the work in full

One-Shot Imitation under Mismatched Execution, Kushal Kedia, Prithwish Dan, Angela Chao, Maximus Adrian Pace, Sanjiban Choudhury.