Robohub.org

ROSCon 2015 recap and videos – part 4

ROSCon is an annual conference focused on ROS, the Robot Operating System. Every year, hundreds of ROS developers of all skill levels and backgrounds, from industry to academia, come together to teach, learn, and show off their latest projects.

Here is the next set of posts from the OSRF blog, along with videos.

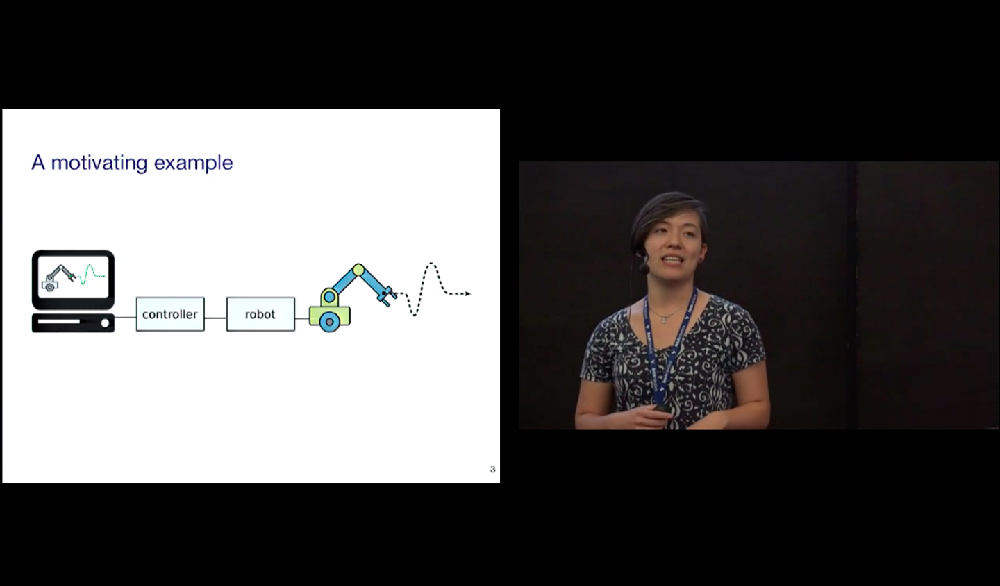

Jackie Kay (OSRF): Real-time Performance in ROS 2

Jackie Kay was upgraded from OSRF intern to full-time software engineer in 2014. Her background includes robotics education and path planning for autonomous lunar rovers. More recently, she’s been working on bringing real-time computing to ROS 2.

Real-time computing isn’t about computing at a certain speed; it’s about computing on schedule. It means that your system can return data reliably and on time, in situations where responding late is usually a bad thing, and sometimes a really bad thing. Hard real-time computing is important in safety-critical applications (like nuclear reactors, spacecraft, and autonomous vehicles), where taking too long to think about something could result in a figurative or literal crash, or both. Soft real-time computing is a bit more forgiving, in that things running behind have a cost, but the data are still usable, as with packets arriving out of order while streaming video. And in between there’s firm real-time computing, where missing deadlines is definitely bad but nothing explodes (or things only explode a little bit), like on a robotic assembly line.
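To make the "on schedule, not fast" distinction concrete, here is a minimal Python sketch. The latency samples and the 10 ms deadline are invented for illustration, and `deadline_report` is a hypothetical helper, not anything from ROS 2 or from Jackie's talk.

```python
def deadline_report(latencies_ms, deadline_ms):
    """Summarize how well a series of cycle latencies met a fixed deadline."""
    overruns = [t - deadline_ms for t in latencies_ms if t > deadline_ms]
    return {
        "mean_ms": sum(latencies_ms) / len(latencies_ms),
        "misses": len(overruns),
        "worst_overrun_ms": max(overruns, default=0.0),
    }


# Made-up measurements for two control loops that share a 10 ms deadline.
fast_but_jittery = [2.0, 2.0, 2.0, 2.0, 25.0, 2.0, 2.0, 2.0]
slow_but_steady = [8.0, 8.5, 8.0, 9.0, 8.5, 8.0, 9.0, 8.5]

jittery = deadline_report(fast_but_jittery, deadline_ms=10.0)
steady = deadline_report(slow_but_steady, deadline_ms=10.0)

for name, report in [("jittery", jittery), ("steady", steady)]:
    print(name, report)
```

The jittery loop is faster on average, yet it is the one that misses a deadline. In a hard real-time system that single miss counts as a failure; in a soft real-time system it only carries a cost.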

Making a system that’s adaptable and reliable, especially in the context of commercialization, often requires real-time computing, and this is why integrating real-time compatibility is one of the primary goals of ROS 2. Jackie’s keynote addresses many of the technical details underlying the ROS 2 real-time approach, including scheduling, memory management, node design, and communications strategies. To illustrate the improvements that ROS 2 has over ROS, Jackie shares benchmarking results of a ROS 2 demo running in real time, showing that even under stress, implementing a high-performance soft real-time system in ROS 2 looks promising.

To try real-time computing in ROS 2 for yourself, you can download an Alpha release and play around with a demo here: https://github.com/ros2/ros2/wiki/Real-Time-Programming

Dave Coleman (University of Colorado Boulder): MoveIt! Strengths, Weaknesses, and Developer Insight

Dave Coleman has worked in (almost) every robotics lab there is: Willow Garage, JSK Humanoids Lab in Tokyo, Google, CU Boulder, and (of course) OSRF. He’s also the owner of PickNik, a ROS consultancy that specializes in training robots to destructively put packages of Oreo cookies on shelves. Dave has been working on MoveIt! since before it was first released, and to kick off the second day of ROSCon, he gave a keynote to share everything he knows about motion planning in ROS.

MoveIt! is a flexible and robot-agnostic motion planning framework that integrates manipulation, 3D perception, kinematics, control, and navigation. It’s a collaboration between lots of people across many different organizations, and is the third most popular ROS package, with a fast-growing community of contributors. It’s simple to set up and use, and for beginners, a plugin lets you easily move your robot around in Rviz.

As a MoveIt! pro, Dave offers a series of pro tips on how to get the most out of your motion planner. For example, he suggests that researchers try using C++ classes individually to avoid getting buried in a bunch of layered services and actions. This makes it easier to figure out why your code doesn’t work. Dave also describes his experience in the Amazon Picking Challenge, held last year at ICRA in Seattle.

MoveIt! is great, but there’s still a lot of potential for improvement. Dave discusses some of the things that he’d like to see, including better reliability (and more communicative failures), grasping support, and, as always, more documentation and better tutorials. A recent MoveIt! community meeting resulted in a future roadmap that focuses on better humanoid kinematic support and support for other types of planners, as well as integrated visual servoing and easy access to calibration packages.

Dave ends with a reminder that progress is important, even if it’s often at odds with stability. Breaking changes are sometimes necessary in order to add valuable features to the code. As with much of ROS, MoveIt! depends on the ROS community to keep it capable and relevant. If you’re an expert in one of the components that makes MoveIt! so useful, you should definitely consider contributing back with a plug-in that others can take advantage of.

Mirko Bordignon (Fraunhofer IPA) and Shaun Edwards (SwRI): Bringing ROS to the Factory Floor

The ROS Industrial Consortium was established four years ago as a partnership between Yaskawa Motoman Robotics, Southwest Research Institute (SwRI), Willow Garage, and Fraunhofer IPA. The idea was to provide a ROS-based open-source framework for robotics applications, designed to make it easy (or at least possible) to leverage advanced ROS capabilities (like perception and planning) in industrial environments. Basically, ROS-I adds models, libraries, drivers, and packages to ROS that are specifically designed for manufacturing automation, with a focus on code quality and end user reliability.

Mirko Bordignon from Fraunhofer IPA opened the final ROSCon 2015 keynote by pointing out that ROS is still heavily focused on research and service robotics. This isn’t a bad thing, but with a little help, there’s an enormous opportunity for ROS to transform industrial robotics as well. Over the past few years, the ROS Industrial Consortium has grown into two international consortia (one in America and one in Europe), comprising over thirty members that provide financial and managerial support to the ROS-I community.

To help companies get more comfortable with the idea of using ROS in their robots, ROS-I holds frequent training sessions and other outreach events. “People out there are realizing that at least they can’t ignore ROS, and that they actually might benefit from it,” Bordignon says. And companies are benefiting from it, with ROS starting to show up in a variety of different industries in the form of factory floor deployments as well as products.

Bordignon highlights a few of the most interesting projects that the ROS-I community is working on at the moment, including a CAD to ROS workbench, getting ROS to work on PLCs, and integrating the OPC data protocol, which is common to many industrial systems.

Before going into deeper detail on ROS-I’s projects, Shaun Edwards from SwRI talks about how the fundamental idea for a ROS-I consortium goes back to one of their first demos. The demo was of a PR2 using 3D perception and intelligent path planning to pick up objects off of a table. “[Companies were] impressed by what they saw at Willow Garage, but they didn’t make the connection: that they could leverage that work,” Edwards explains. SwRI then partnered with Yaskawa to get the same software running on an industrial arm, “and this alone really sold industry on ROS being something to pay attention to,” says Edwards.

Since 2014, ROS-I has been refining a general purpose Calibration Toolbox for industrial robots. The goal is to streamline an otherwise time-consuming (and annoying) calibration process. This toolbox covers robot-to-camera calibration (with both stationary and mobile cameras), as well as camera-to-camera calibration. Over the next few months, ROS-I will be releasing templates for common calibration use cases to make it as easy as possible.

Path planning is another ongoing ROS-I project, as is ROS support for CANopen devices (to enable IoT-type networking), and integrated motion planning for mobile manipulators. ROS-I actually paid the developers of the ROS mobile manipulation stack to help with this. “Leveraging the community this way, and even paying the community, is a really good thing, and I’d like to see more of it,” Edwards says.

To close things out, Edwards briefly touches on the future of ROS-I, including the seamless fusion of 3D scanning, intelligent planning, and dynamic manipulation, which is already being sponsored by Boeing and Caterpillar. If you’d like to get involved in ROS-I, they’d love for you to join them, and even if you’re not directly interested in industrial robotics, there are still plenty of opportunities to be part of a more inclusive and collaborative ROS ecosystem.

Kai von Szadkowski (University of Bremen): Phobos — Robot Model Development on Steroids

To model a robot in rviz, you first need to create what’s called a Unified Robot Description Format (URDF) file, which is an XML-formatted text file that represents the physical configuration of your robot. Fundamentally, it’s not that hard to create a URDF file, but for complex robots, these files tend to be enormously complicated and very tedious to put together. At the University of Bremen, Kai von Szadkowski was tasked with developing a URDF model for a 60-degree-of-freedom robot called MANTIS (Multi-legged Manipulation and Locomotion System). Kai got a bit fed up with the process and developed a better way of doing it, called Phobos.

http://robotik.dfki-bremen.de/en/research/robot-systems/mantis.html
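For a sense of what a URDF file looks like, here is a minimal Python sketch that parses a tiny, made-up two-joint arm and prints its link hierarchy. The robot, its link and joint names, and the `summarize` and `print_tree` helpers are all hypothetical; only the `robot`, `link`, `joint`, `parent`, `child`, `axis`, and `limit` elements come from the URDF format itself.

```python
import xml.etree.ElementTree as ET

# A hypothetical two-joint arm, written by hand for illustration.
URDF = """<robot name="two_link_arm">
  <link name="base_link"/>
  <link name="upper_arm"/>
  <link name="forearm"/>
  <joint name="shoulder" type="revolute">
    <parent link="base_link"/>
    <child link="upper_arm"/>
    <axis xyz="0 0 1"/>
    <limit lower="-1.57" upper="1.57" effort="10" velocity="1.0"/>
  </joint>
  <joint name="elbow" type="revolute">
    <parent link="upper_arm"/>
    <child link="forearm"/>
    <axis xyz="0 0 1"/>
    <limit lower="-1.57" upper="1.57" effort="10" velocity="1.0"/>
  </joint>
</robot>
"""


def summarize(urdf_text):
    """Return (robot name, link names, parent -> [(joint, type, child)])."""
    robot = ET.fromstring(urdf_text)
    links = [link.get("name") for link in robot.findall("link")]
    children = {}
    for joint in robot.findall("joint"):
        parent = joint.find("parent").get("link")
        child = joint.find("child").get("link")
        children.setdefault(parent, []).append(
            (joint.get("name"), joint.get("type"), child)
        )
    return robot.get("name"), links, children


def print_tree(children, link, depth=0):
    """Print the kinematic tree, one link per line, indented by depth."""
    print("  " * depth + link)
    for joint_name, joint_type, child in children.get(link, []):
        print("  " * (depth + 1) + f"[{joint_name}: {joint_type}]")
        print_tree(children, child, depth + 2)


name, links, children = summarize(URDF)
# The root link is the one that never appears as a joint's child.
child_links = {c for joints in children.values() for _, _, c in joints}
root = next(link for link in links if link not in child_links)
print_tree(children, root)
```

Even this toy arm needs about fifteen lines of XML for two joints, which hints at why a 60-joint robot is tedious to describe by hand.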

Phobos is an add-on for a piece of free and open-source 3D modeling and rendering software called Blender. Using Blender, you can create armatures, which are essentially kinematic skeletons that you can use to animate a 3D character. As it turns out, there are some convenient parallels between URDF models and 3D models in Blender: the links and joints in a URDF file equate to armatures and bones in Blender, and both use similar hierarchical structures to describe their models. Phobos adds a new toolbar to Blender that makes it easy to edit these models by adding links, motors, sensors, and collision geometries. You can also leverage Blender’s Python scripting environment to automate as much of the process as you’d like. Additionally, Phobos comes with a sort of “robot dictionary” in Python that manages all of the exporting to URDF for you.

Since the native URDF format can’t handle all of the information that can be incorporated into your model in Blender, Kai proposes an extended version of URDF called SMURF (Supplemental Mostly Universal Robot Format) that adds YAML files to a URDF, supporting annotations for sensors, motors, and anything else you’d like to include.

If any of this sounds good to you, it’s easy to try it out: Blender is available for free, and Phobos can be found on GitHub.

Shaun Edwards (SwRI): The Descartes Planning Library for Semi-Constrained Cartesian Trajectories

Descartes is a path planning library that’s designed to solve the problem of planning with semi-constrained trajectories. Semi-constrained means that the degrees of freedom of the path you need to plan are fewer than the degrees of freedom that your robot has. In other words, when planning a path, there are one or more “free” axes that your robot has to work with that can be moved any which way without disrupting the path. This can open up the planning space if you can utilize them creatively, which traditional robots (especially in the industrial space) usually can’t. Leaving those free axes unused results in reduced workspaces and (most dangerous of all) increased reliance on human intuition during the planning process.
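To illustrate what a free axis buys you, here is a small Python sketch. It is not the Descartes API and makes no claim about how Descartes is implemented. It assumes a hypothetical planar arm with three joints that must trace 2D points, so the tool heading is the one free axis. The sketch samples that heading at each waypoint, solves the inverse kinematics for every sample, and picks the sequence of joint configurations with the least total joint motion. All names and link lengths are made up.

```python
import math

# Hypothetical link lengths for a planar arm with three revolute joints.
L1, L2, L3 = 1.0, 1.0, 0.5


def wrap(angle):
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def forward(q):
    """Tool-tip position (x, y) for joint angles q = (q1, q2, q3)."""
    a1, a2, a3 = q[0], q[0] + q[1], q[0] + q[1] + q[2]
    x = L1 * math.cos(a1) + L2 * math.cos(a2) + L3 * math.cos(a3)
    y = L1 * math.sin(a1) + L2 * math.sin(a2) + L3 * math.sin(a3)
    return x, y


def inverse(x, y, phi):
    """All joint solutions that put the tip at (x, y) with tool heading phi."""
    wx, wy = x - L3 * math.cos(phi), y - L3 * math.sin(phi)  # wrist position
    c2 = (wx * wx + wy * wy - L1 * L1 - L2 * L2) / (2 * L1 * L2)
    if abs(c2) > 1.0:
        return []  # wrist is out of reach for this heading
    solutions = []
    for elbow in (1.0, -1.0):  # elbow-down and elbow-up branches
        q2 = elbow * math.acos(c2)
        q1 = math.atan2(wy, wx) - math.atan2(L2 * math.sin(q2), L1 + L2 * math.cos(q2))
        solutions.append((wrap(q1), q2, wrap(phi - q1 - q2)))
    return solutions


def joint_distance(a, b):
    """Total absolute joint motion between two configurations."""
    return sum(abs(wrap(qb - qa)) for qa, qb in zip(a, b))


def plan(points, samples=36):
    """Choose one joint configuration per point, minimizing total joint motion."""
    layers = []
    for x, y in points:
        candidates = []
        for k in range(samples):  # sample the free axis (tool heading)
            candidates.extend(inverse(x, y, 2 * math.pi * k / samples))
        if not candidates:
            raise ValueError(f"point {(x, y)} is unreachable")
        layers.append(candidates)

    # Dynamic programming over the layered graph of candidates.
    cost = [0.0] * len(layers[0])
    back = []
    for prev, cur in zip(layers, layers[1:]):
        new_cost, pointers = [], []
        for q in cur:
            best = min(range(len(prev)), key=lambda j: cost[j] + joint_distance(prev[j], q))
            new_cost.append(cost[best] + joint_distance(prev[best], q))
            pointers.append(best)
        cost = new_cost
        back.append(pointers)

    # Walk the back-pointers from the cheapest final configuration.
    idx = min(range(len(cost)), key=cost.__getitem__)
    path = [layers[-1][idx]]
    for i in range(len(back) - 1, -1, -1):
        idx = back[i][idx]
        path.append(layers[i][idx])
    path.reverse()
    return path


waypoints = [(1.0 + 0.1 * i, 0.5) for i in range(5)]
path = plan(waypoints)
for point, q in zip(waypoints, path):
    print(point, [round(angle, 3) for angle in q])
```

Because the heading is free, every waypoint has many valid joint configurations, and the planner can choose among them instead of relying on a person to pick a tool orientation by hand.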

Descartes was designed to generate common sense plans, exhibiting similar characteristics to paths planned by a human. It can solve easy problems quickly, and difficult problems eventually, integrating hybrid trajectories and dynamic replanning. It’s easy to use, with a GUI that allows you to quickly set anchor points that the robot replans around, with visual confirmation of the new path. The second half of Shaun’s ROSCon talk is an in-depth explanation of Descartes’ interfaces and implementations intended for path planning fans (you know who you are).

As with many (if not most) of the projects being presented at ROSCon, Descartes is open source, and all of the development is public. If you’d like to try it out, the current stable release runs on ROS Hydro, and a tutorial is available on the ROS Wiki to help you get started.

If you enjoyed this article, you may also want to read:

- ROSCon 2015 recap and videos – Part 3

- ROSCon 2015 recap and videos – Part 2

- ROSCon 2015 recap and videos – Part 1
