Robohub.org

The infrastructure of life part 2: Transparency

Part 2: Autonomous Systems and Transparency

In my previous post I argued that a wide range of AI and Autonomous Systems (from now on I will just use the term AS as shorthand for both) should be regarded as Safety Critical. I include both soft AIs (autonomous software systems) and hard (embodied) AIs such as robots, drones and driverless cars. Many will be surprised that I include in the soft AI category apparently harmless systems such as search engines. Of course no-one is seriously inconvenienced when Amazon makes a silly book recommendation, but the picture changes when we consider very large groups of people. If a truth (such as global warming) is presented as false, whether through accidental or willful manipulation, and that falsehood is believed by a very large number of people, then serious harm to the planet (and to the humans who depend on it) could result.

I argued that the tools needed to properly assure the safety of AS barely exist, let alone the standards and regulation needed to build public trust, and that political pressure is needed to ensure our policymakers fully understand the public safety risks of unregulated AS.

In this post I will outline the case that transparency is a foundational requirement for building public trust in AS, based on the radical proposition that it should always be possible to find out why an AS made a particular decision.

Transparency is not one thing. Clearly your elderly relative doesn't require the same level of understanding of her care robot as the engineer who repairs it. Nor would you expect to understand why a medical diagnosis AI recommends a particular course of treatment as well as your doctor does. Broadly (and please understand this is a work in progress) I believe there are five distinct groups of stakeholders, and that AS must be transparent to each, in different ways and for different reasons. These stakeholders are: (1) users, (2) safety certification agencies, (3) accident investigators, (4) lawyers or expert witnesses and (5) wider society.

- For users, transparency is important because it builds trust in the system, by providing a simple way for the user to understand what the system is doing and why.

- For safety certification of an AS, transparency is important because it exposes the system’s processes for independent certification against safety standards.

- If accidents occur, AS will need to be transparent to an accident investigator; the internal processes that led to the accident need to be traceable.

- Following an accident lawyers or other expert witnesses, who may be required to give evidence, require transparency to inform their evidence. And

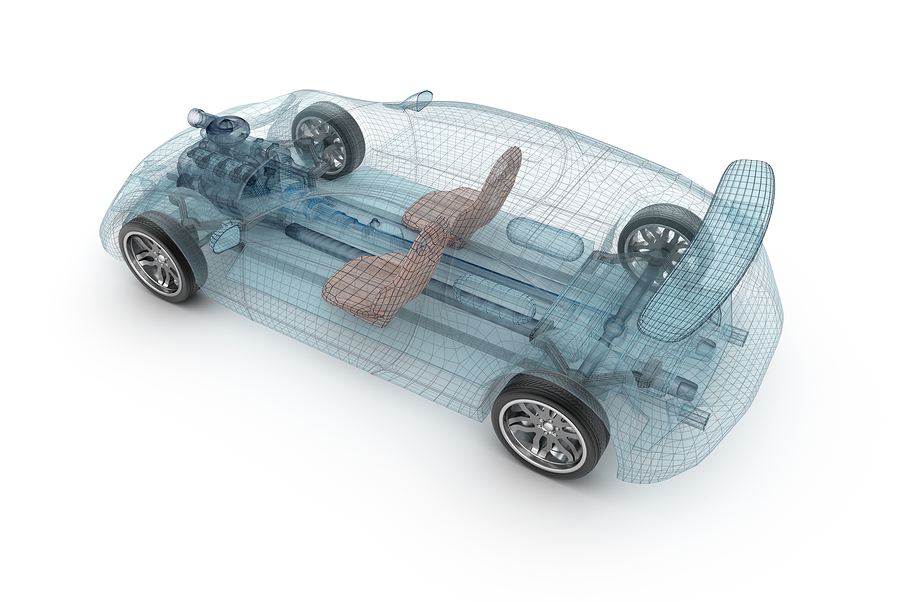

- for disruptive technologies, such as driverless cars, a certain level of transparency to wider society is needed in order to build public confidence in the technology.

Of course the way in which transparency is provided is likely to be very different for each group. If we take a care robot as an example, transparency means that the user can understand what the robot might do in different circumstances; if the robot should do anything unexpected, she should be able to ask the robot ‘why did you just do that?’ and receive an intelligible reply. Safety certification agencies will need access to technical details of how the AS works, together with verified test results. Accident investigators will need access to data logs of exactly what happened prior to and during an accident, most likely provided by something akin to an aircraft flight data recorder (and it should be illegal to operate an AS without such a system). And wider society would need accessible, documentary-style science communication to explain the AS and how it works.

Source: ROBOTT-NET
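To make the flight data recorder idea a little more concrete, here is a minimal Python sketch under assumptions of my own: `DecisionRecorder`, `record`, `why` and `dump` are hypothetical names, not part of P7001 or any existing standard or library. It keeps a bounded log of recent inputs, actions and the reasons given for them, which could serve both the user's ‘why did you just do that?’ question and an investigator's need to see what happened before an incident.

```python
from collections import deque
from dataclasses import dataclass
from typing import List, Optional
import time


@dataclass
class LogEntry:
    """One timestamped record of what the system sensed, did, and why."""
    timestamp: float
    inputs: dict
    action: str
    reason: str


class DecisionRecorder:
    """Hypothetical 'flight data recorder' for an autonomous system.

    Only the most recent `capacity` entries are kept (a ring buffer), so
    memory use stays bounded while the lead-up to an incident is retained.
    """

    def __init__(self, capacity: int = 1000):
        # deque with maxlen silently discards the oldest entry when full
        self._entries = deque(maxlen=capacity)

    def record(self, inputs: dict, action: str, reason: str,
               timestamp: Optional[float] = None) -> None:
        """Log a decision; the reason string is assumed to come from the AS itself."""
        ts = time.time() if timestamp is None else timestamp
        self._entries.append(LogEntry(ts, dict(inputs), action, reason))

    def why(self) -> str:
        """Give the user an intelligible answer about the most recent action."""
        if not self._entries:
            return "I have not done anything yet."
        last = self._entries[-1]
        return f"I chose to {last.action} because {last.reason}."

    def dump(self, since: float) -> List[LogEntry]:
        """Return every retained entry at or after `since`, e.g. for an investigator."""
        return [e for e in self._entries if e.timestamp >= since]


if __name__ == "__main__":
    recorder = DecisionRecorder(capacity=3)
    recorder.record({"person_detected": True}, "stop",
                    "a person was in my path", timestamp=1.0)
    recorder.record({"person_detected": False}, "resume moving",
                    "my path was clear again", timestamp=2.0)
    print(recorder.why())
    print(len(recorder.dump(since=0.0)))
```

The bounded buffer loosely mirrors the way aircraft recorders overwrite their oldest data; a real recorder for an AS would also need tamper resistance and crash survivability, which this sketch does not attempt.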

In IEEE Standards Association project P7001, we aim to develop a standard that sets out measurable, testable levels of transparency in each of these categories (and perhaps new categories yet to be determined), so that Autonomous Systems can be objectively assessed and levels of compliance determined. We also aim for P7001 to articulate a range of transparency levels, from the minimum acceptable up to the highest achievable. The standard will provide designers of AS with a toolkit for self-assessing transparency, and recommendations for how to address shortcomings or transparency hazards.

Of course transparency on its own is not enough. Public trust in technology, as in government, requires both transparency and accountability. Transparency is needed so that we can understand who is responsible for the way Autonomous Systems work and – equally importantly – don’t work.

Thanks: I’m very grateful to colleagues in the IEEE global initiative on ethical considerations in Autonomous Systems for supporting P7001, especially John Havens and Kay Firth-Butterfield. I’m equally grateful to colleagues at the Dagstuhl seminar on Engineering Moral Machines, especially Michael Fisher, Marija Slavkovik and Christian List, for discussions on transparency.

More from Alan Winfield:
