Robohub.org

The pedestrian experiment

Followers of this blog will know that I have been working for some years on simulation-based internal models – demonstrating their potential for ethical robots, safer robots and imitating robots. But pretty much all of our experiments so far have involved only one robot with a simulation-based internal model while the other robots it interacts with have no internal model at all.

But some time ago we wondered what would happen if two robots, each with a simulation-based internal model, interacted with each other. Imagine two such robots approaching each other in the same way that two pedestrians approach each other on the sidewalk. Is it possible that these ‘pedestrian’ robots might, from time to time, engage in the kind of ‘dance’ that human pedestrians do when one steps to their left and the other to their right only to compound the problem of avoiding a collision with a stranger? The answer, it turns out, is yes!

The idea was taken up by Mathias Schmerling at the Humboldt University of Berlin, adapting the code developed by Christian Blum for the Corridor experiment. Chen Yang, one of my master’s students, has now updated Mathias’ code and has produced some very nice new results.

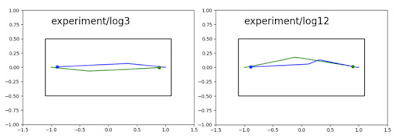

Most of the time the pedestrian robots pass each other without fuss, but in somewhere between 1 in 10 and 1 in 5 trials we do indeed see an interesting dance. Here are a couple of examples of typical trials, in which the robots pass each other normally, showing the robots’ trajectories. In each trial blue starts from the left and green from the right. Note that there is an element of randomness in the initial directions of each robot (which almost certainly explains the relative occurrence of normal and dance behaviours).

And here is a gif animation showing what’s going on in a normal trial. The faint straight lines from each robot show the target directions for each next possible action modelled in each robot’s simulation-based internal model (consequence engine); the various dotted lines show the predicted paths (and possible collisions) and the solid blue and green lines show which next action is actually selected following the internal modelling.

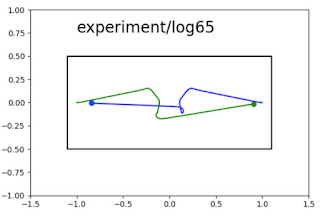
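To make the idea of a consequence engine a little more concrete, here is a minimal Python sketch of the loop described above: for each candidate next action, predict where both robots would end up, check for a predicted collision, and pick the action with the best outcome. This is not the code used in these experiments. The function names, the straight-line prediction, the assumption that the other robot simply keeps its current heading, and the scoring rule (final distance to goal plus a fixed collision penalty) are all illustrative assumptions of mine.

```python
import math


def predict_path(pos, heading, speed, steps):
    """Straight-line rollout: predicted positions after 1..steps time steps."""
    x, y = pos
    return [(x + speed * t * math.cos(heading),
             y + speed * t * math.sin(heading)) for t in range(1, steps + 1)]


def select_action(own_pos, goal, other_pos, other_heading,
                  candidate_headings, speed=0.5, steps=8, safe_dist=0.5):
    """Return the candidate heading with the best predicted outcome.

    Assumptions (hypothetical, not taken from the experiments): the other
    robot keeps its current heading, both robots move at the same speed,
    and an action is scored by final distance to the goal plus a large
    penalty if the two predicted paths come closer than safe_dist.
    """
    other_path = predict_path(other_pos, other_heading, speed, steps)

    def score(heading):
        own_path = predict_path(own_pos, heading, speed, steps)
        # Closest approach between the two robots at matching time steps.
        closest = min(math.dist(a, b) for a, b in zip(own_path, other_path))
        penalty = 1000.0 if closest < safe_dist else 0.0
        return math.dist(own_path[-1], goal) + penalty

    return min(candidate_headings, key=score)


CANDIDATES = [-0.5, 0.0, 0.5]  # candidate headings in radians (illustrative)

# Other robot approaching head-on along the same line.
head_on = select_action((0.0, 0.0), (10.0, 0.0), (4.0, 0.0), math.pi, CANDIDATES)

# Other robot far away, so nothing is in the way.
clear = select_action((0.0, 0.0), (10.0, 0.0), (100.0, 50.0), math.pi, CANDIDATES)
```

With the other robot approaching head-on, going straight is predicted to collide, so the sketch picks one of the two swerves; with the way clear it goes straight. In this toy setup the two swerves score essentially the same, so the choice between them is arbitrary. The sketch says nothing about how such ties play out in the real experiments.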

Here is a beautiful example of a ‘dance’, again showing the robot trajectories. Note that the impasse resolves itself after a while. We’re still trying to figure out exactly what mechanism enables this resolution.

And here is the gif animation of the same trial:

Notice that the impasse is not resolved until each robot’s fifth turn.

Is this the first time that pedestrians passing each other, and in particular the occasional dance that ensues, have been computationally modelled?

All of the results above were obtained in simulation (yes, there really are simulations within a simulation going on here), but within the past week Chen Yang has got this experiment working with real e-puck robots. Videos will follow shortly.

Acknowledgements.

I am indebted to the brilliant experimental work of first Christian Blum (supported by Wenguo Liu), then Mathias Schmerling who adapted Christian’s code for this experiment, and now Chen Yang who has developed the code further and obtained these results.