Robohub.org

The Year of CoCoRo Video #37/52: Combined scenario two

The EU-funded Collective Cognitive Robotics (CoCoRo) project has built a swarm of 41 autonomous underwater vehicles (AUVs) that show collective cognition. Throughout 2015 – The Year of CoCoRo – we’ll be uploading a new weekly video detailing the latest stage in its development. This week we’ve once again uploaded two videos. The first is a computer animation of our “combined scenario number two” and the second shows several runs of this scenario.

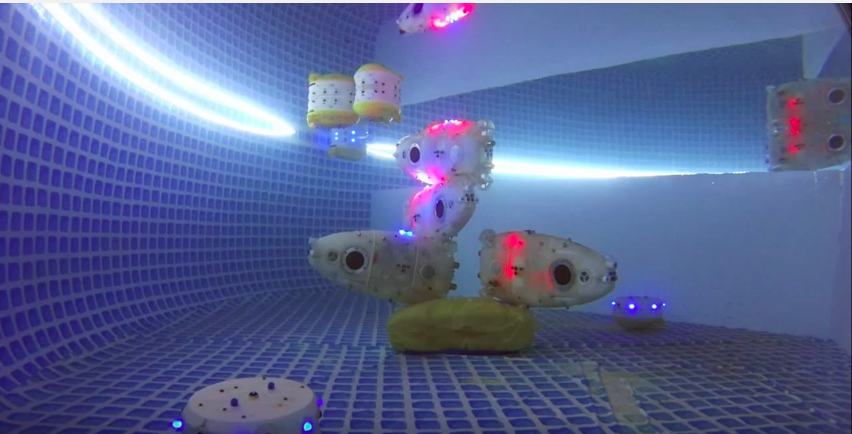

In a fragmented habitat, only one compartment holds the magnetic search target. In every compartment a swarm of Jeff robots searches the ground. Those that find the target inform the Lily robots, which move randomly and carry the information to other compartments. When Jeff robots that did not find the target learn that Jeff robots in a different compartment did, they rise to the surface, perform a random walk and then sink back down into a new compartment. Over time, the swarm converges on the place where the target was found. Upward signalling by Jeff and Lily robots also attracts the surface station to the location above the search target.
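The convergence described above can be illustrated with a toy simulation. This is only a minimal sketch under stated assumptions, not the CoCoRo control code: the function name `simulate`, the parameter `p_inform` (a per-step chance that wandering Lily robots bring the news to a given Jeff robot) and the uniform choice of the next compartment are all hypothetical simplifications, and the surface station is left out.

```python
import random


def simulate(num_compartments=4, target=2, p_inform=0.3, max_steps=1000, seed=0):
    """Toy model of Jeff robots converging on the target compartment.

    All names and parameters are illustrative assumptions, not project code.
    """
    rng = random.Random(seed)
    # Start with one Jeff robot on the ground of each compartment.
    jeff_positions = list(range(num_compartments))

    for step in range(1, max_steps + 1):
        for i, compartment in enumerate(jeff_positions):
            if compartment == target:
                continue  # this Jeff has found the target and stays put
            # Lily robots move randomly; model them as a fixed chance per
            # step that the news reaches this Jeff robot.
            if rng.random() < p_inform:
                # An informed Jeff rises, random-walks at the surface and
                # sinks into a different compartment (assumed uniform choice).
                others = [c for c in range(num_compartments) if c != compartment]
                jeff_positions[i] = rng.choice(others)
        if all(c == target for c in jeff_positions):
            return step, jeff_positions  # whole swarm has converged

    return None, jeff_positions  # no convergence within max_steps
```

Calling `simulate()` returns the number of steps until every Jeff robot sits in the target compartment, together with the final positions. Setting `p_inform=0` models a swarm with no information carriers: nobody relocates, so the robots never converge.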

The second video shows several runs of “combined scenario number two.” Jeff robots (on the ground), Lily robots (information carriers at all depths) and a simple surface station (we used a special Lily robot as a surrogate) cooperate to identify the compartment containing the magnetic search target. The robots run the same algorithms, or behaviors, as in the computer animation. Initially, each of the four compartments holds one Jeff robot. At the end of the run, three of these robots are located in the compartment with the target, together with a number of Lily robots and the surrogate of the surface station. The remaining Jeff robot could not reach the target compartment for mechanical reasons, although it tried several times, as indicated by the green LED signals it shows from time to time. This is a good example of how things work in swarm robotics: there are always individual robots that don’t perform well, but the collective as a whole stays functional.

tags: AUV, c-Research-Innovation, CoCoRo, EU, EU robotics industry news, UAV, underwater video