Robohub.org

Unity between human and social machines: What if we humans were anthrobots?

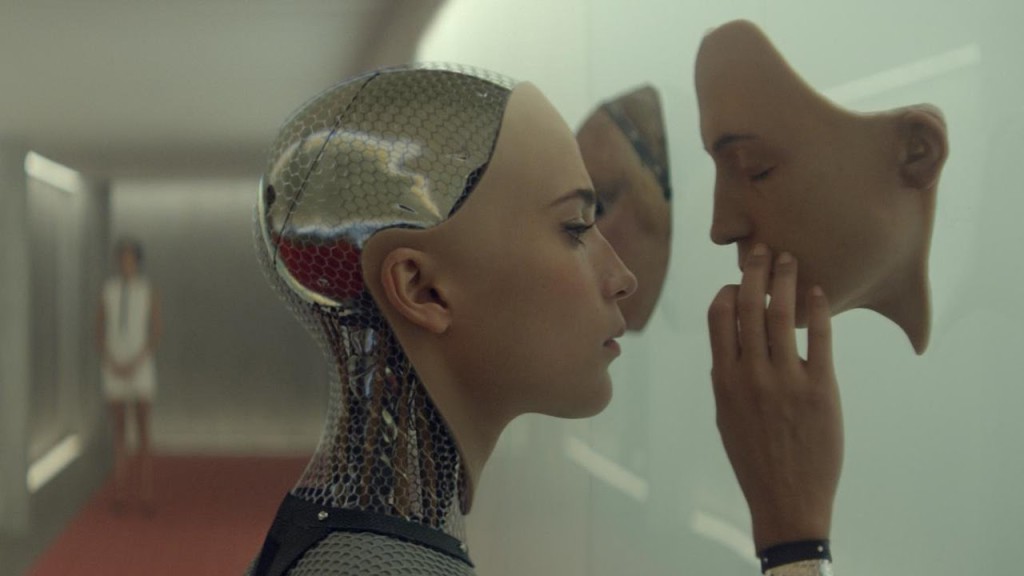

Ex Machina. Source: Youtube/Universal Pictures

Writer and philosopher Luis de Miranda studies the cultural history of digital devices and automata; his latest essay (in French) is L’Art d’être libres au temps des automates (The Art of Being Free in a Time of Automata). At the University of Edinburgh, he recently created the Anthrobotics Cluster, a platform for cross-disciplinary researchers and collaborators to discuss the relationship between humans, robots, and intelligent systems. In this interview, he speaks to Robohub about anthrobotics and why he believes it’s essential to study the relationship between humans and robots.

What is the ‘Anthrobotics Cluster’? How did it come about?

I created the Anthrobotics Cluster at the University of Edinburgh this year and convinced a few researchers from several disciplines – robotics, computer science, the social sciences and the humanities – to join it. It’s meant to be a think tank on the social spread of robotics, and also on how automation is part of the definition of what humans have always been. I started to think about anthrobotics in 2015, as I was writing the first chapters of my PhD on esprit de corps. I realised, as Lewis Mumford had already suggested in The Myth of the Machine, that human institutions are a form of collective robot. Division of labour, protocols, rituals and automation are part of the conceptual DNA of the collective ‘humachine.’ We’re social robots to a certain extent. But I see anthrobotics as a blank slate for new research. It’s a concept that still needs to be defined, explored, investigated. I’d like to think of it as a ‘What if?’ as defined by Vaihinger in The Philosophy of the ‘As If’. What if we humans were anthrobots?

https://www.youtube.com/watch?v=b0SMl8vOW18

Could you define esprit de corps? How does your research in Anthrobotics fit into this?

Esprit de corps was originally a French term that was later adopted into English. It is widely used in today’s global corporate discourse and also in politics. The phrase describes the strong unity and cohesion of a group. My PhD is the first genealogy of the term, from its birth in military discourse at the end of the seventeenth century, with the French Musketeers, until today, when the English-language media speak of esprit de corps on a daily basis. If you start thinking of human societies as collective robots, it might appear to be a metaphor, but I think it’s more than a metaphor. We must never forget that the etymology of ‘robot’ is forced labour, and forced labour is what humans have been about historically (and what they wish to free themselves from). This might change in the future, and – for example – there’s currently a great deal of talk around the idea of automation and universal basic income.

What challenges do you foresee to ensure that technological innovations go hand in hand with societal needs and expectations?

Engineering and philosophy should go hand-in-hand. Whatever we construct, it’s done according to a web of beliefs, a set of values that can be either conscious or unconscious. For example, if you think individuals are free-willing entities who always tend to maximize their personal interest, you won’t design the same multi-agent system as you would if you realised that we’re much more determined social machines who belong to more or less visible groups that shape our choices. Bourdieu has shown this very well in Distinction. Today, Big Data also clearly demonstrates our predictable psychology. I’m not saying that we’re completely determined, but freedom is certainly much more difficult than we think. I published a book about this matter, in French: L’Art d’être libres au temps des automates (The Art of Being Free in a Time of Automata). If the 20th century was the century of globalisation, I believe the 21st century will be the century of robots and social machines. This doesn’t mean that they’re totally new; it only means our anthrobotic becoming is now manifest and conscious. My proposal and hypothesis is to consider the relationship between humans and robots as a unity, perhaps a form of symbiosis. We must look at history to avoid falling into irrational fears or an uninformed fascination for the new. Anthrobotics has to do with political science, and not only with technology.

How did your book ‘L’art d’être libres au temps des automates’ (The Art of Being Free in a Time of Automata) inspire your interest in robotics and society, and relate to your current work?

My book started as a cultural and philosophical history (I call this histosophy) of computers. In French, a computer is called ordinateur, named and administrated by a wide community. An ordinator is also the one that orders. Historian Adolf Gasser explained that life in a community, any community – it could be a State – is only possible within an ordinating or ordering principle. The two fundamental political ordering principles are subordination and coordination. I understood while researching in the MIT archives and writing my essay that humans have a dual character: they’re both ordinators and creators. Computers only reflect our human Cartesian ordinating work. And as for creation, I think humans are more editors of the cosmic flow of possibilities than pure creators (this is what I called the Creal hypothesis), but they still possess the existentialist – or crealist – freedom to coordinate in order to let new protocols, new rules emerge and be adopted.

You’re working with both a roboticist and a computer scientist. As a philosopher, how do these two other disciplines complement your research?

The risk of pure philosophy is to fall into excessive metaphysics with no pragmatic link to the real world. Working with Ram Ramamoorthy, an executive member of the Edinburgh Centre for Robotics, and Michael Rovatsos, director of the Centre for Intelligent Systems and their Applications, prevents me – at least it should – from being self-indulgent and playing with words without any form of concrete perspective, examples and experience. In the future, anthrobotics should be able to change the way we design automated systems.

You have an International Workshop planned for 2017. Can you tell us a little more about that?

We’re still working on that, but we plan to host the workshop around the dates of the popular European Robotics Forum (ERF 2017), which is due to take place in Edinburgh in March. We are partnering with the Human Centred Computing Research Group at the Oxford Department of Computer Science. The general theme could be anthrobotics as a unity, and social automata as anthrobots. According to roboticist Rodney Brooks, the distinction between us and robots is going to disappear over the next decades (Robot: The Future of Flesh and Machines, 2002). Are we theoretically and socially prepared for such a union? The ongoing spectacular development and deployment of robotics, AI, and genetic engineering is inducing pervasive and radical changes in our lives and societal systems. Ram, Michael and I – as well as a growing community of researchers around the world who are currently connecting with our Anthrobotics Cluster – wish to explore anthrobotics without an ideological a priori, but with a simple methodological prism: from the perspective of unity, where human and social machines are conceived as one rather than opposed. Could it be that this anthrobotic unity is in fact older than we think, as suggested by, among others, Lewis Mumford (The Myth of the Machine, 1967/1970)? How can an anthrobotic perspective impact contemporary design and reflections? How can we define anthrobotics in a more precise manner? How can engineers design automata while being more conscious of the presupposed values of their protocols? Can we move beyond the ethical discourse towards a more holistic and politically creative form of data and task processing?

What’s next for you? Can you leave us with your thoughts on how you see anthrobotics?

Ram, Michael and I are writing an article on anthrobotics that we’ll present at the Aarhus International Conference on social robotics in October. The working title is: We, The Anthrobot: Learning From Human Forms of Interaction and Esprit de Corps to Develop More Diverse Social Robotics. Here’s a copy of the abstract:

This paper considers the human-robot system as a coordinated Janus-faced reality. We propose to call this hybrid system the anthrobot, and the related approach anthrobotics. The anthrobotic perspective not only belongs to the current transdisciplinary research on the social becoming of robotics, but also contemplates a practical horizon in the future conception and implementation of socially healthy automation.

Methodologically, anthrobotics is the choice to consider the human-machine unit rather than the separated realities (humans on one side, machines on the other). The implication of this methodological stance is that we should conceive of the process of understanding and designing anthrobotic systems as fundamentally grounded in their hybrid character, and view their intentionality and intelligence in a way that encompasses human activity and its augmentation or mediation through technological means.

Anthrobots that pre-date the computer age, such as organisations, institutions and nation states, not only provide blueprints on which digitally (or, in the narrower sense of the term, robotically) enhanced collective socio-technical systems can be modelled. They also allow us to develop notions of “healthy” anthrobots by considering how their existing non-technological counterparts manage to provide a range of social protocols through which individuals and groups can assume and embody different forms of agency and so achieve their goals and aspirations. This paper will examine four types of human collective interaction that help lay the conceptual and methodological groundwork to determine if we can learn from human collectives to design healthier anthrobots.

Many systems we find around us consist of continually evolving combinations of humans and robots, where the second term is to be interpreted broadly. Licklider famously called this close coupling a man-computer symbiosis, using a biological metaphor to describe this ‘living together in intimate association, or even close union’. Today this distinction has to be redefined. Different design methodologies are brought to bear in devising our anthrobotic becoming, ranging from game-theoretic and organisational behaviour analysis to ‘crowdsourcing’ and associated considerations on how networks behave or should behave. What makes this challenging is that the behaviour of the anthrobotic system as a whole is hard to predict and design, due to the subtlety of human preferences and personalities, and due to the coupled dynamics between humans and automata.

Looking beyond these phenomena, our aim is to identify key mechanisms regarding how people, when interacting with other people, efficiently manage to solve complex problems. For instance, why doesn’t the Microsoft chatterbot issue happen quite so easily among most people? Why do human societies manage to recover from market failures? What is it about social interaction that makes us smarter in terms of interaction than the technology-centric, novel anthrobotic systems we are creating? Can the human-machine and machine-human interactions that underpin current and future digital anthrobots be informed by the intelligence exhibited by human-human interaction systems, to which they are currently much inferior?

Moreover, can they provide social arenas that empower humans and allow themselves to be shaped by humans in ways appropriate to the respective social context, and can they escape their current diversity-averseness, which stems from over-regularisation and unilateral control, in the way that advanced democratic human societies manage to? The reason why human-to-human relations are not chaotic by default is not that we are rational beings calculating our individual interest at every minor interaction with another human. This would take too much brain energy and prove extremely difficult, since it would take infinite clairvoyance to anticipate the outcomes of the hundreds of interactions we have with other humans every single day. Again, most of the time we function on autopilot, which means that we apply the habitual rules and codes of a group, although we might not always be conscious of which social group we belong to.

This phenomenon has been known since the eighteenth century under the term esprit de corps, a French phrase that was immediately adopted talis qualis in English. By examining the different uses of ‘esprit de corps’ in the last three centuries, Luis de Miranda has found that at least four types of collective bond are typical of our modernity.

The first type of collective bond can be called a conformative esprit de corps. Conformative collectives exhibit strong conformity and an enclosed or enclaved form of solidarity. The main value of conformative esprit de corps is duty, and its mode of control is discipline as coercion. This is typical of religiously-grounded communities for example. The second type of modern collective bond can be called universal esprit de corps, typical of the Nation-State and modern democracies. Universal esprit de corps leans towards strong conformity but an inclusive and open solidarity (anyone can join the group in theory). Its main mode of relation is service, and its mode of control is co-dependence, what Durkheim called ‘organic solidarity’. The third type of collective bond can be called autonomist esprit de corps. It exhibits a rather enclosed form of solidarity and its drive is autonomy rather than conformity. Its main values are craft, knowledge, art, and its mode of control is discipline as practice. It is typical of communities of interest, clubs for example. The fourth kind of collective bond can be called creative esprit de corps, with a drive towards open interactions and autonomy. Its main relational type is élan and enthusiasm, as seen today for example in start-ups. Its mode of control is enjoyment and expectation.

Of course, as with all forms of categorisation, these types are better read as tendencies than as a rigid structure. Each of these groups implies individual interactions that are a different form of human intelligence. Individual interest does not play the main role in these interactions, or, if it does, this concerns mainly the creative esprit de corps groups. People interact more or less well in life according to more or less esprit de corps.

When designing an anthrobotic system, we propose to ask ourselves if it is meant to serve a universalist, creative, conformative, or autonomist group. In universalist groups, standardised systems with few variations work well, because they should construct sameness rather than difference. A simple example is translation bots. English is a universal language, and if we are to favour global understanding and communication, there should not be different forms of English when it is used as a lingua franca. In creative groups, the emphasis is on the agency of each individual and a form of mechanical solidarity where each one can contribute equally to different tasks, according to a horizontal hierarchy. Open source, flexible systems, and co-design will be favoured. In groups with autonomy and enclosed solidarity, the main social interaction is through craft, understood broadly. This is a community of practice, for example a citizen-managed town, a badminton club, or a temporary autonomous zone (TAZ). In groups with strong conformity and enclosed solidarity, where the main social drive is duty, systems need to be robust, with minimal evolution capacities and a strong hierarchy. Religious communities, for example, tend to die if they evolve.
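To make the four-way classification concrete, here is a minimal illustrative sketch in Python. It is not taken from the paper: the names `Drive`, `Solidarity`, `GroupType`, `GROUP_TYPES` and `classify` are hypothetical, and the design hints are short paraphrases of the abstract. It simply treats the two tendencies described above (conformity versus autonomy, enclosed versus open solidarity) as a lookup key.

```python
from dataclasses import dataclass
from enum import Enum


class Drive(Enum):
    """Hypothetical label for the group's main drive."""
    CONFORMITY = "conformity"
    AUTONOMY = "autonomy"


class Solidarity(Enum):
    """Hypothetical label for how open the group is to newcomers."""
    ENCLOSED = "enclosed"
    OPEN = "open"


@dataclass(frozen=True)
class GroupType:
    name: str
    design_hint: str  # paraphrased from the abstract, not a formal rule


# Lookup keyed by the two tendencies; a sketch, since the abstract
# stresses that these are tendencies rather than rigid categories.
GROUP_TYPES = {
    (Drive.CONFORMITY, Solidarity.ENCLOSED): GroupType(
        "conformative", "robust systems, minimal evolution, strong hierarchy"),
    (Drive.CONFORMITY, Solidarity.OPEN): GroupType(
        "universal", "standardised systems with few variations"),
    (Drive.AUTONOMY, Solidarity.ENCLOSED): GroupType(
        "autonomist", "support for craft and a community of practice"),
    (Drive.AUTONOMY, Solidarity.OPEN): GroupType(
        "creative", "open source, flexible systems, co-design"),
}


def classify(drive: Drive, solidarity: Solidarity) -> GroupType:
    """Return the esprit de corps type for a pair of tendencies."""
    return GROUP_TYPES[(drive, solidarity)]


if __name__ == "__main__":
    group = classify(Drive.AUTONOMY, Solidarity.OPEN)
    print(group.name, "->", group.design_hint)
```

A real design process would presumably weigh these tendencies by degree rather than pick a single cell, so the lookup should be read as a reading aid and not as a proposed method.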

We have chosen to look at the human-machine relation as an anthrobotic unit. By this we do not mean an isolated cyborg: our perspective cannot be a methodological individualism, because on the one hand, the machines and robots we refer to are or will be produced industrially, and on the other hand, individual humans are to a large extent the product of collective belongings before they can singularize themselves. The anthrobotic unit is the conjunction of two collectives, robotic and human. Our hypothesis is therefore that if we look at how humans form collectives in different ways, we will be able to develop intelligent systems that are also more diverse.
