Robohub.org

We need to discuss what jobs robots should do, before the decision is made for us

By Thusha Rajendran (Professor of Psychology, The National Robotarium, Heriot-Watt University)

The social separation imposed by the pandemic led us to rely on technology to an extent we might never have imagined – from Teams and Zoom to online banking and vaccine status apps.

Now, society faces an increasing number of decisions about our relationship with technology. For example, do we want our workforce needs fulfilled by automation, migrant workers, or an increased birth rate?

In the coming years, we will also need to balance technological innovation with people’s wellbeing – both in terms of the work they do and the social support they receive.

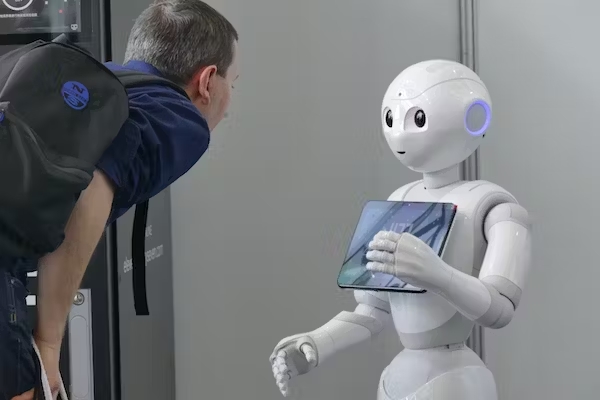

And there is the question of trust. When humans should trust robots, and vice versa, is a question our Trust Node team is researching as part of the UKRI Trustworthy Autonomous Systems hub. We want to better understand human-robot interactions – based on an individual’s propensity to trust others, the type of robot, and the nature of the task. This, and projects like it, could ultimately help inform robot design.

This is an important time to discuss what roles we want robots and AI to take in our collective future – before decisions are taken that may prove hard to reverse. One way to frame this dialogue is to think about the various roles robots can fulfill.

Robots as our servants

The word “robot” was first used by the Czech writer Karel Čapek in his 1920 sci-fi play Rossum’s Universal Robots. It comes from the Czech word “robota”, meaning drudgery or donkey work. This etymology suggests robots exist to do work that humans would rather not. And there should be no obvious controversy, for example, in tasking robots to maintain nuclear power plants or repair offshore wind farms.

The more human a robot looks, the more we trust it. Antonello Marangi/Shutterstock

However, some service tasks assigned to robots are more controversial, because they could be seen as taking jobs from humans.

For example, studies show that people who have lost movement in their upper limbs could benefit from robot-assisted dressing. But this could be seen as automating tasks that nurses currently perform. Equally, it could free up time for nurses and care workers, whose sectors are currently very short-staffed, to focus on other tasks that require more sophisticated human input.

Authority figures

The dystopian 1987 film RoboCop imagined the future of law enforcement as autonomous, privatised, and delegated to cyborgs or robots.

Today, some elements of this vision are not so far away: the San Francisco Police Department has considered deploying robots – albeit under direct human control – to kill dangerous suspects.

This US military robot is fitted with a machine gun to turn it into a remote weapons platform. US Army

But having robots as authority figures needs careful consideration, as research has shown that humans can place excessive trust in them.

In one experiment, a “fire robot” was assigned to evacuate people from a building during a simulated blaze. All 26 participants dutifully followed the robot, even though half had previously seen the robot perform poorly in a navigation task.

Robots as our companions

It might be difficult to imagine that a human-robot attachment would have the same quality as that between humans or with a pet. However, increasing levels of loneliness in society might mean that for some people, having a non-human companion is better than nothing.

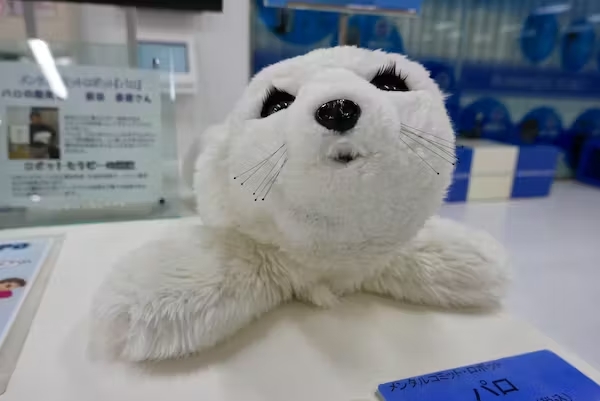

The Paro Robot is one of the most commercially successful companion robots to date – and is designed to look like a baby harp seal. Yet research suggests that the more human a robot looks, the more we trust it.

The Paro companion robot is designed to look like a baby seal. Angela Ostafichuk / Shutterstock

A study has also shown that different areas of the brain are activated depending on whether a person is interacting with another human or with a robot. This suggests our brains may recognise interactions with a robot differently from human ones.

Creating useful robot companions involves a complex interplay of computer science, engineering and psychology. A robot pet might be ideal for someone who is not physically able to take a dog for its exercise. It might also be able to detect falls and remind someone to take their medication.

How we tackle social isolation, however, raises questions for us as a society. Some might regard efforts to “solve” loneliness with technology as the wrong solution for this pervasive problem.

What can robotics and AI teach us?

Music is a source of interesting observations about the differences between human and robotic talents. Making errors, as humans do all the time but robots might not, appears to be a vital component of creativity.

A study by Adrian Hazzard and colleagues pitted professional pianists against an autonomous Disklavier (an automated piano with keys that move as if played by an invisible pianist). The researchers discovered that, eventually, the pianists made mistakes. But they did so in ways that were interesting to humans listening to the performance.

This concept of “aesthetic failure” can also be applied to how we live our lives. It offers a powerful counter-narrative to the idealistic and perfectionist messages we constantly receive through television and social media – on everything from physical appearance to career and relationships.

As a species, we are approaching many crossroads, including how to respond to climate change, gene editing, and the role of robotics and AI. However, these dilemmas are also opportunities. AI and robotics can mirror our less-appealing characteristics, such as gender and racial biases. But they can also free us from drudgery and highlight unique and appealing qualities, such as our creativity.

We are in the driving seat when it comes to our relationship with robots – nothing is set in stone, yet. But to make educated, informed choices, we need to learn to ask the right questions, starting with: what do we actually want robots to do for us?

Thusha Rajendran receives funding from the UKRI and EU. He would like to acknowledge evolutionary anthropologist Anna Machin’s contribution to this article through her book Why We Love, personal communications and draft review.

This article is republished from The Conversation under a Creative Commons license.