Robohub.org

What’s new in robotics this week? Australia’s $310K (AUD) firefighting bot

Plus: empathy for humanoids; learning to walk using neural networks; X Prize for marine robots; turtle-inspired robot … and much more.

Australia unveils firefighting robot that is way better than humans (IBT)

A remote-controlled firefighting robot that can put out fires, clear smoke, and sweep away debris has been unveiled in Australia.

Known as the TAF-20 (the ‘TAF’ stands for ‘Turbo Aided Firefighting’), the robot can be operated from up to 500 meters away, which enables firefighters to tackle blazes from a safe distance.

The firefighting robot is equipped with a bulldozer blade which it can [use to] clear obstacles such as cars in its way or sweep debris after a fire explosion. With its high-powered fans, it can clear smoke from a building. It can even spray water mist or foam from a distance of 60 m (197 ft) and blast water from 90 m away.

As an Australian government website puts it:

Minister for Emergency Services David Elliott said the robot put FRNSW firefighters ahead of the game when managing hazardous fires and other emergencies where they could not safely approach flames or for when there was danger of an explosion.

The NSW Government has invested $310,000 (AUD) into this technology.

Empathy for Humanoid Robots is Ideal UI for Elementary Schools (Inverse)

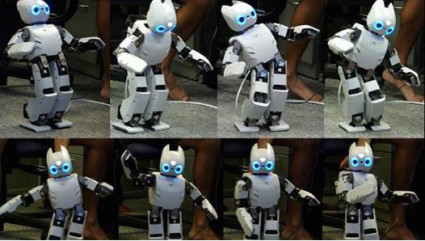

One way in which humanoid robots can be made to seem friendlier to humans is by having the bots adopt friendly body language.

This is the focus of an ongoing research project at Delft University of Technology, where experts have been testing the principle on graduate students.

The researchers had graduate students attend lectures given by the robots, and the students significantly preferred the robot displaying ‘positive’ body language over the one employing negative expressions.

“This was a large effect. The students applauded the robot following the ‘positive’ lecture, and they all wanted to make a selfie with the robot afterwards. This was not the case for the other ‘negative’ group.”

The researchers have also found evidence that the mood and body language of a robot can be contagious: in other words, humans start mirroring the attitude and demeanor of robots that they are interacting with. From the University’s press release:

‘Robots are increasingly going to be working with us, helping us and keeping us company. Social skills are important for these robots, to be able to work with us in harmony and to be accepted by us’ explains Dr Koen Hindriks, researcher at the Faculty of EEMCS and one of Junchao Xu’s PhD research supervisors.

[…]

What is new in this research is that the emotions are integrated into the robot’s function. As Hindriks explains, ‘For example, if a robot is pointing to something and then suddenly has to be ‘happy’, for example by throwing its arms in the air, that looks very unnatural. We have tried to improve on this and have integrated our new insights into a model and software that are as generally applicable as possible.

‘We found that some of the main factors for expressing robot emotions are the height of the hands, the magnitude of movements, the vertical position of the robot’s head and the speed with which the robot moves. Through experimentation, we also showed that people are able to recognise the robot’s moods through its body language, even when they had not been instructed to pay attention to this. We also saw evidence of an ‘emotional contagion effect’: the emotional state of participants in the experiment matched the emotional state of the robot.’

The robot is now being tested in elementary school classrooms.

A robot teaching itself to walk like a human toddler (CNBC)

Whoa! Careful there, little robot.

A team at UC Berkeley’s Robot Learning Lab has been using neural networks to enable a robot to teach itself how to walk in much the same way a human toddler does:

Darwin’s baby steps speak to what many researchers believe will be the greatest leap in robotics — a kind of general machine learning that allows robots to adapt to new situations rather than respond to narrow programming.

Developed by Pieter Abbeel and his team at UC Berkeley’s Robot Learning Lab, the neural network that allows Darwin to learn is not programmed to perform any specific functions, like walking or climbing stairs. The team is using what’s called “reinforcement learning” to try and make the robots adapt to situations as a human child would.

Like a child’s brain, reinforcement technology invokes the trial-and-error process.
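To give a feel for what "trial and error" means here, below is a toy sketch in Python. It is not the Berkeley team's method (they train a neural network on a real robot); it is a simple epsilon-greedy learner choosing among three made-up "gaits", where the function names, the reward numbers, and the parameters are all assumptions for illustration. The learner mostly repeats whatever has worked best so far, occasionally tries something else, and updates a running average of the reward for each option.

```python
import random


def learn_by_trial_and_error(true_rewards, episodes=2000, epsilon=0.1,
                             noise=0.1, seed=0):
    """Estimate each action's value by trying it and averaging the rewards.

    true_rewards is a hypothetical list of average payoffs, one per action;
    the learner never sees it directly, only noisy samples from it.
    """
    rng = random.Random(seed)
    estimates = [0.0] * len(true_rewards)  # current guess per action
    counts = [0] * len(true_rewards)       # how often each action was tried
    for _ in range(episodes):
        if rng.random() < epsilon:
            # Explore: pick any action at random.
            action = rng.randrange(len(true_rewards))
        else:
            # Exploit: pick the action with the best estimate so far.
            action = max(range(len(true_rewards)), key=estimates.__getitem__)
        # Observe a noisy reward for the chosen action.
        reward = true_rewards[action] + rng.gauss(0.0, noise)
        counts[action] += 1
        # Incremental running average of observed rewards.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates


def best_action(estimates):
    """Index of the action with the highest estimated value."""
    return max(range(len(estimates)), key=estimates.__getitem__)


# Three hypothetical gaits with made-up average rewards.
estimates = learn_by_trial_and_error([0.2, 0.5, 1.0])
print("preferred gait:", best_action(estimates))
```

With these made-up numbers the learner should end up preferring the third gait, because that is the one that pays off most when tried. Real robot learning replaces the three-entry table with a neural network and the fake rewards with sensor feedback, but the explore, observe, update loop is the same basic idea.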

X Prize to map 4,000m-deep ocean floor with robots (BBC)

A new X Prize competition has been launched with the aim of developing cheap and efficient robots for exploring the world’s oceans.

Sponsored by Shell and valued at $7 million (USD), the Shell Ocean Discovery competition challenges teams to map a 4 km deep, 500 sq km area of sea floor using autonomous robots. The prize must be claimed by the end of 2018.

There will be two rounds to the competition.

The first, to be held in 2017, will be undertaken at a shallower depth of 2,000 m, and require teams to make a bathymetric map of at least 20% of a 500 sq km zone of seabed in roughly 6-8 hours.

The top 10 teams will then go forward to the second round, which will be held at the full competition depth of 4,000 m. At least 50% of this area will have to be mapped in 12-15 hours.

A scanning resolution of 5 m per pixel is required. The teams will have to return high-resolution pictures from the deep, as well as one of a target specified by the organizers.
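To get a sense of how demanding those targets are, here is a quick back-of-the-envelope calculation in Python. It assumes the second round uses the same 500 sq km zone as the first (the quoted text says "this area" without restating the size), and the function names are just illustrative.

```python
def required_rate_km2_per_hour(zone_km2, fraction, hours):
    """Average mapping rate needed to cover `fraction` of the zone in `hours`."""
    return zone_km2 * fraction / hours


def pixel_count(area_km2, resolution_m):
    """Number of map pixels for an area at a given metres-per-pixel resolution."""
    area_m2 = area_km2 * 1_000_000
    return area_m2 / (resolution_m ** 2)


ZONE_KM2 = 500  # assumed to be the same zone in both rounds

# Round 1: at least 20% of the zone in roughly 6-8 hours.
r1_slow = required_rate_km2_per_hour(ZONE_KM2, 0.20, 8)
r1_fast = required_rate_km2_per_hour(ZONE_KM2, 0.20, 6)

# Round 2: at least 50% of the zone in 12-15 hours.
r2_slow = required_rate_km2_per_hour(ZONE_KM2, 0.50, 15)
r2_fast = required_rate_km2_per_hour(ZONE_KM2, 0.50, 12)

print(f"Round 1: {r1_slow:.1f} to {r1_fast:.1f} sq km per hour")
print(f"Round 2: {r2_slow:.1f} to {r2_fast:.1f} sq km per hour")
print(f"Round 2 pixels at 5 m: {pixel_count(ZONE_KM2 * 0.50, 5):,.0f}")
```

Under these assumptions, teams would need to sustain somewhere between roughly 12 and 21 sq km of seabed per hour, and the round-two minimum works out to about 10 million map pixels.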

Control and communication in the dark at 4,000 m will be tough enough, never mind the considerations for pressure, which will be about 40 megapascals – nearly 6,000 pounds per square inch.
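The pressure figure can be sanity-checked with the standard hydrostatic formula, pressure = density × gravity × depth. The short Python sketch below assumes a typical seawater density of 1,025 kg per cubic metre and ignores both atmospheric pressure at the surface and the slight increase in density with depth, so treat the result as an estimate.

```python
SEAWATER_DENSITY = 1025.0   # kg/m^3, assumed typical value
GRAVITY = 9.81              # m/s^2
PASCALS_PER_PSI = 6894.757  # 1 psi expressed in pascals


def hydrostatic_pressure_pa(depth_m, density=SEAWATER_DENSITY, gravity=GRAVITY):
    """Pressure from the water column alone (excludes atmospheric pressure)."""
    return density * gravity * depth_m


pressure_pa = hydrostatic_pressure_pa(4000)
pressure_mpa = pressure_pa / 1e6
pressure_psi = pressure_pa / PASCALS_PER_PSI

print(f"{pressure_mpa:.1f} MPa, about {pressure_psi:.0f} psi")
```

The estimate lands close to the quoted figures: a little over 40 megapascals, or a bit under 6,000 psi.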

Estonian turtle-robot searches for shipwrecks and treasure (Associated Press)

One of the downsides of using propeller-powered robots for investigating the sea floor is that the propellers disturb the water too much and throw up silt, a major problem for marine archaeologists who want to be able to detect, and preserve, precious marine artifacts.

Enter the vacuum-cleaner-sized U-CAT (Underwater Curious Archaeology Turtle): a propellerless robot with four silicon flippers inspired by sea turtle arms and legs.

“They move in a slow and quiet motion and won’t bring up sediment from the (sea) bottom,” says Taavi Salumae, a designer at the Biorobotics Center of Tallinn University of Technology.

The underwater probe has been developed since 2012 in the EU-funded Arrows project that focuses on new technologies for marine research. It can stay submerged for four hours at a depth of 100 meters (330 feet) on a single battery charge of two hours. It’s equipped with a camera and lights.

[…]

But its small size has a few drawbacks: It is limited to shallow waters, unlike large robots, some of which can reach depths of six kilometers (20,000 feet) without damage from water pressure. And it is not remotely controlled like traditional wired probes, which means there’s also a risk of losing it during missions.

Get ready to jump: Advanced robotics is the next great leap (Plant Magazine)

Interesting article about industrial robotics adoption in Canada:

Canada isn’t a lightweight in the development of robotics. Yes, we can lay claim to the famous Canadarm, but we also have three very innovative robotics developers (Clearpath Robotics, Robotiq and Kinova Robotics) in the latest Robotics Business Review global top 50.

Our adoption of the technology is less glorious. Canada’s installed base is hard to track. Because it’s small, it tends to be lumped in with North American statistics, but PLANT’s 2016 Manufacturers’ Outlook Survey provides a glimpse of Canadian adoption and it shows only 10% of the mostly smaller companies are using advanced robotics.

The Robotic Industries Association (RIA) reports 22,427 robots valued at $1.3 billion were ordered from North American companies in the first nine months of 2015, an increase of 6%, and Canada’s share is almost 2,100 units valued at about $134,000.

And Also…

The Pentagon is Nervous about Russian and Chinese Killer Robots (Defense One)

Plus:

- Build your own walking, waving, 3D printed ‘Social Quadruped’ robot (3ders.org)

- China’s New Trio of Urban Combat Robots (Popular Science)

- Next generation of robot technology enters the operating theatre (Financial Times)

- Now AI Machines Are Learning to Understand Stories (MIT Technology Review)

- Watch out! This robot could just steal your job (e27)

- Eye on Safety, California Sets Rules for Self-Driving Cars (ABC)

- FAA Announces Its Small UAS Registration Rules (Drone360)
