Robohub.org

White House releases reports focusing on opportunities and challenges of AI

While the future of artificial intelligence is probably going to be driven by Silicon Valley, the folks in Washington DC want their say about how it will work, too.

In two reports, the White House outlined its strategy for promoting artificial intelligence research and development in the US. While most of the bigger questions were punted to future legislators (“more research is needed” is a key phrase), the executive branch did draw some lines in the sand. Most importantly for the research community, the White House is not pushing for AI to be broadly regulated; instead, the use of the technology will be held to specific standards in the automotive, aviation, and finance industries.

Three key guiding philosophies were presented across the reports: AI needs to augment humanity instead of replacing it, AI needs to be ethical, and there must be an equal opportunity for everyone to develop these systems.

These subjects are on US President Barack Obama’s mind, too, as he said in a well-timed interview with Wired magazine. “Most people aren’t spending a lot of time right now worrying about singularity; they are worrying about ‘Well, is my job going to be replaced by a machine?’” Obama said.

Human-machine collaboration in its many forms is a major theme in the reports, titled “Preparing for the Future of Artificial Intelligence” and “National Artificial Intelligence Research and Development Strategic Plan.”

“The walls between humans and AI systems are slowly beginning to erode, with AI systems augmenting and enhancing human capabilities,” the Strategic Plan report says.

The White House imagines virtual personal assistants housed in smart glasses, automated factories that assist humans in complex building tasks, and systems that provide better data for farmers, all framed as potential job creators rather than job stealers. When it comes to manufacturing, the White House believes that more efficient automation could incentivize companies to bring their production back to US soil. While these are appealing futures, the reports mainly rely on macroeconomic forces to bring them about, rather than on specific initiatives.

The reports do briefly acknowledge the possibility that AI goes the complete opposite direction, making low- and medium-skill jobs redundant. The White House plans to release another report later this year to delve into that prospect more fully.

National security is also on the list: the reports suggest AI has a role in cybersecurity and can be used to detect and counter cyberattacks targeting US citizens or infrastructure. The White House also recommends algorithmic surveillance of individuals and crowds, while acknowledging that more study is needed on the matter, especially given that current attempts to implement “predictive policing” have been shown to be racially biased.

That brings ethics into the discussion. “The government needs to be involved a little bit more,” Obama told Wired. “Not always to force the new technology into the square peg that exists but to make sure the regulations reflect a broad base set of values. Otherwise, we may find that it’s disadvantaging certain people or certain groups.”

The government seemingly wants to tackle ethics with more transparency. “Researchers must learn how to design these systems so that their actions and decision-making are transparent and easily interpretable by humans, and thus can be examined for any bias they may contain, rather than just learning and repeating these biases,” the Strategic Plan says.

Right now, there’s no standard for transparency in AI research. Companies like Google and Facebook release open-source code and sometimes reveal the data they use, but no regulation requires them to. Recently, Facebook, Google, Amazon, IBM, and Microsoft joined together to form the Partnership on AI, with the goal of setting best practices for transparency, although no specifics have been released.

One way to combat biased algorithms is to provide unbiased data, something the White House reports urge. Carefully curated open data sets would not only let everyone see how algorithms are taught about the world, but also let anyone use them to develop their own AI.

“The lack of vetted and openly available datasets with identified provenance to enable reproducibility is a critical factor to confident advancement in AI,” the report reads.

Currently, there are standard open data sets in computer science, like the MNIST database of handwritten digits or ImageNet, but they’re not regulated and can contain their own biases. For example, ImageNet contains an overwhelming number of images of dogs and cats. When Google researchers used DeepDream last year to find out what ImageNet-trained algorithms naturally gravitated towards, the algorithms generated dog faces, showing a bias towards finding those patterns in images. Google recently announced its open-source answer to ImageNet, the Open Images dataset, which covers six times as many categories and is expected to be an improvement.

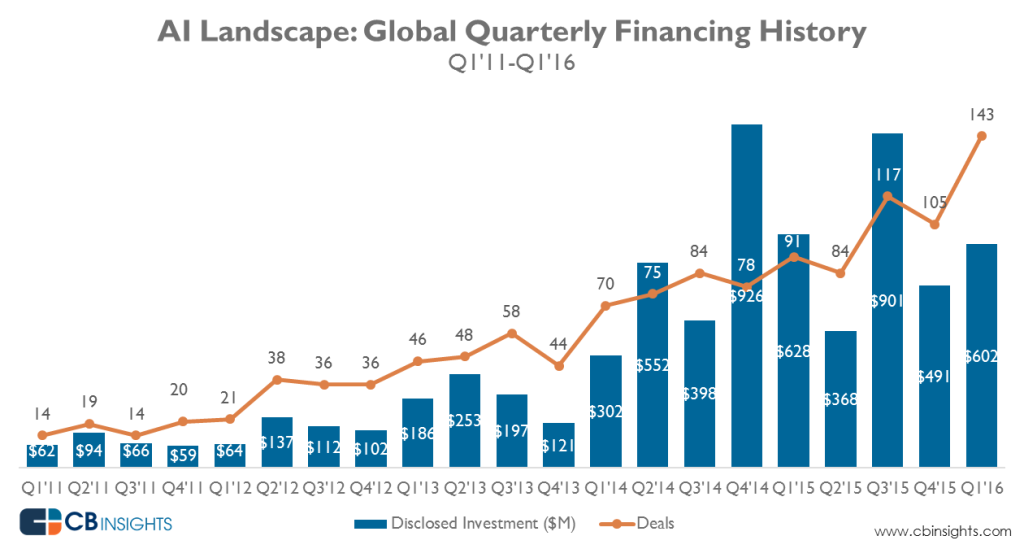

The government makes clear in the reports that it has led the charge on tech development for decades. It cites the internet and deep learning (the basis of most modern AI) as examples of government funding that paid off over the long term, with federal investment in both technologies dating back to the 1960s. In 2015, the federal government invested more than $1 billion in AI research.

But at the same time, the White House acknowledges that to accomplish what it lays out in the two reports, it must work with researchers and innovators in the field. The reports suggest most of the funding will come in the form of traditional grants and Grand Challenges, where teams build experimental tech to compete for a prize.

“The gap between the talent in the federal government and the private sector is actually not wide at all,” Obama told Wired. “The technology gap, though, is massive.”

Article originally published on WEF.
