Robohub.org

Liatris: looking to change how robots see the world

Fully autonomous robotics could be developed today if objects could tell a robot what they are, what they are for and how to use them. Liatris is a new open source project built with ROS. Its objective is to reliably read an object’s pose and identity without relying on vision. It presents an opportunity to tear down the barriers that keep robots out of our everyday lives.

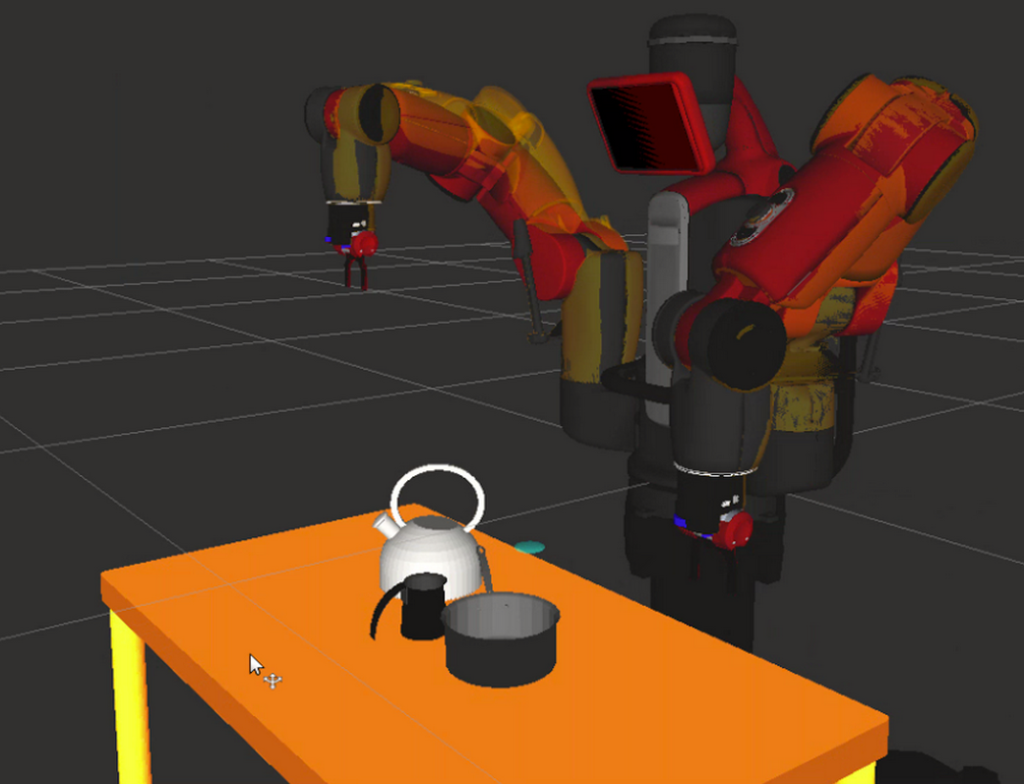

Traditionally, a robot has had to identify and locate every object in its workspace by itself. Liatris, which is built primarily using ROS, Kivy and Python, instead determines any object’s pose and identity using a touch screen and an RFID reader.
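To make the idea concrete, here is a minimal Python sketch of how a planar pose and an identity might be derived from touch and RFID readings. It is not Liatris’ actual code: the two-contact layout on the object’s base, the already-labelled `front` and `rear` touches, the `metres_per_pixel` scale and the `catalogue` lookup are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float    # metres, along the screen's x axis
    y: float    # metres, along the screen's y axis
    yaw: float  # radians, measured in the screen's own axes


def pose_from_touches(front, rear, metres_per_pixel):
    """Estimate a planar pose from two touch points given in pixels.

    Assumption: the object's base has two contact points, the caller
    already knows which touch is `front` and which is `rear`, and the
    object's origin lies midway between them.
    """
    fx, fy = front
    rx, ry = rear
    # Position: midpoint of the two contacts, converted to metres.
    x = (fx + rx) / 2.0 * metres_per_pixel
    y = (fy + ry) / 2.0 * metres_per_pixel
    # Heading: direction of the vector pointing from rear to front.
    yaw = math.atan2(fy - ry, fx - rx)
    return Pose2D(x, y, yaw)


def identify(tag_id, catalogue):
    """Look up an RFID tag ID in a hypothetical local catalogue (a dict)."""
    return catalogue.get(tag_id)
```

For instance, `pose_from_touches((300, 200), (100, 200), 0.001)` places the object at 0.2 m along each screen axis with a yaw of zero. A real system would also need to work out which touch is which, for example from an asymmetric contact pattern.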

The robot in the video is programmed to identify and grasp any object placed on the touch screen, regardless of the object’s shape, size or positioning. It perceives the object using the CAD model downloaded from Liatris’ API.

The downloaded data also tells the robot how to grasp the object while avoiding collisions with other objects (thanks to MoveIt).

The result is an accurate 3D perception and mobile manipulation solution. Liatris can even work simultaneously with multiple objects and touch screens.

Learn more at Liatris.org

tags: c-Research-Innovation, computer vision, ROS