Robohub.org

Evaluating the effectiveness of robot behaviors in human-robot interactions

This post is part of our ongoing efforts to make the latest papers in robotics accessible to a general audience.

Robots that interact with everyday users may need a combination of speech, gaze, and gesture behaviors to convey their message effectively. This is similar to human-human interaction, except that every behavior the robot displays must be designed and programmed ahead of time. As a result, designers of robot applications must understand how each of these behaviors contributes to the robot's effectiveness so that they can decide which behaviors to include in the application's design.

To this end, the latest paper by Huang and Mutlu in Autonomous Robots presents a method that designers can use to identify which behaviors produce a desired effect. They illustrate the method by designing and evaluating a set of narrative behaviors for a storytelling robot that might be used in educational, informational, and entertainment settings.

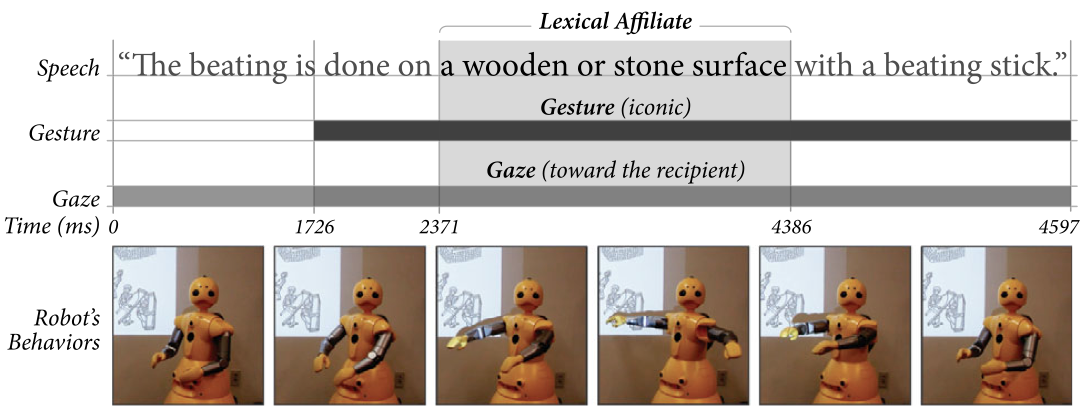

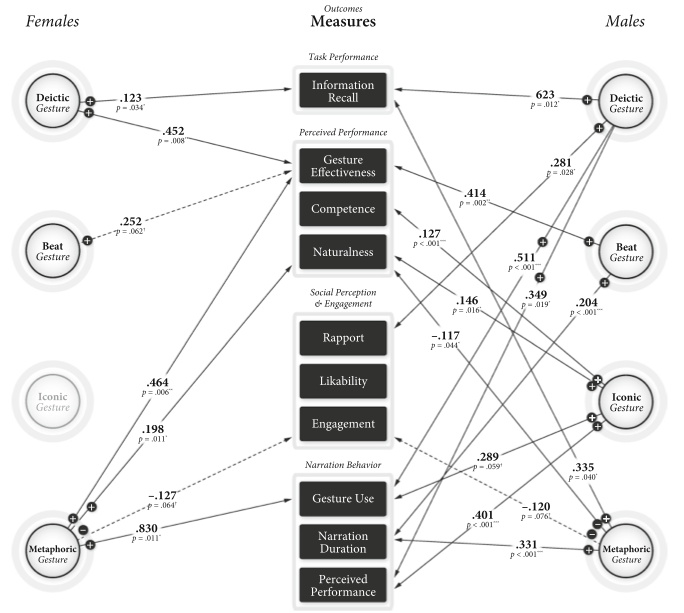

As an example, the first figure above shows the Wakamaru human-like robot coordinating speech, gaze, and gesture to tell a story about the process of making paper. The full narration lasted approximately six minutes. One result showed that the robot's use of pointing gestures improved its audience's recall of story information, and quantified how large that improvement was. The second figure above summarizes the impact of different gestures on the robot's performance. Robot designers can use such a diagram to choose appropriate behaviors from a large set of candidates, or to understand how each behavior affects the goals of their design.

For more information, you can read the paper Multivariate evaluation of interactive robot systems (Chien-Ming Huang and Bilge Mutlu, Autonomous Robots – Springer US, August 2014) or ask questions below!
