Robohub.org

Jellyfish-like robots could one day clean up the world’s oceans

Most of the world is covered in oceans, which are unfortunately highly polluted. One strategy for tackling the mounds of waste found in these sensitive ecosystems – especially around coral reefs – is to have robots do the cleanup. However, existing underwater robots are mostly bulky and rigid-bodied, unable to explore and sample in complex, unstructured environments, and noisy because of their electric motors or hydraulic pumps. For a more suitable design, scientists at the Max Planck Institute for Intelligent Systems (MPI-IS) in Stuttgart looked to nature for inspiration. They built a jellyfish-inspired robot the size of a hand that is versatile, energy-efficient and nearly noise-free. Jellyfish-Bot is a collaboration between the Physical Intelligence and Robotic Materials departments at MPI-IS. The paper, “A Versatile Jellyfish-like Robotic Platform for Effective Underwater Propulsion and Manipulation”, was published in Science Advances.

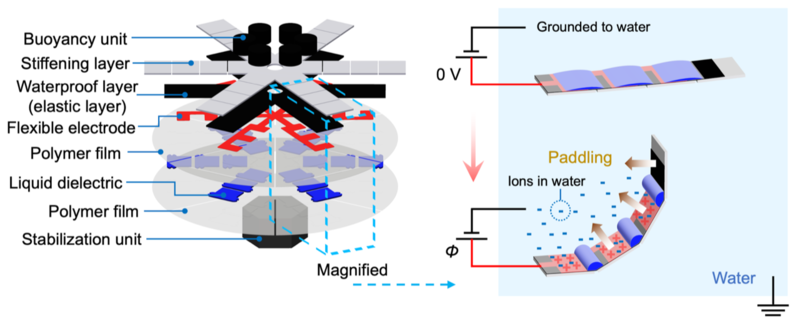

To build the robot, the team used electrohydraulic actuators, which serve as electrically driven artificial muscles that power the robot. Surrounding these muscles are air cushions as well as soft and rigid components that stabilize the robot and make it waterproof. This way, the high voltage running through the actuators cannot come into contact with the surrounding water. A power supply periodically provides electricity through thin wires, causing the muscles to contract and expand. This allows the robot to swim gracefully and to create swirls underneath its body.

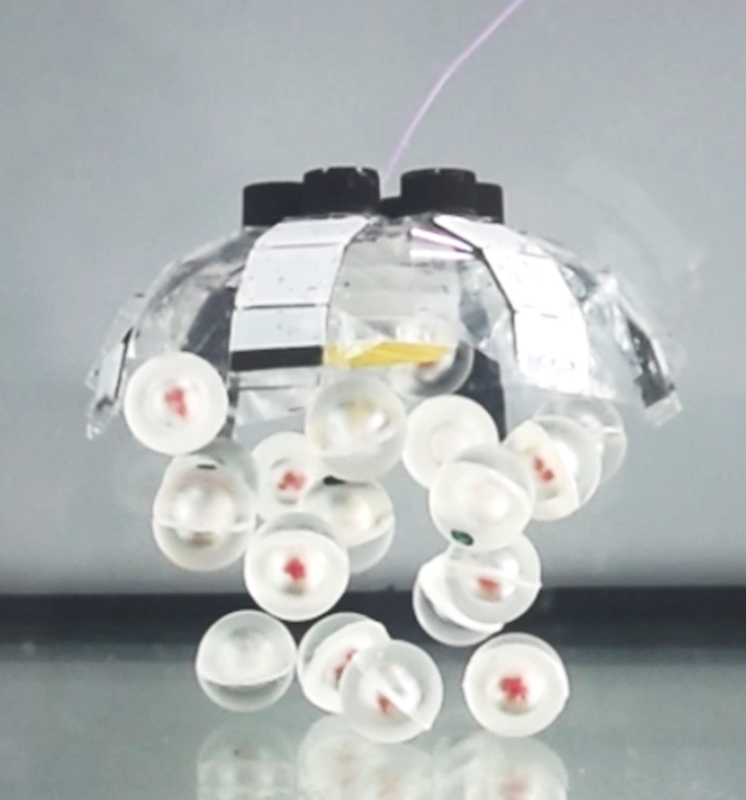

“When a jellyfish swims upwards, it can trap objects along its path as it creates currents around its body. In this way, it can also collect nutrients. Our robot, too, circulates the water around it. This function is useful in collecting objects such as waste particles. It can then transport the litter to the surface, where it can later be recycled. It is also able to collect fragile biological samples such as fish eggs. Meanwhile, there is no negative impact on the surrounding environment. The interaction with aquatic species is gentle and nearly noise-free”, Tianlu Wang explains. He is a postdoc in the Physical Intelligence Department at MPI-IS and first author of the publication.

His co-author Hyeong-Joon Joo from the Robotic Materials Department continues: “70% of marine litter is estimated to sink to the seabed. Plastics make up more than 60% of this litter, taking hundreds of years to degrade. Therefore, we saw an urgent need to develop a robot to manipulate objects such as litter and transport it upwards. We hope that underwater robots could one day assist in cleaning up our oceans.”

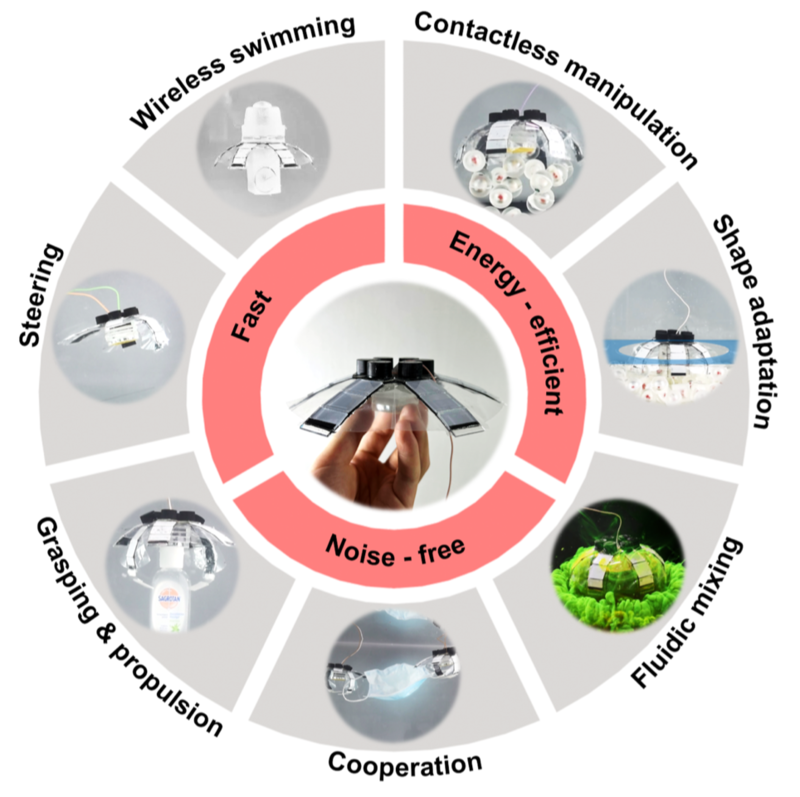

Jellyfish-Bots can move and trap objects without physical contact, operating either alone or in groups of several robots. Each robot works faster than other comparable inventions, reaching a speed of up to 6.1 cm/s. Moreover, Jellyfish-Bot requires only a low input power of around 100 mW. It also remains safe for humans and fish even if the polymer material insulating the robot were one day torn apart. Meanwhile, the noise from the robot cannot be distinguished from background levels. In this way, Jellyfish-Bot interacts gently with its environment without disturbing it – much like its natural counterpart.
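To put the two figures above side by side, here is a rough back-of-the-envelope sketch in Python. It divides the quoted input power by the quoted top speed to estimate the energy spent per meter of travel. The function name `energy_per_meter` is purely illustrative, and the calculation assumes that the robot draws around 100 mW while swimming at its top speed of 6.1 cm/s, which the article does not state explicitly.

```python
def energy_per_meter(power_w: float, speed_m_per_s: float) -> float:
    """Return the energy spent per meter travelled, in joules per meter."""
    if speed_m_per_s <= 0:
        raise ValueError("speed must be positive")
    return power_w / speed_m_per_s


# Figures quoted in the article, converted to SI units.
POWER_W = 0.100        # "around 100 mW" input power
SPEED_M_PER_S = 0.061  # "up to 6.1 cm/s"

if __name__ == "__main__":
    # Assumption: the quoted power is drawn while moving at the quoted top speed.
    cost = energy_per_meter(POWER_W, SPEED_M_PER_S)
    print(f"{cost:.2f} J per meter")  # prints "1.64 J per meter"
```

Under that assumption the robot would spend roughly 1.6 joules for every meter it swims. If the top speed is only reached at a higher power draw, the real figure would be larger.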

The robot consists of several layers: some stiffen the robot, others serve to keep it afloat or insulate it. A further polymer layer functions as a floating skin. Electrically powered artificial muscles known as HASELs are embedded in the middle of these layers. HASELs are plastic pouches filled with a liquid dielectric (an oil) and partially covered by electrodes. Applying a high voltage to an electrode charges it positively, while the surrounding water is charged negatively. This generates a force between the positively charged electrode and the negatively charged water that pushes the oil inside the pouches back and forth, causing the pouches to contract and relax – resembling a real muscle. HASELs can sustain the high electrical stresses generated by the charged electrodes and are protected against water by an insulating layer. This is important, as HASEL muscles had never before been used to build an underwater robot.

In a first step, the team developed a Jellyfish-Bot with a single electrode with six fingers, or arms. In a second step, they divided that single electrode into separate groups that can be actuated independently.

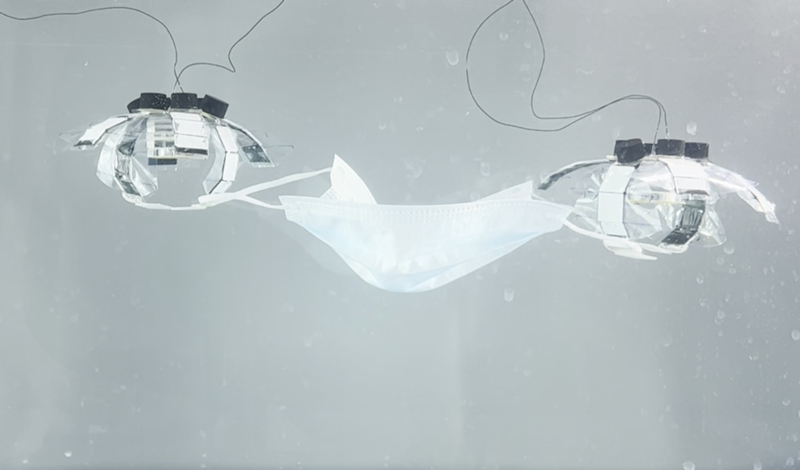

“We achieved grasping objects by making four of the arms function as a propeller, and the other two as a gripper. Or we actuated only a subset of the arms, in order to steer the robot in different directions. We also looked into how we can operate a collective of several robots. For instance, we took two robots and let them pick up a mask, which is very difficult for a single robot alone. Two robots can also cooperate in carrying heavy loads. However, at this point, our Jellyfish-Bot needs a wire. This is a drawback if we really want to use it one day in the ocean”, Hyeong-Joon Joo says.

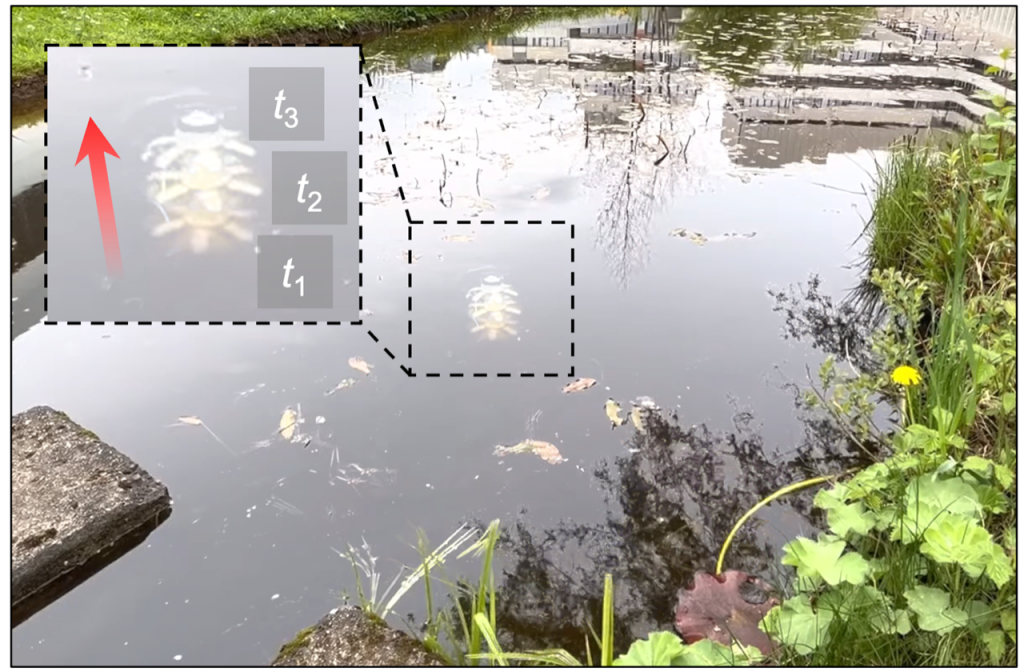

Perhaps wires powering robots will soon be a thing of the past. “We aim to develop wireless robots. Luckily, we have achieved the first step towards this goal. We have incorporated all the functional modules like the battery and wireless communication parts so as to enable future wireless manipulation”, Tianlu Wang continues. The team attached a buoyancy unit to the top of the robot and a battery and microcontroller to the bottom. They then took their invention for a swim in the pond on the Max Planck Stuttgart campus and successfully steered it along. So far, however, they have not been able to direct the wireless robot to change course and swim the other way.

Knowing the team, it won’t take long to achieve this goal.
