Robohub.org

Luminar unstealths their 1.5 micron LIDAR

Luminar, a Bay Area startup, has revealed details on their new LIDAR. Unlike all other commercial offerings, it uses 1.5 micron infrared light. They hope to sell it for $1,000.

1.5 micron LIDAR has some very special benefits. Your eye does not focus infrared light of this longer wavelength onto the retina. Instead, it is mostly absorbed in the front of the eye (the cornea and the fluid behind it) before it can get there. Ordinary light, including the 0.9 micron infrared light from the lasers in most commercial LIDARs, is focused to a point on the retina by the lens. That limits the amount of power you can put in the laser beam, because you must not create any risk to people’s eyes.

Because of this, you can put a lot more power into a 1.5 micron laser beam. That, in turn, means you can see farther and collect more points. You can easily get out to 250 meters, while regular LIDARs are limited to about 100 meters and are petering out there.
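A rough way to see why extra power buys range: in a simplified model where the return from a diffuse target larger than the beam spot falls off as 1/R², keeping the same signal at the detector while going from 100 meters to 250 meters takes (250/100)² = 6.25 times the transmit power. For a small target that does not fill the beam, the fall-off is closer to 1/R⁴, which makes the requirement much steeper. The sketch below does that arithmetic; the helper names are hypothetical, and the model deliberately ignores atmospheric absorption, optics and detector sensitivity, all of which differ between 0.9 and 1.5 micron systems.

```python
def power_ratio_for_range(r_old_m: float, r_new_m: float, exponent: int = 2) -> float:
    """Factor by which transmit power must grow to keep the same received
    power when the range grows from r_old_m to r_new_m.

    Simplified model (an assumption): received power falls off as
    1/R**exponent, with exponent=2 for an extended diffuse target that
    fills the beam and exponent=4 for a small target. Atmospheric loss,
    optics and detector differences are ignored.
    """
    return (r_new_m / r_old_m) ** exponent


def range_for_power_ratio(r_old_m: float, ratio: float, exponent: int = 2) -> float:
    """Inverse of power_ratio_for_range: the range reachable with `ratio`
    times the original transmit power, under the same simplified model."""
    return r_old_m * ratio ** (1.0 / exponent)


print(power_ratio_for_range(100, 250))               # 6.25
print(power_ratio_for_range(100, 250, exponent=4))   # about 39
print(range_for_power_ratio(100, 10))                # about 316 m
```

Under this model, range grows only with the square root of power, which is why being allowed many times more power, rather than a few percent more, is what moves the range from about 100 meters toward 250 meters.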

Why doesn’t everybody use 1.5 micron? The problem is that silicon sensors don’t respond to light at this wavelength, and silicon is the basis of all mass-market electronics. To detect 1.5 micron light you need different materials, which are not themselves that hard to find, but they are not available cheaply and off the shelf. So far, this has made units like this harder to build and more expensive.
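The reason silicon is blind here comes down to photon energy. A photon can only be absorbed in a semiconductor if its energy, E = hc/λ, is at least the material’s bandgap. Silicon’s bandgap is about 1.12 eV, which puts its cutoff near 1.1 micron: a 0.9 micron photon clears it, a 1.5 micron photon does not. The sketch below checks this using approximate room-temperature bandgaps. InGaAs is included only as one commonly used example of a material with a smaller bandgap (about 0.75 eV); the article does not say which material Luminar uses. The function names are hypothetical, and the simple threshold test ignores the gradual fall-off in silicon’s response as it approaches its cutoff.

```python
# Planck's constant times the speed of light, in eV*nm (approximately).
HC_EV_NM = 1239.84

# Approximate room-temperature bandgaps in eV (textbook values).
BANDGAP_EV = {
    "silicon": 1.12,
    "InGaAs": 0.75,  # In0.53Ga0.47As, used here only as an illustrative example
}


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of a single photon at the given wavelength."""
    return HC_EV_NM / wavelength_nm


def cutoff_wavelength_nm(bandgap_ev: float) -> float:
    """Longest wavelength whose photons still carry the bandgap energy."""
    return HC_EV_NM / bandgap_ev


def can_absorb(material: str, wavelength_nm: float) -> bool:
    """Simplified threshold test: True if the photon energy reaches the bandgap."""
    return photon_energy_ev(wavelength_nm) >= BANDGAP_EV[material]


for wl in (900, 1500):
    for mat in BANDGAP_EV:
        print(f"{wl} nm, {mat}: {photon_energy_ev(wl):.2f} eV, "
              f"absorbed={can_absorb(mat, wl)}")
print(f"silicon cutoff: {cutoff_wavelength_nm(BANDGAP_EV['silicon']):.0f} nm")
```

With these numbers, a 900 nm photon carries about 1.38 eV and a 1500 nm photon about 0.83 eV, so only the first clears silicon’s bandgap.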

If Luminar can overcome this, it will be valuable.

Why do you need to see 250 meters? Well, you don’t for city driving, though it’s nice. For highway driving you can also get by with 100 meters, using radar to perceive, at very low resolution, what’s going on beyond that. Still, there are things radar can’t tell you. Rare things, but still important. So you need a sensor that sees farther to spot things like stalled cars under bridges: radar sees those, but can’t tell them apart from the bridge.
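One way to put the difference between 100 and 250 meters in concrete terms is the warning time each range gives before reaching a stopped obstacle. The sketch below assumes a closing speed of 120 km/h toward a stationary object and ignores the time the system needs to detect and classify it; the speed and the function name are illustrative assumptions, not figures from the article.

```python
def warning_time_s(range_m: float, closing_speed_kmh: float) -> float:
    """Seconds until the vehicle reaches a stationary object first seen
    at range_m, assuming a constant closing speed (no braking)."""
    closing_speed_ms = closing_speed_kmh / 3.6  # km/h -> m/s
    return range_m / closing_speed_ms


for r in (100, 250):
    print(f"{r} m at 120 km/h: {warning_time_s(r, 120):.1f} s")
# 100 m at 120 km/h: 3.0 s
# 250 m at 120 km/h: 7.5 s
```

Under these assumptions, the longer range more than doubles the time available to confirm what a radar return actually is and to brake or change lanes gently.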

Until now, Google has been the only company to say it has a long-range LIDAR, and that unit has not been for sale. And as we all know, there is a famous lawsuit underway accusing Uber/Otto of copying Google’s LIDAR designs.

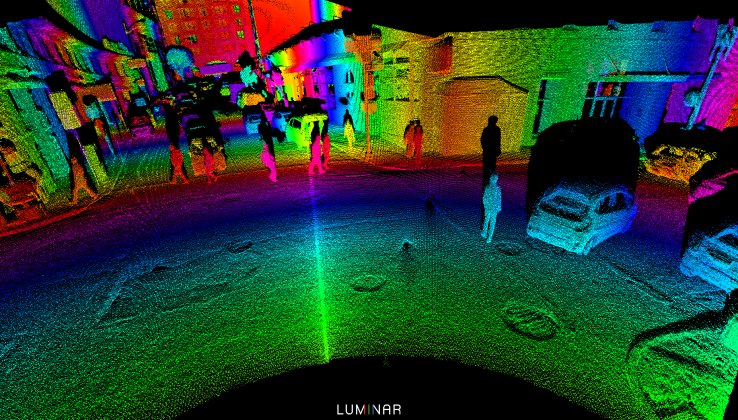

The Luminar point clouds are impressive. This will be a company to watch. (In the interests of disclosure, I am an advisor to Quanergy, another LIDAR startup.)

Article first appeared on robocars.com
