Robohub.org

Radhika Nagpal at #NeurIPS2021: the collective intelligence of army ants

The 35th conference on Neural Information Processing Systems (NeurIPS2021) featured eight invited talks. In this post, we give a flavour of the final presentation.

The collective intelligence of army ants, and the robots they inspire

Radhika’s research focusses on collective intelligence, with the overarching goal of understanding how large groups of individuals, with local interaction rules, can cooperate to achieve globally complex behaviour. These are fascinating systems. Each individual is minuscule compared to the massive phenomena that they create, and, with only a limited view of the actions of the rest of the swarm, they achieve striking coordination.

Looking at collective intelligence from an algorithmic point of view, the phenomenon emerges from many individuals interacting using simple rules. When run by large, decentralised groups, these simple rules result in highly intelligent behaviour.

The subject of Radhika’s talk was army ants, which demonstrate collective intelligence spectacularly. Without any leader, millions of ants work together to self-assemble nests and build bridge structures using their own bodies.

One particular aspect of study concerned the self-assembly of such bridges. Radhika’s research team, which comprised three roboticists and two biologists, found that the ants created bridges that adapt to traffic flow and terrain. The ants also disassembled a bridge when the flow of ants had stopped and it was no longer needed.

The team proposed the following simple hypothesis to explain this behaviour using local rules: if an ant is walking along, and experiences congestion (i.e. another ant steps on it), then it becomes stationary and turns into a bridge, allowing other ants to walk over it. Then, if no ants are walking on it any more, it can get up and leave.
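As a rough illustration, the local rule above can be written as a two-state machine for a single ant. This is only a minimal sketch, not the team's implementation: the names `Ant`, `State`, `step` and `stepped_on` are hypothetical, and it assumes that each ant senses nothing except whether another ant is currently on top of it. Geometry, timing and any delay before joining or leaving a bridge are ignored.

```python
from dataclasses import dataclass
from enum import Enum


class State(Enum):
    WALKING = "walking"
    BRIDGE = "bridge"


@dataclass
class Ant:
    """Hypothetical single-ant model of the local bridge-building rule."""

    state: State = State.WALKING

    def step(self, stepped_on: bool) -> State:
        """Apply the rule once, using only local information.

        `stepped_on` is an assumed sensor reading: True if another ant
        is currently walking on top of this one.
        """
        if self.state is State.WALKING and stepped_on:
            # Congestion: stop moving and become part of the bridge.
            self.state = State.BRIDGE
        elif self.state is State.BRIDGE and not stepped_on:
            # No traffic overhead any more: get up and leave.
            self.state = State.WALKING
        return self.state


if __name__ == "__main__":
    ant = Ant()
    readings = [False, True, True, False]
    history = [ant.step(r).value for r in readings]
    print(history)  # ['walking', 'bridge', 'bridge', 'walking']
```

Note that no ant in this sketch knows how long the bridge is or where the gap lies; any bridge-level behaviour would have to emerge from many such individuals applying the same rule.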

These observations, and this hypothesis, led the team to consider two research questions:

- Could they build a robot swarm with soft robots that can self-assemble amorphous structures, just like the ant bridges?

- Could they formulate rules which allowed these robots to self-assemble temporary and adaptive bridge structures?

There were two motivations for these questions. The first was to move closer to realising robot swarms that can solve problems in a particular environment. The second was to use a synthetic system to better understand the collective intelligence of army ants.

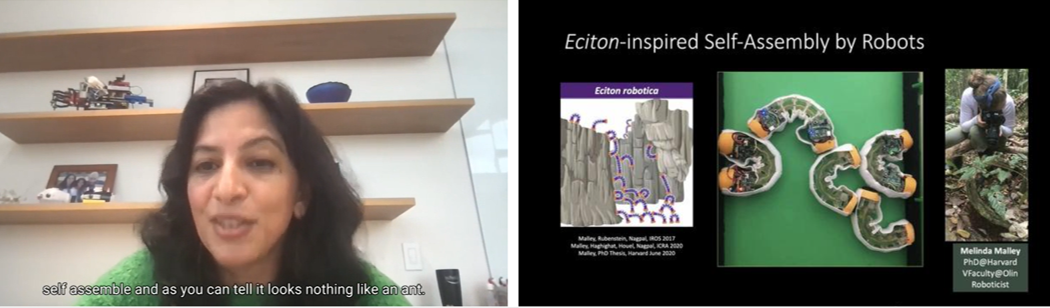

Screenshot from Radhika’s talk

Radhika showed a demonstration of the soft robot designed by her group. It has two feet and a soft body, and moves by flipping – one foot remains attached, while the other detaches from the surface and flips to attach in a different place. This allows movement in any orientation. Upon detaching, a foot searches through space to find somewhere to attach. By using grippers on the feet that can hook onto textured surfaces, and having a stretchable Velcro skin, the robots can climb over each other, like the ants. Each robot pulses, and uses a vibration sensor, to detect whether it is in contact with another robot. A video demonstration of two robots interacting showed that the team had successfully created a system that reproduces the behaviour described by the simple hypothesis outlined above.

To investigate the high-level properties of army ant bridges, which would require a vast number of robots, the team created a simulation. By modelling the ants with the same characteristics as their physical robots, they were able to replicate the high-level properties of army ant bridges using their hypothesised rules.

You can read the round-ups of the other NeurIPS invited talks at these links:

#NeurIPS2021 invited talks round-up: part one – Duolingo, the banality of scale and estimating the mean

#NeurIPS2021 invited talks round-up: part two – benign overfitting, optimal transport, and human and machine intelligence
