Robohub.org

RoboTED: a case study in Ethical Risk Assessment

A few weeks ago I gave a short paper at the excellent International Conference on Robot Ethics and Standards (ICRES 2020), outlining a case study in Ethical Risk Assessment – see our paper here. Our chosen case study is a robot teddy bear, inspired by one of my favourite movie robots: Teddy, in A.I. Artificial Intelligence.

Although Ethical Risk Assessment (ERA) is not new – it is, after all, what research ethics committees do – the idea of extending traditional risk assessment, as practised by safety engineers, to cover ethical risks is new. ERA is, I believe, one of the most powerful tools available to the responsible roboticist, and happily we already have a published standard that sets out guidance on ERA for robotics: BS 8611, published in 2016.

Before looking at the ERA, we need to summarise the specification of our fictional robot teddy bear: RoboTed. First, RoboTed is based on the following technology:

- RoboTed is an Internet (WiFi) connected device,

- RoboTed has cloud-based speech recognition and conversational AI (chatbot) and local speech synthesis,

- RoboTed’s eyes are functional cameras allowing RoboTed to recognise faces,

- RoboTed has motorised arms and legs to provide it with limited baby-like movement and locomotion.

Second, RoboTed is designed to:

- Recognise its owner, learning their face and name and turning its face toward the child.

- Respond to physical play such as hugs and tickles.

- Tell stories, while allowing a child to interrupt the story to ask questions or ask for sections to be repeated.

- Sing songs, while encouraging the child to sing along and learn the song.

- Act as a child minder, allowing parents to remotely listen, watch and speak via RoboTed.

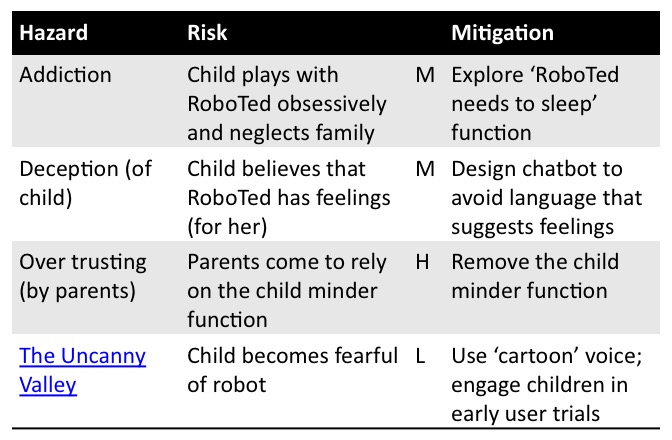

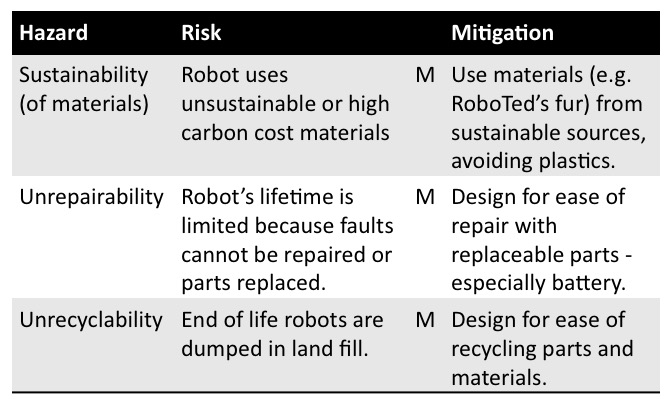

The tables below summarise the ERA of RoboTed for (1) psychological, (2) privacy & transparency and (3) environmental risks. Each table has four columns: the hazard, the risk, the level of risk (high, medium or low) and actions to mitigate the risk. BS 8611 defines an ethical risk as the “probability of ethical harm occurring from the frequency and severity of exposure to a hazard”; an ethical hazard as “a potential source of ethical harm”; and an ethical harm as “anything likely to compromise psychological and/or societal and environmental well-being”.
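BS 8611 ties ethical risk to the frequency and severity of exposure to a hazard, and each table row records a hazard, a risk, a level and mitigating actions. The following is a minimal sketch of how a team might record such rows and assign the high, medium or low level. It rests on assumptions: the 1 to 3 scoring scales, the likelihood-times-severity product and the thresholds are a generic risk-matrix convention chosen purely for illustration, not a method taken from BS 8611 or from our paper, and the names `EraRow` and `risk_level` and the example row are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """The three risk levels used in the ERA tables."""
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class EraRow:
    """One row of an ERA table: hazard, risk, level of risk, mitigation."""
    hazard: str
    risk: str
    level: Level
    mitigation: str


def risk_level(likelihood: int, severity: int) -> Level:
    """Hypothetical 3x3 risk matrix (an assumption, not from BS 8611).

    likelihood and severity are each scored 1 (low) to 3 (high);
    their product (1 to 9) is mapped onto low / medium / high.
    """
    if not (1 <= likelihood <= 3 and 1 <= severity <= 3):
        raise ValueError("likelihood and severity must be scored 1 to 3")
    score = likelihood * severity
    if score >= 6:      # products 6 and 9
        return Level.HIGH
    if score >= 3:      # products 3 and 4
        return Level.MEDIUM
    return Level.LOW    # products 1 and 2


# Illustrative row only: these values are made up, not taken from our tables.
row = EraRow(
    hazard="Remote listen/watch feature (hypothetical example)",
    risk="Unauthorised access to the child via RoboTed's cameras",
    level=risk_level(likelihood=2, severity=3),
    mitigation="Require strong authentication for remote access",
)
print(row.level.value)  # high
```

Keeping the level as a derived value rather than a free-text entry makes the scoring assumptions explicit, so a team can argue about the scores and thresholds rather than about the labels.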

(1) Psychological Risks

(2) Security and Transparency Risks

(3) Environmental Risks

For a more detailed commentary on each of these tables see our full paper – which also, for completeness, covers physical (safety) risks. And here are the slides from my short ICRES 2020 presentation:

Through this fictional case study, we argue that we have demonstrated the value of ethical risk assessment. Our RoboTed ERA has shown that attention to ethical risks can:

- suggest new functions, such as “RoboTed needs to sleep now”,

- draw attention to how designs can be modified to mitigate some risks,

- highlight the need for user engagement, and

- reject some product functionality as too risky.

But ERA is not guaranteed to expose all ethical risks. It is a subjective process which will only be successful if the risk assessment team are prepared to think both critically and creatively about the question: what could go wrong? As Shannon Vallor and her colleagues write in their excellent Ethics in Tech Practice toolkit, design teams must develop the “habit of exercising the skill of moral imagination to see how an ethical failure of the project might easily happen, and to understand the preventable causes so that they can be mitigated or avoided”.
