Robohub.org

Robots14 brings it all together

One of the chief goals of the Robots14 event in Boston last month was to bring various constituents of the robotics community – innovators, researchers, startups, entrepreneurs – together in one place to hash out what it takes to go “from imagination to market”. Co-organized by Swissnex Boston, Robohub, and Swisslink in collaboration with the Martin Trust Center for MIT Entrepreneurship, this one-day, single-track seminar and networking event at MIT’s cozy Wong Auditorium was both varied and intimate enough to achieve just that. In case you missed it, here’s a recap.

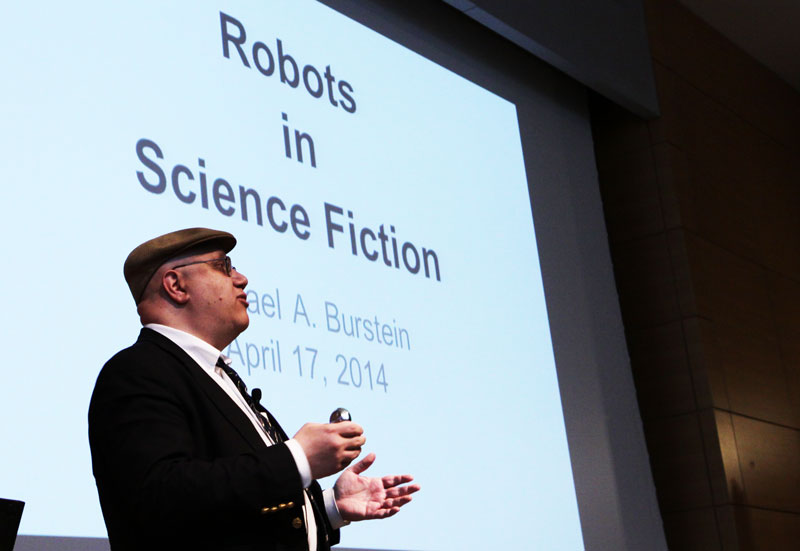

The morning kicked off with a talk by sci-fi writer Michael A. Burstein, who reminded us that the history of robotics began in the imagination, with early classics like Hephaestus from the Iliad and the Pygmalion myth from Ovid describing mankind’s obsession with automaton figures that were human-like “but not born a human being”. Other early literary influences included the Hebrew Golem, Frankenstein and even Tik-Tok from the Wizard of Oz series, but the first time the word “robot” appeared was in the 1920 Czech play by Karel Čapek called R.U.R., which had the subtitle “Rossum’s Universal Robots”. Even the early Oxford English Dictionary definition of robot as a “chiefly science fiction” term illustrates the literary influence on the field. Watch Burstein’s talk here.

Nature can be stranger than science fiction, however. Radhika Nagpal from Harvard’s Wyss Institute described how self-organizing biological systems like termite colonies can build complex structures through the decentralized actions of many small and limited individuals – a great source of inspiration for roboticists and computer scientists looking to build cooperative systems. Nagpal’s research combines the ideas of “self-assembly” and “social insects” to look at how simple robots with minimal sensing and actions can “mark” or “encode” the environment as a form of communication, allowing them to coordinate to build complex structures that are larger than the robots themselves. Watch Nagpal’s talk here.

MIT’s Sangbae Kim also takes his research cues from nature, and asks us “What is the role of biology in the design process of making a robot?” Pointing to his bio-inspired work on the MIT Cheetah, which can run and gallop with remarkable efficiency, Kim reminded us that, unlike typical robots, animals aren’t designed for a single task; their bodies have evolved not just to jump, run or balance, but also to find food, socialize and reproduce. As such, simplifying models are needed if we are to begin to understand and efficiently mimic animal movements like galloping, turning and jumping. With optimization as his next focus, Kim thinks the Cheetah robot system could approach the efficiency of cars within the next few years. Watch Kim’s talk here.

Biomechanics is also fundamental to the research of Conor Walsh, which was presented by Zivthan Dubrovsky from Harvard’s Wyss Institute. Walsh designs soft wearable robots with stretchable sensors that measure tension and force in various directions; these devices can be used to augment the skeletal structure in people with physical impairments. Noting that rigid exoskeletons actually make it harder to walk, Walsh proposes that soft wearable robotics can augment performance without interfering with our underlying biomechanics. And unlike rigid systems, which would require multiple degrees of freedom, soft robotics makes sophisticated bending and twisting motions possible by applying fibre reinforcements and laminates to the device surface.

Flexible materials aren’t just useful in mechanical applications. Dario Floreano of EPFL has worked with bendable sensors that take their cue from nature too, but his focus is vision. Using the compound shape of insect eyes as inspiration, his lab developed Vision Tape – a bendable vision sensor that can be applied to clothing and hats – as well as its predecessor, the Curved Artificial Compound Eye (CurvACE). This technology uses flexible vision sensors to create curved ‘eyes’ that have a wide field of view and excellent motion perception, even in dark environments, making them ideal for obstacle avoidance in wearable applications (such as clothing that can be used as a collision-alert system for the blind), or for flight control in UAVs. Watch Floreano’s talk here.

There was a time when computers were elaborate technologies that were only used by experts, and when Cynthia Breazeal from MIT’s Media Lab first entered the field of robotics, “it was very much in the same place … No one was asking: What would it mean to bring this technology into the home?” In her early work with Kismet, however, Breazeal noticed that robots had the ability to push our psychological buttons in a very profound way: what would it mean to build a socially intelligent robot? Citing research that showed that people drive more safely with a robot assistant than with a smartphone assistant or even a human assistant, Breazeal asks “What if it’s the case that people do better with robots?” Breazeal envisions social robotics as a vector for bringing robotics into wider use, and suggests that successful commercialization hinges on thinking of robots as a new media platform through which companies will place recognized brands into the home.

Following a lunchtime packed with robot demos, the startup pitches kicked off the afternoon session. Adrien Briod pitched the Gimball as a robot designed for flight in cluttered spaces – its rotating spherical cage enables it to safely collide with obstacles (even humans!) and continue flying afterwards. John Amend from Empire Robotics pitched the Versaball, a unique gripper with a knack for picking up all kinds of items, from smartphones and Lego pieces to plastic cups and even broken glass! Arron Acosta from Rise Robotics pitched the Cyclone Muscle, an affordable lightweight linear actuator that uses a differential conical drive to turn a rotary motor into a linear ‘muscle’. Aaron Horowitz from Sproutel pitched Jerry the Bear, a robotic stuffed bear that teaches children with Type 1 diabetes to manage their illness through play.

While most robots aren’t designed to elicit a social or emotional response, some, like Jerry the Bear, are. Kate Darling from MIT’s Media Lab spoke about near-term ethical questions in social robot design and human interaction, noting that we respond to social cues from lifelike machines, even when we know they are not alive. For this reason, she argues, it may be in our best interest to extend ‘rights’ to robots (much as we have done for animals), not because robots inherently need rights, but because ‘rights’ help to set the bar for socially acceptable behaviour. You wouldn’t want to hang out with someone who ‘tortures’ lifelike robots, would you? Watch Darling’s talk here.

Organizations may invest in robotics to improve efficiency, but Matt Beane from MIT Sloan says that robots can be a source of technical legitimacy too. Drawing on a case study of telepresence robots used within a hospital network, Beane concluded that “The prime value of a robot may not be in what it was designed to do, but in its value as a legitimizing symbol.” Watch Beane’s talk here.

Perhaps one of the best-known imagination-to-market success stories in robotics is Kiva Systems. Kiva’s concept – to bring thousands of networked mobile robots to distribution warehouses to automate the delivery of consumer goods – was inspired in part when co-founder Mick Mountz discovered a video of the fast-moving robot team that then-Cornell professor Raffaello D’Andrea had fielded at a RoboCup soccer event. D’Andrea soon joined Mountz and electrical engineer Peter Wurman as fellow co-founders, and the threesome embarked on a wild ride that reached epic proportions when Kiva was sold to retail giant Amazon.com for $775 million in 2012. Parris S. Wellman, Senior Director of Engineering at Kiva, spoke about what lies ahead for Kiva now that it has been sold and has to scale to Amazonian proportions. Fortunately for Kiva, scalability was built into the system design from the get-go.

Ben Einstein from Bolt spoke about the challenges of manufacturing in the robotics space. Although hardware has suddenly become the hot thing for VCs thanks to cheap components, established manufacturing pipelines in China, crowdfunding and 3D printing for rapid prototyping, moving from idea to market remains a serious challenge for most robotics startups. One of the biggest obstacles is convincing VCs that you are solving a real need for a lot of people; they don’t want to invest in a product you sell online, they want to invest in a product that can be purchased through major retailers like Best Buy, which will require thousands of product units on standby. And every ten cents of production cost per unit matters at that scale … it’s a whole new way of thinking about product design for most entrepreneurs when they are starting out. Watch Einstein’s talk here.

Daniel Theobald from Vecna rounded out the speakers’ list with a talk on how we can expect the robotics market to impact us as it evolves in the coming years. Noting that it’s gotten easier since Google “vacuumed up” so many companies and legitimized the robotics space, Theobald said the key to success is to automate things that don’t matter so that people can focus on things that do matter. Theobald expects that robots will be able to do just about any physical job a human can do within the next few decades, meaning that there will be a lot of people looking for something to do in the coming years if we do not make an effort now to rethink our relationship to technology. He believes that the onus is on scientists, engineers and businesses to plan how this new technology can be leveraged to improve the stability of our economic environment, and not just disrupt it.

Robots14 wrapped up with a lively panel discussion on what it takes to launch your robot – you can find a summary of our favourite soundbites from the panel here.

Hope to see you at ROBOTS next year!
