Robohub.org

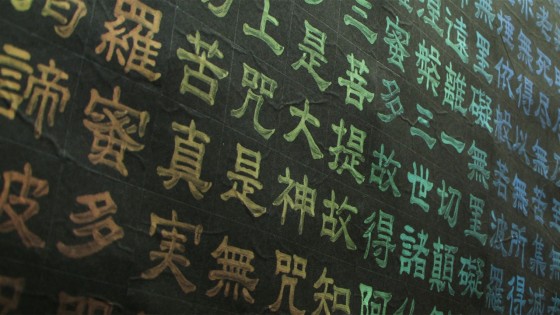

Transience – Dynamic color-changing calligraphy harmonizes tradition with technology

Transience is an artwork by the Wakita Lab at Keio University. It is intended to represent a harmony between calligraphy and computers, by dynamically altering the color of calligraphy on paper.

“At first sight, it’s hard to understand, but if you watch for about two minutes, I think you’ll see how the color gradually changes. We suspected that this kind of transient effect could be achieved by combining calligraphy with the computer.”

To change the color of the ink on the paper, the research group ran numerous tests with several kinds of functional ink and conductive materials. In the process, they developed this unique coloring system.

“We’re using ink that changes color with temperature. But we’ve devised a way to change the temperature by printing conductive ink on the back of the paper, and not requiring the previous actuators. This enables us to control the color without losing the look and feel of paper. That’s the major point about this technology. We control the color by using that mechanism and a microcontroller.”

Currently, the computer is programmed to change the color of four rows of characters at a time, but the characters can also be controlled individually.
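The control scheme described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not the lab's firmware: the thermal model, the threshold temperature, the heating and cooling constants, and the names `Row` and `simulate` are all hypothetical. It treats each row as having its own conductive-ink heater, heats rows in groups (four at a time by default), and reports which rows are warm enough for the ink to have changed color.

```python
from dataclasses import dataclass

# All constants below are illustrative assumptions, not measured values.
AMBIENT_C = 22.0    # assumed room temperature
THRESHOLD_C = 31.0  # assumed temperature at which the ink changes color
HEAT_RATE = 1.5     # assumed degrees C gained per step while heated
COOL_FACTOR = 0.1   # assumed fraction of excess heat lost per step


@dataclass
class Row:
    """One row of characters with its own (hypothetical) printed heater."""
    temp_c: float = AMBIENT_C
    heater_on: bool = False

    def step(self) -> None:
        # Add heat if the heater is on, then lose heat toward ambient.
        if self.heater_on:
            self.temp_c += HEAT_RATE
        self.temp_c -= COOL_FACTOR * (self.temp_c - AMBIENT_C)

    @property
    def color_changed(self) -> bool:
        return self.temp_c >= THRESHOLD_C


def simulate(num_rows: int = 8, group_size: int = 4, steps_per_group: int = 20):
    """Heat rows one group at a time; record color state after each group."""
    rows = [Row() for _ in range(num_rows)]
    history = []
    for start in range(0, num_rows, group_size):
        active = set(range(start, min(start + group_size, num_rows)))
        for i, row in enumerate(rows):
            row.heater_on = i in active
        for _ in range(steps_per_group):
            for row in rows:
                row.step()
        history.append([row.color_changed for row in rows])
    return history


if __name__ == "__main__":
    for phase, states in enumerate(simulate()):
        print(phase, states)
```

Setting `group_size=1` in this sketch corresponds to controlling rows one at a time; the same idea would extend to individual characters if each had its own heater trace.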

“For art on paper, an extensive culture has accumulated, including typesetting, woodcuts, and calligraphy. We’d like to keep expressing ideas on paper, while thinking about how to combine that extensive culture with computers.”
