Robohub.org

Write and play your own sheet music with the Gocen

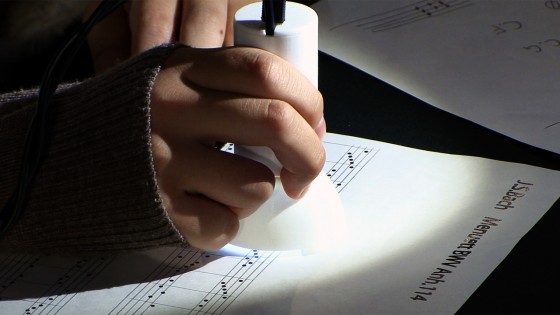

The Gocen is a device that scans and plays handwritten sheet music in real time. It is being developed by a group at Tokyo Metropolitan University led by Assistant Professor Tetsuaki Baba.

“First, the system looks at the stave, then at the notes, then at the position of the notes, to determine the high notes. In addition, it directly reads words such as piano or guitar. The computer automatically recognizes them, and changes the instrument. Also, for example, if this melody is in F minor, rather than C major, when the system reads the letters Fm, it has the ability to add four flats.”
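The key-signature step described in the quote can be illustrated with a short sketch. This is not the Gocen's actual code, which the article does not show; it only assumes standard music theory (F minor carries four flats: B, E, A and D) and uses hypothetical names such as `FLATS_IN_KEY` and `midi_pitch`. Only a few flat-side keys are listed.

```python
# Minimal sketch (not the Gocen implementation): apply a key signature,
# read as a text label such as "Fm", to notes taken from the staff.

# Order in which flats are added to a key signature (standard music theory).
FLAT_ORDER = ["B", "E", "A", "D", "G", "C", "F"]

# Number of flats for a few key labels. Hypothetical table for illustration;
# sharp keys are omitted.
FLATS_IN_KEY = {
    "C": 0, "Am": 0,
    "F": 1, "Dm": 1,
    "Bb": 2, "Gm": 2,
    "Eb": 3, "Cm": 3,
    "Ab": 4, "Fm": 4,
}

# Semitone offsets of the natural notes above C.
NATURAL_SEMITONES = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def flatted_letters(key_label):
    """Return the set of note letters lowered by the given key signature."""
    return set(FLAT_ORDER[:FLATS_IN_KEY[key_label]])


def midi_pitch(letter, octave, key_label="C"):
    """MIDI note number for a staff note with the key signature applied (C4 = 60)."""
    pitch = 12 * (octave + 1) + NATURAL_SEMITONES[letter]
    if letter in flatted_letters(key_label):
        pitch -= 1  # lower by one semitone
    return pitch


if __name__ == "__main__":
    print(sorted(flatted_letters("Fm")))   # the four flatted letters
    print(midi_pitch("A", 4, "C"))         # A natural in C major
    print(midi_pitch("A", 4, "Fm"))        # A flat in F minor
```

With this table, writing "Fm" next to the stave lowers every B, E, A and D by a semitone, while the other notes are left unchanged.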

The sheet music image is analyzed using the OpenCV library in combination with a unique algorithm. While the play head is over a note, the system keeps sounding that note, and with stringed instruments, moving the head up and down makes the pitch fluctuate. The size of each note determines its volume, and the system can handle chords as well.
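How note size and play-head movement might translate into sound can be sketched as follows. The article does not say how the Gocen performs these mappings, so the linear scaling, the MIDI output ranges, and every name and threshold here (`size_to_velocity`, `offset_to_pitch_bend`, `min_area`, `max_area`, `max_offset_px`) are assumptions made for illustration.

```python
# Minimal sketch (not the Gocen implementation): map a detected note's size
# to loudness and the play head's vertical offset to a pitch bend.
# All thresholds are hypothetical values in pixels.


def size_to_velocity(area_px, min_area=50.0, max_area=500.0):
    """Linearly map note-head area to a MIDI velocity in 1..127, clamped."""
    t = (area_px - min_area) / (max_area - min_area)
    t = max(0.0, min(1.0, t))
    return 1 + round(t * 126)


def offset_to_pitch_bend(offset_px, max_offset_px=20.0):
    """Map vertical offset from the note center to a 14-bit MIDI pitch bend.

    8192 means no bend. Positive offsets (assumed here to mean the play head
    is above the note center) bend the pitch upward.
    """
    t = max(-1.0, min(1.0, offset_px / max_offset_px))
    return max(0, min(16383, 8192 + round(t * 8191)))


if __name__ == "__main__":
    for area in (50, 275, 500):
        print(area, size_to_velocity(area))
    for offset in (-20, 0, 20):
        print(offset, offset_to_pitch_bend(offset))
```

Clamping keeps very small or very large handwritten notes inside the valid range instead of producing silent or out-of-range values.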

“This idea arose when we were talking with music publishing companies. Until now, sheet music was really only used by placing it on a stand to play an instrument. But it could be played by computer in real time, and given various expressions. We think a system like this will make it easier for children and beginners to learn about musical notation.”

“Lots of people use computers to compose music, but it’s been found through research that paper works well at the initial stage, for deciding what kind of composition, or what kind of melody. In that regard, I feel that this system is very effective as a composition support system.”
