Robohub.org

A new artificial compound eye

Fruit fly (Drosophila melanogaster). Source: Francis Prior/flickr

Taking cues from the natural world is an increasingly popular approach in robotics. This week, in a paper published in the Royal Society journal J. R. Soc. Interface, a team from NCCR Robotics and LIS, EPFL presents a new artificial compound eye inspired by the eye of the fruit fly.

Evolution has cleverly equipped the compound eyes of insects and other arthropods with key features that enable them to react instantly to danger, even in cluttered environments. Their eyes produce low-resolution images with wide fields of view, high sensitivity to motion and a near-infinite depth of field, all at low energy cost. An insect's eye is made up of hundreds to tens of thousands of ommatidia, each an individual sight receptor. Every ommatidium is oriented slightly differently from its neighbours, allowing the brain to collect and compare information from all of them and piece together a complete image of the surroundings. These fast, simple eyes are what allow insects to react so quickly when you try to swat them.

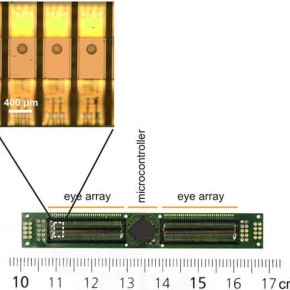

Each small eye can be used individually or combined with others. Source: Pericet-Camara et al., 2015.

With all these advantages in mind, the team, led by Prof. Dario Floreano, has created an artificial version of an ommatidium in which three hexagonal photodetectors, arranged in a triangle, sit underneath a single lens. The photodetectors work together, combining perceived changes in the light pattern across the visual field (optic flow) to reveal what is moving in the scene and in which direction. Because optic flow arises from relative motion, movement is picked up even when the eye itself is moving and the environment remains stationary. With its three photodetectors registering optic flow in different directions, a single eye can function as a standalone sensor, so the multiple, perfectly aligned single eyes required in previous solutions are no longer needed.
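The article does not spell out how the three photodetectors' signals are combined. As a rough illustration only, the sketch below assumes a simplified model that may well differ from the authors' method: each pair of photodetectors (one pair per edge of the triangle, so baselines at 0°, 60° and 120°) yields a one-dimensional flow component along its baseline, and the two-dimensional flow vector is recovered by least squares. The function name `flow_from_three_pairs` and the baseline angles are hypothetical.

```python
import math


def flow_from_three_pairs(components, angles_deg=(0.0, 60.0, 120.0)):
    """Least-squares 2D optic-flow vector from 1D flow components.

    Hypothetical model: components[i] is the flow measured along the
    baseline of photodetector pair i, oriented at angles_deg[i].
    Each measurement is the projection u_i = v . d_i, with
    d_i = (cos a_i, sin a_i); we solve (D^T D) v = D^T u for v.
    """
    sxx = sxy = syy = bx = by = 0.0
    for u, a in zip(components, angles_deg):
        cx, cy = math.cos(math.radians(a)), math.sin(math.radians(a))
        # Accumulate D^T D (symmetric 2x2) and D^T u
        sxx += cx * cx
        sxy += cx * cy
        syy += cy * cy
        bx += cx * u
        by += cy * u
    det = sxx * syy - sxy * sxy
    # Closed-form inverse of the 2x2 normal matrix applied to D^T u
    vx = (syy * bx - sxy * by) / det
    vy = (sxx * by - sxy * bx) / det
    return vx, vy


# Simulate the three pair readings for a true flow of (1.0, 0.5)
true_vx, true_vy = 1.0, 0.5
readings = [
    true_vx * math.cos(math.radians(a)) + true_vy * math.sin(math.radians(a))
    for a in (0.0, 60.0, 120.0)
]
vx, vy = flow_from_three_pairs(readings)
direction = math.degrees(math.atan2(vy, vx))
print(f"flow = ({vx:.3f}, {vy:.3f}), direction = {direction:.1f} deg")  # about 26.6 deg
```

Three measurements for two unknowns make the system over-determined, so least squares averages out some of the noise in any single component; that is one plausible benefit of a triangular layout under this simplified model.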

The eye has been tested in a wide range of lighting conditions and works in environments ranging from a poorly lit room to bright sunshine. It even runs three times faster than the insect eyes it is modelled on, with 300 frames per second recorded during testing. This allows a picture of the environment to build up as the robot moves, enabling it to spot obstacles and react quickly. Its ability to extract data rapidly and provide an image suitable for navigation, without high energy expenditure, is what makes this eye so versatile.
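To see why fast optic-flow measurement helps with obstacle detection, consider the standard motion-parallax relation for an observer translating in a straight line without rotating: a static point at distance d and bearing θ from the direction of travel sweeps across the visual field at angular rate ω = v·sin θ / d, so nearby objects produce larger flow. The sketch below inverts that relation and also works out what 300 frames per second means per frame. The speed, bearing and flow values are hypothetical, and the article does not say the eye is used in exactly this way.

```python
import math

FRAME_RATE_HZ = 300  # frame rate reported in the article's tests


def distance_from_flow(speed_m_s, bearing_deg, flow_rad_s):
    """Distance to a static object from translational optic flow.

    Assumes pure straight-line translation (no rotation) at speed_m_s,
    with the object at bearing_deg from the direction of travel:
    omega = v * sin(theta) / d  =>  d = v * sin(theta) / omega.
    """
    if flow_rad_s <= 0:
        raise ValueError("flow must be positive")
    return speed_m_s * math.sin(math.radians(bearing_deg)) / flow_rad_s


speed = 2.0  # hypothetical MAV speed in m/s
# Object directly to the side (90 deg) producing a hypothetical 4 rad/s of flow
d = distance_from_flow(speed, 90.0, 4.0)
print(f"estimated distance: {d:.2f} m")  # 0.50 m
print(f"{1000 / FRAME_RATE_HZ:.1f} ms between frames")  # 3.3 ms
print(f"{1000 * speed / FRAME_RATE_HZ:.1f} mm travelled per frame")  # 6.7 mm
```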

Eyes can be arranged on a flexible array. Source: Pericet-Camara et al., 2015.

This new sensor measures 1925 × 475 × 860 μm and weighs only 2 mg, making it ideal for micro air vehicles (MAVs) and other flying robots where both speed and payload are critical. A further advantage is that multiple sensors can be used together, each pointing in a different direction, to form a miniature omnidirectional camera for motion detection. Because the sensors work independently, they can be mounted in any configuration, even on flexible substrates. The team has demonstrated their compositional eye in the form of a bendable strip, which Prof. Floreano calls Vision Tape because it can be attached to any planar or curved object.
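The Vision Tape idea can be illustrated with simple geometry. Assuming, hypothetically, that the strip is wrapped around a cylinder of radius R and that each elementary eye looks along the local outward surface normal, neighbouring eyes spaced p apart along the strip differ in viewing azimuth by p/R radians. The 2 mm pitch and 10 mm radius below are made-up values (the pitch is chosen only to exceed the largest stated sensor dimension, 1925 μm), the function names are hypothetical, and the sketch tracks pointing directions only, not each eye's field of view.

```python
import math


def viewing_directions(n_sensors, pitch_mm, radius_mm):
    """Viewing azimuths (degrees) of eyes on a strip bent around a cylinder.

    Hypothetical geometry: eyes are pitch_mm apart along the strip and each
    looks along the outward surface normal, so consecutive eyes differ in
    azimuth by pitch_mm / radius_mm radians.
    """
    step = pitch_mm / radius_mm  # angular spacing in radians
    return [math.degrees(i * step) % 360.0 for i in range(n_sensors)]


def sensors_for_full_circle(pitch_mm, radius_mm):
    """Smallest number of eyes whose pointing directions wrap a full turn."""
    return math.ceil(2 * math.pi * radius_mm / pitch_mm)


print(sensors_for_full_circle(2.0, 10.0))  # 32
print([round(a, 1) for a in viewing_directions(4, 2.0, 10.0)])  # [0.0, 11.5, 22.9, 34.4]
```

A tighter bend (smaller R) spreads the eyes over a wider range of directions, which is why a flexible strip can turn a row of identical sensors into a wide-field motion detector.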

In future, the sensor could be embedded in the surface of soft and flexible robots, or in smart clothing to help blind people "see" obstacles. It could also be used in medical endoscopes to enable more precise operations. Because it can be arranged in layers and adapted to arbitrary shapes, it lends itself to a wide range of applications.

Reference

R. Pericet-Camara, M.K. Dobrzynski, R. Juston, S. Viollet, R. Leitel, H.A. Mallot, D. Floreano, “An artificial elementary eye with optic flow detection and compositional properties,” J. R. Soc. Interface, vol. 12: 20150414, 2015. http://dx.doi.org/10.1098/rsif.2015.0414
