Robohub.org

Gazebo rendering abstraction

During his internship with OSRF, Mike Kasper developed a new ignition-robotics rendering library. Its key feature is an abstract render-engine interface for building and rendering scenes, which allows the library to employ multiple underlying render engines.

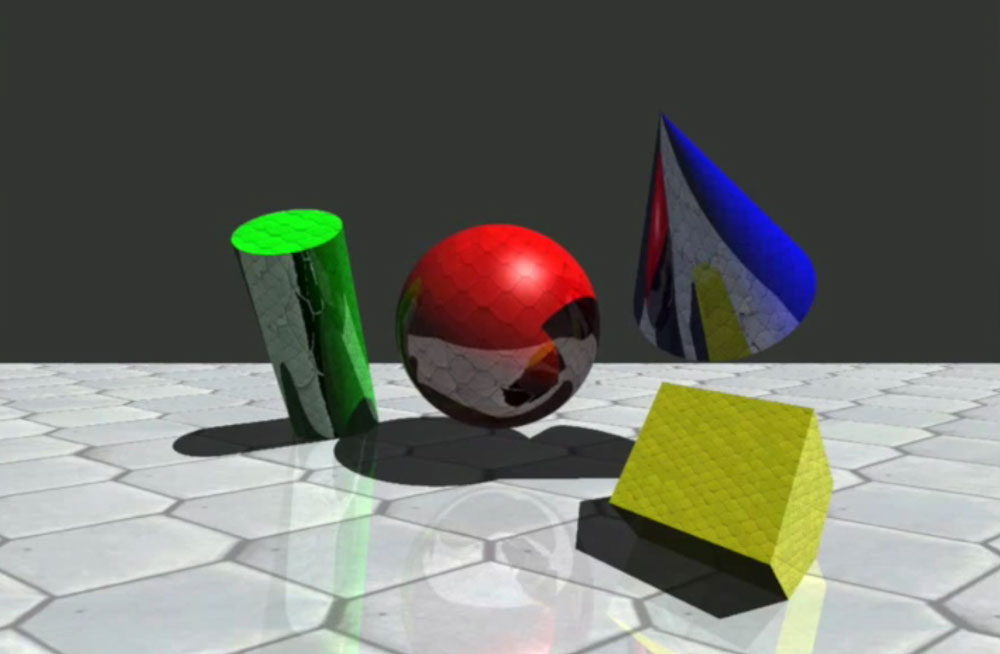

The motivation for this work was to extend Gazebo’s rendering capabilities to provide near photo-realistic imagery for simulated camera sensors. Such imagery could then be used for the development and testing of perception algorithms.

Because Gazebo currently employs the Object-Oriented Graphics Rendering Engine (OGRE), an OGRE-based implementation has been added to the ignition-rendering library. A second render engine, built on NVIDIA’s OptiX ray-tracing engine, has also been implemented. The current OptiX-based render engine employs simple ray-tracing techniques, but will employ physically based path-tracing techniques in the future to generate photo-realistic imagery.
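To make the idea of an abstract render-engine interface more concrete, here is a minimal C++ sketch of how one interface can sit in front of several engines. Every name in it (`RenderEngine`, `RasterEngine`, `RayTraceEngine`, `EngineRegistry`, `MakeDefaultRegistry`) is hypothetical and chosen for illustration only; none is taken from the ignition-rendering source, and the two engine classes are simple stand-ins that return strings rather than calling OGRE or OptiX.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical abstract interface. Client code depends only on this class,
// never on a concrete engine.
class RenderEngine {
public:
  virtual ~RenderEngine() = default;
  virtual std::string Name() const = 0;
  // Returns a text description of the frame instead of real pixels.
  virtual std::string Render(const std::string& scene) const = 0;
};

// Stand-in for a rasterization backend such as an OGRE-based engine.
class RasterEngine : public RenderEngine {
public:
  std::string Name() const override { return "ogre"; }
  std::string Render(const std::string& scene) const override {
    return "rasterized " + scene;
  }
};

// Stand-in for a ray-tracing backend such as an OptiX-based engine.
class RayTraceEngine : public RenderEngine {
public:
  std::string Name() const override { return "optix"; }
  std::string Render(const std::string& scene) const override {
    return "ray-traced " + scene;
  }
};

// Maps an engine name to a factory so engines can be selected at run time.
class EngineRegistry {
public:
  using Factory = std::function<std::unique_ptr<RenderEngine>()>;

  void Register(const std::string& name, Factory factory) {
    assert(static_cast<bool>(factory));  // an empty factory is a caller bug
    factories_[name] = std::move(factory);
  }

  // Returns nullptr when no engine is registered under the given name.
  std::unique_ptr<RenderEngine> Create(const std::string& name) const {
    auto it = factories_.find(name);
    if (it == factories_.end()) {
      return nullptr;
    }
    return it->second();
  }

private:
  std::map<std::string, Factory> factories_;
};

// Builds a registry holding both illustrative engines.
EngineRegistry MakeDefaultRegistry() {
  EngineRegistry registry;
  registry.Register("ogre", [] { return std::make_unique<RasterEngine>(); });
  registry.Register("optix", [] { return std::make_unique<RayTraceEngine>(); });
  return registry;
}
```

With a layout like this, calling code would request an engine by name, for example `MakeDefaultRegistry().Create("optix")`, and then work only through the `RenderEngine` pointer, so the same scene-building code could run unchanged on either backend. The actual library may organize this quite differently.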

The following videos give an overview of the library’s current capabilities:

Source code for the ignition-rendering library is available here:

https://bitbucket.org/
