Robohub.org

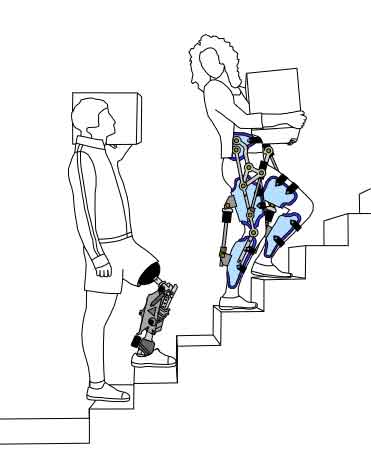

Control strategies for active lower extremity prosthetics and orthotics

Knee orthosis as worn by first author Mike Tucker (photo: ReLab, ETHZ and Alain Herzog).

Robotic prosthetics and orthotics (P/Os) have advanced considerably in recent years, yet controlling these devices so that they act in accordance with the user's intention remains an open problem for roboticists.

A team from four labs within NCCR Robotics, spanning ETH Zurich and EPFL (ReLab, ETH Zurich; LSRO, EPFL; SMS, ETH Zurich; and CNBI, EPFL), has recently published a joint paper in the Journal of NeuroEngineering and Rehabilitation in which ten experts from the field review the state of the art in control approaches for active lower-limb P/Os. They argue that for P/Os to become fully viable and advance further, they must be treated as part of a framework in which the control system is integrated with the user's sensorimotor system.

Traditionally, orthotics and prosthetics have been treated as separate fields, with hardware and controllers developed for a specific part of the body (e.g. the knee, ankle or hip). By surveying research on all joints of the lower limbs across both fields, rather than a small subset, the authors hope that future developments will blur the lines between the fields and lead to technologies that can ultimately restore walking to people with physical or neurological impairments.

This open-access review summarizes where the state of the art stands today and what work remains to be done, providing valuable background on the field.

The authors have been working to improve communication between research groups and to promote a more holistic approach to P/O devices. One of the co-authors is organizing next year's Cybathlon, in which teams composed of bionic technology developers and a pilot will compete in one of six races. The competition's ultimate aim is to increase dialogue between academia, industry and end users through friendly competition.
