Robohub.org

Robot co-workers in the operating room

Robots won’t steal our jobs, but they will help us carry out tiring and dangerous tasks, leaving humans free to focus on creative and teaching activities where imagination and sensitivity are needed. Think about how robots can help in nuclear plant management and decommissioning, bomb disposal, and humanitarian demining. In healthcare, for example in the operating room, robots already work in close cooperation with the surgeon, helping to perform interventions more accurately and in a more comfortable, less invasive way.

What else can a robot help the surgeon do? I put this question to my friend, neurosurgeon Dr Francesco Cardinale. He shared his thoughts on having a robotic assistant, one that is perfectly trained to understand which surgical tool is needed before the surgeon asks for it. Imagine a robotic “personal” scrub nurse who does not get tired during long and delicate surgical interventions!

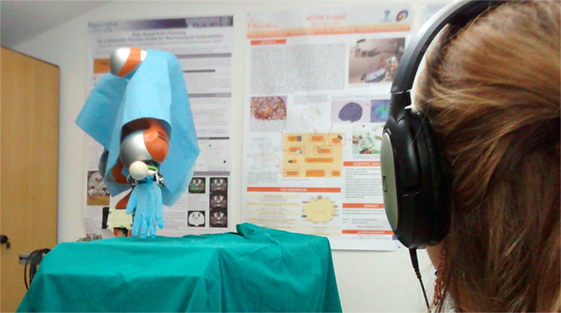

The human-like and the non-human-like trajectories were performed in a random order (10 human-like and 10 non-human-like). Photo: Courtesy of Dr. Elena De Momi, Politecnico di Milano.

But is this possible? Can a robot play the role of the scrub nurse, passing the right surgical tool at the right moment, in the exact place and with the proper orientation, so that the surgeon is not distracted from the surgery? In a paper we recently published in Frontiers in Robotics and AI, we looked into this problem and came away with some interesting findings.

First of all, the robotic scrub nurse has to move in a natural way. It has already been shown that humans prefer interacting with a robot whose movements resemble those of a human arm. Human motion can be characterized by simple rules: the velocity of reaching movements has a bell-shaped profile, there is a specific relationship between velocity and trajectory curvature, and the intrinsic redundancy of the human arm is resolved by optimizing performance criteria. The question we wanted to answer was: could we teach the robot to adopt the same motor strategy?
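To make the bell-shaped velocity profile concrete, here is a minimal sketch in Python. It assumes the classic minimum-jerk model of point-to-point reaching, which is a common textbook description of such profiles and not necessarily the model used in our paper. The function names and the example reach (0.4 m in 2 s) are hypothetical, and the velocity-curvature relationship is not covered here.

```python
def min_jerk_position(tau):
    """Normalized position in [0, 1] for normalized time tau in [0, 1]."""
    return 10 * tau**3 - 15 * tau**4 + 6 * tau**5


def min_jerk_speed(tau):
    """Derivative of min_jerk_position with respect to tau (bell-shaped)."""
    return 30 * tau**2 - 60 * tau**3 + 30 * tau**4


def reach_profile(start, goal, duration, steps=10):
    """Sample (time, position, speed) along a straight 1-D reach.

    start, goal: positions in meters; duration: seconds (assumed units).
    """
    distance = goal - start
    samples = []
    for i in range(steps + 1):
        tau = i / steps
        samples.append((
            tau * duration,
            start + distance * min_jerk_position(tau),
            distance / duration * min_jerk_speed(tau),
        ))
    return samples


# Hypothetical reach: 0.4 m in 2 s, sampled at 11 points.
profile = reach_profile(0.0, 0.4, 2.0)
for t, position, speed in profile:
    print(f"t={t:.1f} s  x={position:.3f} m  v={speed:.3f} m/s")
```

The speed starts at zero, rises smoothly to a single peak halfway through the movement, and falls back to zero at the target, which is the bell shape described above.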

In our lab, the Medical Robotic Section (MRSLab) at Politecnico di Milano, we recorded the motion of a human and built a neural network (a general non-linear approximation algorithm) that would act as the “controlling brain” for the robotic arm. Equipped with such a controller, the robot was trained to move towards the target as if it were a human arm reaching for the surgeon’s hand (which is waiting to be handed the proper tool).
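For readers curious about what "a general non-linear approximation algorithm" looks like in practice, here is a toy sketch in Python. It is not the controller from our paper: the architecture (one hidden layer of 8 tanh units), the learning rate, and all function names are assumptions chosen for illustration, and synthetic minimum-jerk samples stand in for recorded human motion.

```python
import math
import random


def min_jerk(tau):
    """Synthetic stand-in for recorded human motion (an assumption)."""
    return 10 * tau**3 - 15 * tau**4 + 6 * tau**5


def init_network(hidden=8, seed=0):
    """One hidden tanh layer, one input (time) and one output (position)."""
    rng = random.Random(seed)
    return {
        "w1": [rng.uniform(-1, 1) for _ in range(hidden)],
        "b1": [rng.uniform(-1, 1) for _ in range(hidden)],
        "w2": [rng.uniform(-1, 1) for _ in range(hidden)],
        "b2": 0.0,
    }


def forward(net, x):
    """Return the network output and the hidden activations for input x."""
    h = [math.tanh(w * x + b) for w, b in zip(net["w1"], net["b1"])]
    y = sum(w * a for w, a in zip(net["w2"], h)) + net["b2"]
    return y, h


def mean_squared_error(net, data):
    """Average squared difference between prediction and target."""
    return sum((forward(net, x)[0] - t) ** 2 for x, t in data) / len(data)


def train(net, data, epochs=1000, lr=0.05):
    """Full-batch gradient descent on the mean squared error."""
    n, hidden = len(data), len(net["w1"])
    for _ in range(epochs):
        g_w1, g_b1, g_w2 = [0.0] * hidden, [0.0] * hidden, [0.0] * hidden
        g_b2 = 0.0
        for x, t in data:
            y, h = forward(net, x)
            err = 2 * (y - t) / n  # gradient of the loss w.r.t. the output
            g_b2 += err
            for j in range(hidden):
                g_w2[j] += err * h[j]
                back = err * net["w2"][j] * (1 - h[j] ** 2)  # through tanh
                g_w1[j] += back * x
                g_b1[j] += back
        for j in range(hidden):
            net["w1"][j] -= lr * g_w1[j]
            net["b1"][j] -= lr * g_b1[j]
            net["w2"][j] -= lr * g_w2[j]
        net["b2"] -= lr * g_b2
    return net


# Training pairs: normalized time -> normalized position along the reach.
data = [(i / 20, min_jerk(i / 20)) for i in range(21)]
net = init_network()
loss_before = mean_squared_error(net, data)
train(net, data)
loss_after = mean_squared_error(net, data)
print(f"mean squared error: {loss_before:.4f} -> {loss_after:.4f}")
```

A real handover controller would take richer inputs, such as the target position of the surgeon's hand, and would be trained on actual motion recordings; the sketch only shows how a small network can learn a smooth trajectory from examples.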

But what else is needed? Certainly more intelligence: the robot has to understand what the surgeon is doing and which surgical tool will be required next. This is what we are currently working on, by combining computer vision and artificial intelligence techniques embedded in a physical system.

The path towards the introduction of robotic nurses is long, but our goal is to make our robots truly helpful in order to improve the quality, safety, and efficacy of surgical interventions.

For more information, please read our research paper:

Elena De Momi, Laurens Kranendonk, Marta Valenti, Nima Enayati and Giancarlo Ferrigno (2016). A Neural Network-Based Approach for Trajectory Planning in Robot–Human Handover Tasks. Front. Robot. AI. doi:10.3389/frobt.2016.00034
