Robohub.org

Buzz: A novel programming language for heterogeneous robot swarms

A new programming language designed specifically for robot swarms, Buzz is based on the idea that a developer must be allowed to pick the most comfortable approach to behavioral design – whether that’s bottom-up or top-down.

Designing swarm robotics systems

Swarm robotics is a branch of robotics that studies the coordination of large teams of robots. Swarm robotics systems have the potential to offer solutions for large-scale application scenarios that require reliable, scalable and autonomous behaviors.

Designing the behavior of robot swarms is difficult. The number of interactions among the robots increases steeply with the swarm size, making it difficult to predict the dynamics of the group and to pinpoint the causes of errors.

There are two general approaches to the design of swarm behaviors: the first is bottom-up, focusing on individual behaviors and low-level interactions; the second is top-down, meaning that the developer treats the swarm as a single entity.

Both approaches have strengths and weaknesses. The bottom-up approach ensures full control of the swarm, but it also exposes the developer to unnecessary details, making development slow and error-prone. Conversely, the top-down approach allows for fast prototyping, but prevents developers from fine-tuning swarm behaviors.

Buzz concepts

Buzz is a new programming language we designed specifically for robot swarms. Buzz is based on the idea that an effective language for robot swarms must allow the developer to pick the most comfortable approach to behavior development – bottom-up or top-down.

The syntax and semantics of Buzz are inspired by well-known programming languages such as JavaScript, Python and Lua. We made this decision to keep the learning curve short. Like these languages, Buzz provides familiar constructs such as branching conditions, loops and function declarations.

Buzz also includes a number of constructs specifically designed for swarm-level development.

The “swarm” construct allows a developer to split the robots into multiple groups and assign a specific task to each. Swarms can be created, disbanded, and modified dynamically.
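To make the idea concrete, here is a minimal Python sketch of dynamic group membership. It is not Buzz code and does not reproduce the Buzz API: the names `Robot`, `select`, `members` and `run_in_swarm` are hypothetical, and the whole swarm is simulated in a single process for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Robot:
    robot_id: int
    # Ids of the swarms this robot currently belongs to (hypothetical model).
    swarms: set = field(default_factory=set)


def select(robots, swarm_id, condition):
    """Each robot joins swarm_id if condition(robot) holds and leaves it otherwise."""
    for robot in robots:
        if condition(robot):
            robot.swarms.add(swarm_id)
        else:
            robot.swarms.discard(swarm_id)


def members(robots, swarm_id):
    """Return the ids of the robots that currently belong to swarm_id."""
    return [robot.robot_id for robot in robots if swarm_id in robot.swarms]


def run_in_swarm(robots, swarm_id, task):
    """Run task only on the members of swarm_id and collect the results."""
    return {r.robot_id: task(r) for r in robots if swarm_id in r.swarms}


robots = [Robot(i) for i in range(6)]
EVEN, ODD = 1, 2  # arbitrary swarm ids chosen for this example

# Split the robots into two groups.
select(robots, EVEN, lambda r: r.robot_id % 2 == 0)
select(robots, ODD, lambda r: r.robot_id % 2 == 1)
even_members = members(robots, EVEN)

# Assign a task to one group only.
odd_tasks = run_in_swarm(robots, ODD, lambda r: f"robot {r.robot_id} patrols")

# Disband a group: no robot satisfies the condition any more.
select(robots, EVEN, lambda r: False)
```

In this sketch membership is just a set of ids stored on each robot, which is why creating, modifying and disbanding a group are all the same operation with a different condition.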

The “neighbors” construct captures an important concept in swarm systems: locality. In nature, individuals interact directly and only with nearby swarm-mates. Interactions include communication, obstacle avoidance or leader following. The neighbors construct provides functions to mimic these mechanisms.
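The notion of locality can be illustrated with a short Python sketch. It is a simulation under stated assumptions, not the Buzz neighbors API: the function `neighbors_of`, the `comm_range` parameter and the 2D positions are all hypothetical, and a robot counts as a neighbor simply when it lies within a fixed communication range.

```python
import math


def neighbors_of(robot_id, positions, comm_range):
    """Return {neighbor_id: distance} for every other robot within comm_range."""
    x, y = positions[robot_id]
    result = {}
    for other_id, (ox, oy) in positions.items():
        if other_id == robot_id:
            continue  # a robot is not its own neighbor
        distance = math.hypot(ox - x, oy - y)
        if distance <= comm_range:
            result[other_id] = distance
    return result


# Hypothetical robot positions in meters.
positions = {0: (0.0, 0.0), 1: (1.0, 0.0), 2: (0.0, 2.0), 3: (10.0, 10.0)}

# Robot 0 only perceives the robots that are close enough.
near = neighbors_of(0, positions, comm_range=3.0)

# A typical local computation: find the closest neighbor, e.g. to follow it.
closest = min(near, key=near.get)
```

Robot 3 is out of range, so it never appears in robot 0's computation; every decision is based on nearby robots only.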

Buzz also offers a construct that allows an entire robot swarm to agree on a set of (key, value) pairs. This construct is named “virtual stigmergy,” after the environment-mediated interaction process displayed by nest-building insect colonies.
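One way to picture how a swarm can converge on shared (key, value) pairs using only local exchanges is sketched below in Python. This is an illustration under assumptions, not the Buzz implementation: the class `LocalTable`, the per-key timestamp, and the rule that ties go to the higher robot id are all choices made for this example.

```python
class LocalTable:
    """A robot's local copy of the shared table (hypothetical model)."""

    def __init__(self, robot_id):
        self.robot_id = robot_id
        self.entries = {}  # key -> (timestamp, writer_id, value)

    def put(self, key, value):
        # Each write bumps the timestamp of the key it touches.
        timestamp = self.entries.get(key, (0, 0, None))[0] + 1
        self.entries[key] = (timestamp, self.robot_id, value)

    def get(self, key):
        entry = self.entries.get(key)
        return None if entry is None else entry[2]

    def merge(self, other):
        # Keep the newer entry; on equal timestamps the higher writer id wins.
        for key, entry in other.entries.items():
            mine = self.entries.get(key)
            if mine is None or entry[:2] > mine[:2]:
                self.entries[key] = entry


# Four robots in a chain: each one only talks to its direct neighbors.
tables = [LocalTable(i) for i in range(4)]

# Two robots at opposite ends write conflicting values at the same time.
tables[0].put("target", "red")
tables[3].put("target", "blue")

# Repeated local exchanges spread the entries along the chain.
for _ in range(len(tables)):
    for i in range(len(tables) - 1):
        tables[i].merge(tables[i + 1])
        tables[i + 1].merge(tables[i])

agreed = [table.get("target") for table in tables]
```

After a few rounds every local copy holds the same value, even though no robot ever communicated with the whole swarm at once.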

The run-time platform of Buzz is designed to be lightweight (it occupies just 12 KB), efficient, and extensible. In particular, developers can interface the Buzz run-time platform with other frameworks such as the Robot Operating System (ROS) and add new commands and constructs to fit diverse robots.

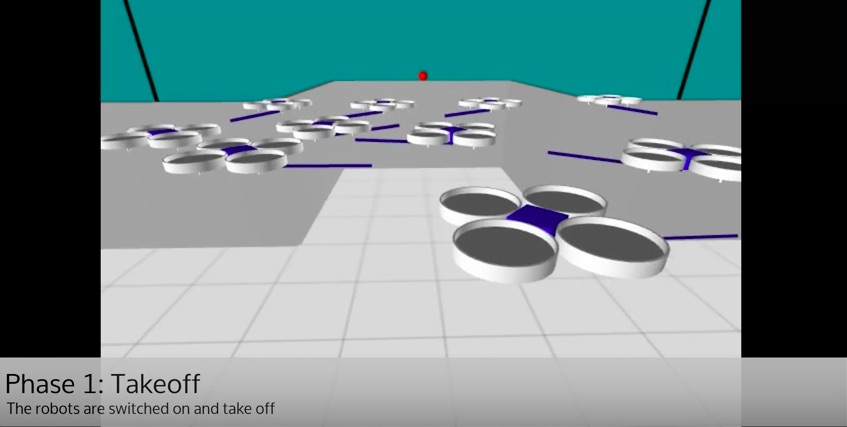

The video shows a swarm of Spiri robots engaged in a detection task. The environment contains two colored objects – one red, one blue – that the Spiris must find using their frontal camera. Some Spiris can see the objects directly, while others cannot, either because their view is obstructed by other robots or because they are too far from the objects. The aim of this behavior is to divide the robots into two swarms, one for each object to detect. The experiment is run using the ARGoS multi-robot simulator.

In the first phase, the robots take off. This phase ends when all robots have reached the target altitude. The robots use virtual stigmergy to inform the others when they are ready.
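The take-off phase behaves like a barrier: nobody moves on until everyone has reported in. A minimal Python sketch of that logic follows. It is a simplification, not the code used in the experiment: a plain dictionary stands in for the shared table (assumed to be already synchronized across robots), and `TARGET_ALTITUDE`, the climb rates and `all_ready` are hypothetical.

```python
TARGET_ALTITUDE = 2.0  # meters; hypothetical value for this example


def all_ready(ready_table, swarm_size):
    """The barrier opens once every robot has published its ready flag."""
    return sum(1 for flag in ready_table.values() if flag) == swarm_size


# Hypothetical robots that climb at different speeds (meters per step).
altitudes = {0: 0.0, 1: 0.0, 2: 0.0}
climb_rates = {0: 1.0, 1: 0.5, 2: 0.25}

ready_table = {}  # stands in for the shared (key, value) table
steps = 0

while not all_ready(ready_table, len(altitudes)):
    steps += 1
    for robot_id in altitudes:
        # Climb, but never overshoot the target altitude.
        altitudes[robot_id] = min(
            TARGET_ALTITUDE, altitudes[robot_id] + climb_rates[robot_id]
        )
        if altitudes[robot_id] >= TARGET_ALTITUDE:
            ready_table[robot_id] = True  # tell the others we are ready
```

The phase lasts as long as the slowest robot needs; faster robots simply hover at the target altitude until the last flag appears.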

In the second phase, the robots individually search for the objects by rotating on the spot. When a robot finds an object, the simulator draws a cyan line. Virtual stigmergy is used again by the robots to inform the others whether or not an object was found after a complete rotation.

In the third phase, the robots that saw an object use virtual stigmergy to share information about it, such as distance and color. The robots that saw no objects use this information to pick one of the two objects.
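The article does not spell out how a robot that saw nothing chooses between the two objects, so the Python sketch below assumes one simple rule: pick the object that currently has the fewest robots assigned to it. The function `assign_objects`, the sighting format and the balancing rule are all assumptions made for illustration, and the sketch expects at least one sighting.

```python
def assign_objects(sightings, all_robot_ids):
    """Assign every robot to an object color.

    sightings maps robot_id -> (color, distance) for robots that saw an object.
    The distance is part of the shared record but is not used by this rule.
    """
    # Robots that saw an object stay with the object they saw.
    assignment = {rid: color for rid, (color, _distance) in sightings.items()}
    colors = sorted({color for color, _distance in sightings.values()})

    for rid in sorted(all_robot_ids):
        if rid in assignment:
            continue
        # Assumed rule: join the object with the fewest robots so far.
        counts = {
            color: sum(1 for chosen in assignment.values() if chosen == color)
            for color in colors
        }
        assignment[rid] = min(colors, key=lambda color: counts[color])
    return assignment


# Hypothetical shared information: three of six robots saw an object.
sightings = {0: ("red", 3.2), 1: ("red", 4.0), 2: ("blue", 2.5)}
assignment = assign_objects(sightings, range(6))

red_group = sorted(rid for rid, color in assignment.items() if color == "red")
blue_group = sorted(rid for rid, color in assignment.items() if color == "blue")
```

Because every robot reads the same shared information and applies the same deterministic rule, all robots arrive at the same split without a central coordinator.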

In the fourth phase, two swarms are created – one for each object. The “neighbors” construct is used to separate the robots according to their swarm membership.

Conclusion

The possibility of expressing algorithms both in a bottom-up and in a top-down fashion allows Buzz developers to encode complex autonomous swarm behaviors in a concise way.

For this reason, Buzz has the potential to become an enabler for future research on real-world swarm robotics systems. Currently, no standardized platform exists that allows researchers to compare, share and reuse swarm behaviors. As a result, development inevitably involves re-coding recurring swarm behaviors. The design of Buzz is motivated by the need to overcome this state of affairs. We hope that Buzz will aid the growth of the swarm robotics field.

Future work on Buzz will involve several activities. First, we will integrate the run-time into multiple robotics platforms of different kinds, such as ground-based and aerial robots. Second, we will create a library of well-known swarm behaviors, which will be offered open-source to practitioners as part of the Buzz distribution. Finally, we will tackle the design of general approaches to swarm behavior debugging and fault detection.

Buzz is released as open-source software under the MIT license. It can be downloaded at http://the.swarming.buzz/.
