Robohub.org

Frank Tobe on “What were the highlights at IROS/iREX this year?”

Two images remain in my mind from IROS 2013 last week in Tokyo: the respect shown for Professor Emeritus Mori and his charting of the uncanny valley in relation to robotics, and the need for a Watson-type synthesis of all the robotics-related scientific papers produced every year.

Let me explain.

Almost all of the presentations at IROS were abstract and technical, except for the discussion of Prof. Mori’s Uncanny Valley theory. First, he was there in person and described how he came to observe the uncanny valley in different situations and circumstances. Second, all of the presenters and the audience were respectful of Prof. Mori’s work, his theory, and him as a person. Third, and most interesting to me, each of the other speakers in this special lecture session described how the uncanny valley theory was relevant in different settings and disciplines: in art, philosophy and psychology; in the works of David Hanson and Hiroshi Ishiguro (both of whom were there); and in medicine, prosthetics and robotics in general. To me it was a reminder that robotics crosses sciences and connects with humans in many different forms. This tribute presentation brought those personal relationships and the breadth of their reach to the forefront, away from the abstract, theoretical and mechanical side of IROS.

In this video by IEEE Spectrum, filmed just outside the room where the session was held, one can clearly see the multidisciplinary, psychological and philosophical aspects of the theory:

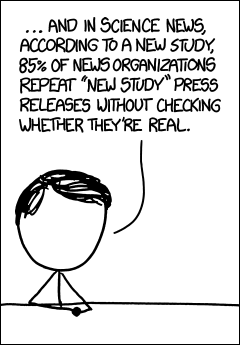

Worse, 90% of scientists don’t even know whether their research is “new” or not.

Ever since I learned of the IBM Watson Jeopardy project, I have been fascinated by its possibilities for practical applications. IBM is on that trail as well and is using Watson to help with medical diagnoses and with legal research and briefing. My idea is to get the NSF and IEEE (and other organizations) to commission a Watson project to synthesize robotics and AI-related science papers into a meaningful resource for all to use. At present, so many papers are published that a researcher cannot possibly read them all. Consequently, we don’t even know what we already know. With Watson, we could know, and we could redirect research activities truly into the unknown without reinventing things over and over.
