Robohub.org

Researchers use single joystick to control swarm of RC robots

What can you do with 12 RC robots all slaved to the same joystick remote control? Common sense might say you need 11 more remotes, but our video demonstrates you can steer all the robots to any desired final position by using an algorithm we designed. The algorithm exploits rotational noise: each time the joystick tells the robots to turn, every robot turns a slightly different amount due to random wheel slip. We use these differences to slowly push the robots to goal positions. The current algorithm is slow, so we’re designing new algorithms that are 200x faster. You can help by playing our online game: www.swarmcontrol.net.

The algorithm extends to any number of robots; this video shows a simulation with 120 robots and a more complicated goal pattern.

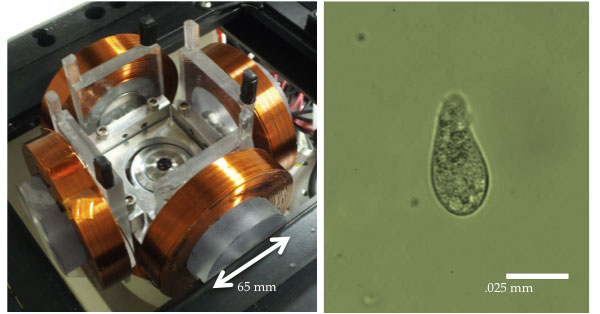

Our research is motivated by real-world challenges in microrobotics and nanorobotics, where often all the robots are steered by the same control signal (IROS 2012 paper). Our colleagues Yan Ou and Agung Julius at RPI and Paul Kim and MinJun Kim at Drexel use an external magnetic field to steer single-celled protozoa swimming in a Petri dish. The same magnetic field is applied to every protozoan. We want controllers that steer them to do useful tasks such as targeted drug delivery and mobile sensing.

Other examples include bacteria that move toward a light source (phototaxis), single-celled organisms attracted by a chemical source (chemotaxis), microrobots driven by an external magnetic field (magmites) or capacitive charge (scratch-drive robots), and synthetic molecules with light-driven motors (nanocars).

How it works

To emulate micro- or nanorobots, our robots are programmed to behave as simple remote control cars, all tuned to listen to the same frequency.

We can then either drive the robots around with a simple joystick, or let a computer apply the control. Either way, the commands are the same, and consist merely of “go forwards/backwards for x seconds” or “turn left for 2 seconds”. The computer has an advantage over human players: using an overhead camera and a printed AprilTag barcode attached to each robot, it can precisely measure the position and orientation of every robot and compute the position error.

Our control law is globally asymptotically stable, which means we can start the robots in any configuration and they will be guided to our desired formation. In the video, the robots start in a rectangle and are then guided to form the letter ‘R’. Next, 120 simulated robots start forming the word ‘Robotics’ and are guided to form our university logo and beloved owl mascot.

The math

Our initial work, with Tim Bretl at the University of Illinois, investigated a parallel problem: robust, open-loop control of a robot with unknown parameters. We chose two classical robot platforms to demonstrate our approach, the nonholonomic unicycle and the plate-ball manipulator.

The nonholonomic unicycle is a canonical model for mobile robotics, and can model robots including Roombas, tanks and cars. It has two inputs: forward speed and turning rate. It is easy to design an open-loop input sequence to steer the robot if the wheel size is known, but if the wheel size is unknown that same input sequence is scaled by the wheel size and can move the robot to drastically different positions (as shown in the video below). In our paper we showed how to generate open-loop input sequences that are robust to an unknown wheel size. The key insight is that the sequence is designed to move an imaginary continuum of robots, containing every possible wheel size, from start to goal. This steering algorithm is based on piecewise-constant inputs and Taylor series approximations. Taylor series approximations give us a clear method for increasing precision: if we want the robot continuum to end closer to the goal, we simply increase the order of the Taylor series approximation.
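To make the wheel-size problem concrete, here is a minimal sketch that runs one open-loop command sequence on unicycles with different wheel scales. It assumes the unknown wheel size multiplies both the forward speed and the turning rate, and that each command either turns in place or drives straight. The names `run_sequence`, `COMMANDS`, and `wheel_scale` are ours, chosen for illustration; this is not the robust steering algorithm from the paper, only a demonstration of why a naive sequence fails.

```python
import math

# Piecewise-constant commands: (kind, rate, duration in seconds).
# "turn" rates are in rad/s, "drive" rates in m/s (nominal, for wheel_scale = 1).
COMMANDS = [
    ("turn", math.pi / 2, 1.0),   # nominally a 90 degree left turn
    ("drive", 1.0, 1.0),          # nominally 1 m forward
]

def run_sequence(commands, wheel_scale):
    """Integrate a unicycle from the origin; wheel_scale scales every input."""
    x = y = theta = 0.0
    for kind, rate, duration in commands:
        if kind == "turn":
            theta += wheel_scale * rate * duration
        else:
            distance = wheel_scale * rate * duration
            x += distance * math.cos(theta)
            y += distance * math.sin(theta)
    return x, y, theta

if __name__ == "__main__":
    for scale in (0.5, 1.0, 1.5):
        x, y, theta = run_sequence(COMMANDS, scale)
        print(f"scale={scale}: x={x:.3f}, y={y:.3f}, heading={math.degrees(theta):.1f} deg")
```

With a scale of 1 the robot ends at roughly (0, 1), while a robot with half-size wheels turns only 45 degrees and travels half as far, so the same inputs leave it somewhere else entirely.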

We applied a similar approach to the plate-ball manipulator, a canonical model for robotic manipulation by rolling. In the classical version of this system, a ball is held between two parallel plates and manipulated by maneuvering the upper plate while holding the lower plate fixed. The ball can be brought to any position and orientation through translations of the upper plate. The two inputs are the speed of the ball center along the x-axis and its speed along the y-axis. Changing the ball size inversely affects the rate of orientation change.

Key insights

For robust control, we steered one real robot with a particular wheel size by imagining steering a continuum of all possible wheel sizes. Later, with Cem Onyuksel and Tim Bretl, we realized we could instead use the same input to steer many real robots. Our approach, based on a control-Lyapunov function, allowed us to control the position of any number of robots using the same broadcast control signal. You can test this out by purchasing several RC cars tuned to the same radio frequency. If you command the cars to go forward, all will move forward. If you command them to turn, all turn, but due to process noise each turns a slightly different amount. The algorithm, published in another 2012 IROS article, shows that rotational noise improves control, but translational noise impairs control.
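The idea of steering many robots with one broadcast signal can be sketched in a short simulation. This is an illustration under our own assumptions, not the published control law: robots are ideal unicycles, every turn command is perturbed by independent Gaussian noise, and the shared forward command is the one value that most reduces the summed squared position error V. The function name `simulate` and its parameters are hypothetical.

```python
import math
import random

def simulate(n_robots=4, steps=200, turn=0.5, noise=0.2, seed=0):
    """Return the history of V = 0.5 * sum of squared position errors."""
    rng = random.Random(seed)
    pos = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(n_robots)]
    goal = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(n_robots)]
    heading = [rng.uniform(0, 2 * math.pi) for _ in range(n_robots)]

    def cost():
        return 0.5 * sum((p[0] - g[0]) ** 2 + (p[1] - g[1]) ** 2
                         for p, g in zip(pos, goal))

    history = [cost()]
    for _ in range(steps):
        # Broadcast turn command: every robot turns a slightly different amount.
        for i in range(n_robots):
            heading[i] += turn * (1 + rng.gauss(0, noise))

        # S is the position error projected onto each heading, summed over robots.
        # Moving all robots by u changes V to V + u*S + n*u^2/2,
        # which is smallest at u = -S/n, so V never increases.
        s = sum((p[0] - g[0]) * math.cos(h) + (p[1] - g[1]) * math.sin(h)
                for p, g, h in zip(pos, goal, heading))
        u = -s / n_robots

        # Broadcast drive command: every robot moves the same distance u.
        for i in range(n_robots):
            pos[i][0] += u * math.cos(heading[i])
            pos[i][1] += u * math.sin(heading[i])
        history.append(cost())
    return history

if __name__ == "__main__":
    v = simulate()
    print(f"initial V = {v[0]:.4f}, final V = {v[-1]:.4f}")
```

Because the headings drift apart under the noisy turns, a single forward command moves each robot in a different direction, and that spread is what lets the shared input keep reducing the total error.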

Our algorithm allowed us to control the final position of n robots, but we could not control the final orientation. To understand why, consider setting a pocket watch with hour, minute, and second hands. The hour and minute hands overlap 22 times per day, every 12/11 hours (the first crossing is at 1:05:27), but the hour, minute, and second hands overlap only twice: midnight and noon. In the same way, our turning command is sent to every robot. Even steering 10 robots to point in the same direction is like flipping a coin until you get 10 heads in a row. This seemed a closed door. Then I saw Bill Amend’s FoxTrot cartoon on January 1st, 2012, where the strip’s younger brother steers 64 differential-drive robots to launch rubber darts at his older sister. The younger brother was holding a simple remote controller – just like our problem. After a day of effort, we proved we could both predict the final orientation of each robot and arbitrarily pick the final position of each robot – sufficient for attacking, surrounding, or defending targets. The video for our upcoming IROS 2013 paper illustrates this algorithm using robots equipped with laser turrets.
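The clock-hand arithmetic in the pocket watch analogy can be checked with exact fractions. The sketch below lists the 11 hour/minute overlaps in one 12-hour cycle (22 per day) and tests whether the second hand is at the same position at each one. The helper names `hand_overlaps` and `as_clock` are ours.

```python
from fractions import Fraction

HALF_DAY = 43200  # seconds in 12 hours

def hand_overlaps():
    """Return (time in seconds, second_hand_aligned) for each hour/minute overlap."""
    results = []
    for k in range(11):
        t = Fraction(HALF_DAY * k, 11)      # k-th overlap, every 12/11 hours
        hour_frac = t / HALF_DAY % 1        # hour hand position, as a fraction of a turn
        second_frac = t / 60 % 1            # second hand position, same units
        results.append((t, hour_frac == second_frac))
    return results

def as_clock(t):
    """Format seconds after 12:00:00 as h:mm:ss, dropping fractional seconds."""
    hours, rem = divmod(int(t), 3600)
    minutes, seconds = divmod(rem, 60)
    return f"{hours}:{minutes:02d}:{seconds:02d}"

if __name__ == "__main__":
    for t, all_three in hand_overlaps():
        print(as_clock(t), "all three hands aligned" if all_three else "")
```

Only the overlap at 12:00:00 also lines up with the second hand, which gives the two full alignments per day mentioned above.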

Future directions

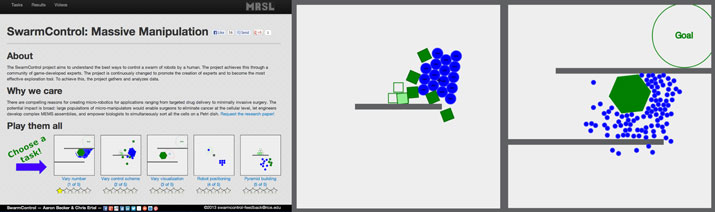

With Chris Ertel, we’ve just launched SwarmControl, an online game where players can steer a swarm of robots to complete challenges. The goal is to test several different control and visualization schemes for swarms of robots, and to gather quantitative data about which mechanisms help people work with these swarms most effectively. Secondarily, we’ve created a simple platform for publishing academic user experiments online – why settle for a sample of only a handful of undergrads? We’d like to advance the state of the art in experimentation.

All results are available as CSV or JSON from the results page. SwarmControl is an open-source project hosted at https://github.com/crertel/swarmmanipulate.git.
