Robohub.org

Making an iCub robot “clever”

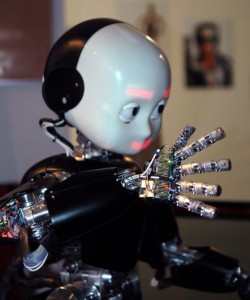

As part of the IM-CLeVeR project, the IDSIA robotics laboratory recently released a video overview of their work on the technologies, architectures and algorithms required to give an iCub robot more human-like abilities. These include the ability to reason about and manipulate its local environment and, in the process, to acquire new skills that can be applied to future problems.

The iCub robot is an open-source humanoid platform developed by the Italian Institute of Technology and used by over 20 robotics laboratories worldwide in research areas such as human-robot interaction, computer reasoning, motion planning and “socially aware robotics”.

Some of these we’ve covered on Robohub before: “From iCub to artist” and “iCub drums and crawls using bio-inspired control”.

The IM-CLeVeR project was launched in 2009 and uses the iCub as a platform to support the goal of:

“Developing a new methodology for designing robots that can cumulatively learn new skills through autonomous development based on intrinsic motivations, and reuse such skills for accomplishing multiple, complex, and externally-assigned tasks.”

In the following 13-minute video, researchers from the IDSIA laboratory, an IM-CLeVeR project partner, describe their motivations, challenges and successes in fields such as computer vision, motion planning, “reflex” behaviors and reinforcement learning.

For more information on the project, consult the laboratory’s other videos and technical releases, or visit the IM-CLeVeR homepage.

A list of iCub-related projects can be found here.

tags: EU Project, iCub, Learning, video