Robohub.org

Graphene-based sensor to improve robot touch

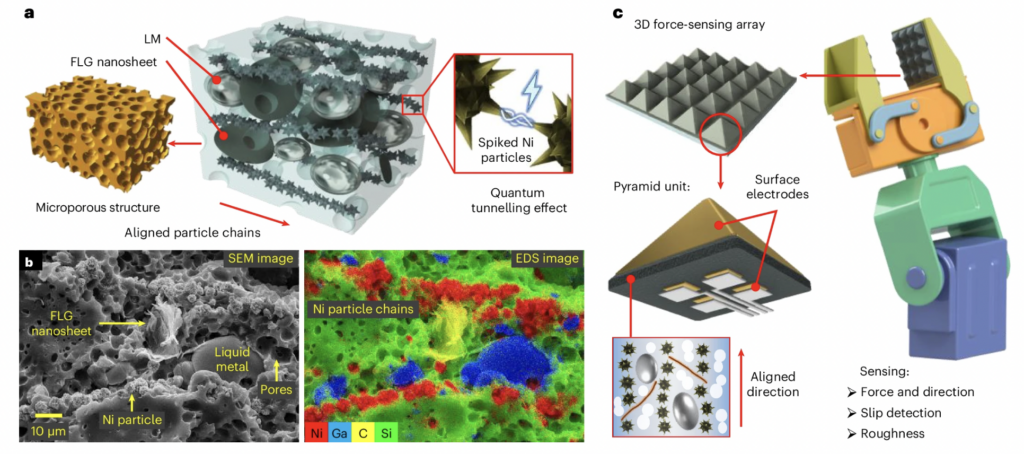

Schematic showing the materials used in the sensor and the sensing array on a robotic manipulator. Figure from Multiscale-structured miniaturized 3D force sensors. Reproduced under a CC BY 4.0 licence.

Robots are becoming increasingly capable in vision and movement, yet touch remains one of their major weaknesses. Now, researchers have developed a miniature tactile sensor that could give robots something much closer to a human sense of touch.

The technology, developed by researchers at the University of Cambridge, is based on liquid metal composites and graphene – a two-dimensional form of carbon. The ‘skin’ allows robots to detect not just how hard they are pressing on an object, but also the direction of applied forces, whether an object is slipping, and even how rough a surface is, at a scale small enough to rival the spatial resolution of human fingertips. Their results are reported in the journal Nature Materials.

Human fingers rely on multiple types of mechanoreceptors to sense pressure, force, vibration, and texture simultaneously. Reproducing this level of multidimensional tactile perception in artificial systems is a significant challenge, especially in devices that are both small and durable enough for practical use.

“Most existing tactile sensors are either too bulky, too fragile, too complex to manufacture or unable to accurately distinguish between normal and tangential forces,” said Professor Tawfique Hasan from the Cambridge Graphene Centre, who led the research. “This has been a major barrier to achieving truly dexterous robotic manipulation.”

To overcome this, the research team developed a soft, flexible composite material that combines graphene sheets, deformable liquid-metal microdroplets, and nickel particles, all embedded in a silicone matrix.

Inspired by the microstructures found in human skin, the researchers shaped the material into tiny pyramids, some as small as 200 micrometres across. These pyramid structures concentrate stress at their tips, enabling the sensor to detect extremely small forces while maintaining a wide measurement range.

The result is a tactile sensor sensitive enough to detect a grain of sand. Compared with existing flexible tactile sensors, the new device improves on both size and detection limit by roughly an order of magnitude: it is smaller and can register weaker forces.

The sensor can also distinguish shear forces from normal pressure, a capability that allows it to detect when an object begins to slip. By measuring signals from four electrodes beneath each pyramid, the sensor can mathematically reconstruct the full three-dimensional force vector in real time.
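
To make the idea of reconstructing a force vector from four electrode signals more concrete, here is a minimal Python sketch. It is not the authors' algorithm. It assumes, hypothetically, that the four electrodes sit in a 2x2 grid under each pyramid and that, for small loads, each electrode's signal change is a linear combination of the force components (Fx, Fy, Fz). Under that assumption a 4x3 sensitivity matrix can be calibrated from known applied forces by least squares, and each new set of four readings can then be inverted, again by least squares, to recover the force. The names `calibrate`, `reconstruct` and `C_TRUE` are illustrative only.

```python
import numpy as np

# ASSUMPTION (illustrative only): four electrodes in a 2x2 grid under one
# pyramid, ordered (+x,+y), (-x,+y), (-x,-y), (+x,-y), with a linear response
#   s = C @ f
# where s is the 4-vector of signal changes and f = (Fx, Fy, Fz).


def calibrate(forces: np.ndarray, signals: np.ndarray) -> np.ndarray:
    """Estimate the 4x3 sensitivity matrix C from known loads by least squares.

    forces:  (n, 3) array of applied force vectors
    signals: (n, 4) array of the matching electrode readings
    """
    # Each row satisfies signals[i] = C @ forces[i], i.e. signals = forces @ C.T.
    c_transposed, *_ = np.linalg.lstsq(forces, signals, rcond=None)
    return c_transposed.T


def reconstruct(c: np.ndarray, s: np.ndarray) -> np.ndarray:
    """Recover (Fx, Fy, Fz) from four readings (4 equations, 3 unknowns)."""
    f, *_ = np.linalg.lstsq(c, s, rcond=None)
    return f


# Hypothetical "true" sensitivities: normal force raises all four signals,
# while shear along x or y raises one side of the grid and lowers the other.
C_TRUE = np.array([[ 1.0,  1.0, 2.0],
                   [-1.0,  1.0, 2.0],
                   [-1.0, -1.0, 2.0],
                   [ 1.0, -1.0, 2.0]])

# Synthetic calibration data with a little measurement noise.
rng = np.random.default_rng(0)
known_forces = rng.normal(size=(50, 3))
readings = known_forces @ C_TRUE.T + rng.normal(scale=1e-3, size=(50, 4))
c_est = calibrate(known_forces, readings)

f_true = np.array([0.2, -0.1, 1.5])  # mostly normal load with a small shear
f_est = reconstruct(c_est, C_TRUE @ f_true)
print(np.round(f_est, 3))  # should be close to f_true
```

With four readings and only three unknowns the system is overdetermined, so the least-squares step also averages out some measurement noise. A real device may need a non-linear or per-pyramid calibration, which this sketch does not attempt.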

In demonstrations, the team integrated the sensors into robotic grippers. The robots were able to grasp fragile objects, such as thin paper tubes, without crushing them. Unlike conventional force sensors, which rely on prior information about an object’s properties, the new system adapts in real time through slip detection.
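
The slip-reactive grasping described above can be illustrated with a deliberately simple sketch. It assumes, as one common heuristic rather than the Cambridge team's published method, that the onset of slip shows up as a sudden drop in the tangential (shear) force between consecutive samples, and it tightens the grip in small steps only when such a drop is seen, so no model of the object is required. The function names, thresholds and force trace are all hypothetical.

```python
import math

# HEURISTIC ASSUMPTION: a sudden drop in shear-force magnitude between two
# consecutive samples is treated as the onset of slip (a stick-slip signature).


def shear_magnitude(fx: float, fy: float) -> float:
    """Magnitude of the tangential force in the sensor plane."""
    return math.hypot(fx, fy)


def update_grip(grip: float, prev_shear: float, shear: float,
                drop_threshold: float = 0.05, step: float = 0.1,
                max_grip: float = 5.0) -> tuple[float, bool]:
    """Return (new_grip, slipped); tighten by `step` only when slip is detected."""
    slipped = (prev_shear - shear) > drop_threshold
    if slipped:
        grip = min(grip + step, max_grip)
    return grip, slipped


# Hypothetical (Fx, Fy) trace in newtons: shear builds up as the object is
# lifted, then drops abruptly at sample 4 when it starts to slide.
trace = [(0.0, 0.10), (0.0, 0.20), (0.0, 0.30), (0.0, 0.18), (0.0, 0.25)]

grip = 0.5  # start with a gentle grip command (arbitrary units)
prev = shear_magnitude(*trace[0])
events = []
for fx, fy in trace[1:]:
    shear = shear_magnitude(fx, fy)
    grip, slipped = update_grip(grip, prev, shear)
    events.append(slipped)
    prev = shear

print(events, round(grip, 2))
```

Starting light and tightening only when slip is detected mirrors the idea of holding fragile objects without crushing them; the threshold and step size here are placeholders that would need tuning to a real sensor's noise level.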

At even smaller scales, microsensor arrays could identify the mass, geometry, and material density of tiny metal spheres by analysing force magnitude and direction. This opens the door to applications in minimally invasive surgery or microrobotics, where conventional force sensors are far too large.
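
For the mass and density part of that demonstration, the underlying physics fits in a few lines. This is a hypothetical sketch, not the authors' analysis: it assumes a solid sphere resting still on the array, so the normal forces summed over all taxels balance its weight (the sum of F_z equals m times g), and it takes the sphere's radius as an already known input instead of estimating geometry from the force directions. The function names and example numbers (a 0.5 mm-radius sphere with a steel-like density of 7,850 kg/m³) are illustrative.

```python
import math

STANDARD_GRAVITY = 9.80665  # m/s^2


def mass_from_normal_forces(normal_forces: list[float]) -> float:
    """Mass (kg) of an object at rest: the summed normal forces (N) equal m * g."""
    return sum(normal_forces) / STANDARD_GRAVITY


def sphere_density(mass_kg: float, radius_m: float) -> float:
    """Density (kg/m^3) of a solid sphere of known radius (ASSUMED known)."""
    volume = 4.0 / 3.0 * math.pi * radius_m ** 3
    return mass_kg / volume


# Synthetic example: a 0.5 mm-radius sphere with density 7850 kg/m^3 whose
# weight is shared equally by four taxels (hypothetical readings, in newtons).
radius = 0.5e-3
true_mass = 7850 * 4.0 / 3.0 * math.pi * radius ** 3
readings = [true_mass * STANDARD_GRAVITY / 4] * 4

mass = mass_from_normal_forces(readings)
density = sphere_density(mass, radius)
print(f"mass = {mass * 1e6:.2f} mg, density = {density:.0f} kg/m^3")
```

In this example the sphere weighs about 40 micronewtons, so each of the four taxels carries roughly 10 micronewtons; recovering geometry, or handling a sphere that is rolling or pressed on, would need the force directions as well, which this sketch ignores.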

Beyond robotics, the technology could have significant implications for prosthetics. Advanced artificial limbs increasingly rely on tactile feedback to provide users with a sense of touch. Highly sensitive, miniaturised 3D force sensors could enable more natural interactions with objects, improving control, safety, and user confidence.

“Our approach shows that bulky mechanical structures or complex optics are not required to achieve high-resolution 3D tactile sensing,” said lead author Dr Guolin Yun, a former Royal Society Newton International Fellow at Cambridge, and now Professor at the University of Science and Technology of China. “By combining smart materials with skin-inspired structures, we achieve performance that comes remarkably close to human touch.”

Looking ahead, the researchers believe the sensors could be miniaturised even further, potentially below 50 micrometres, approaching the density of mechanoreceptors in human skin. Future versions may also integrate temperature and humidity sensing, moving closer to a fully multimodal artificial skin.

As robots increasingly move out of controlled factory environments and into homes, hospitals, and unpredictable real-world settings, such advances in touch could be transformative, allowing machines not just to see and act, but to truly feel.

A patent application has been filed through Cambridge Enterprise, the University’s innovation arm. The research was supported by the Royal Society, the Henry Royce Institute, and the Advanced Research and Invention Agency (ARIA). Tawfique Hasan is a Fellow of Churchill College, Cambridge.

Reference

Multiscale-structured miniaturized 3D force sensors, Guolin Yun, Zesheng Chen, Zhuo Chen, Jinrui Chen, Binghan Zhou, Mingfei Xiao, Michael Stevens, Manish Chhowalla & Tawfique Hasan, Nature Materials (2026).