Robohub.org

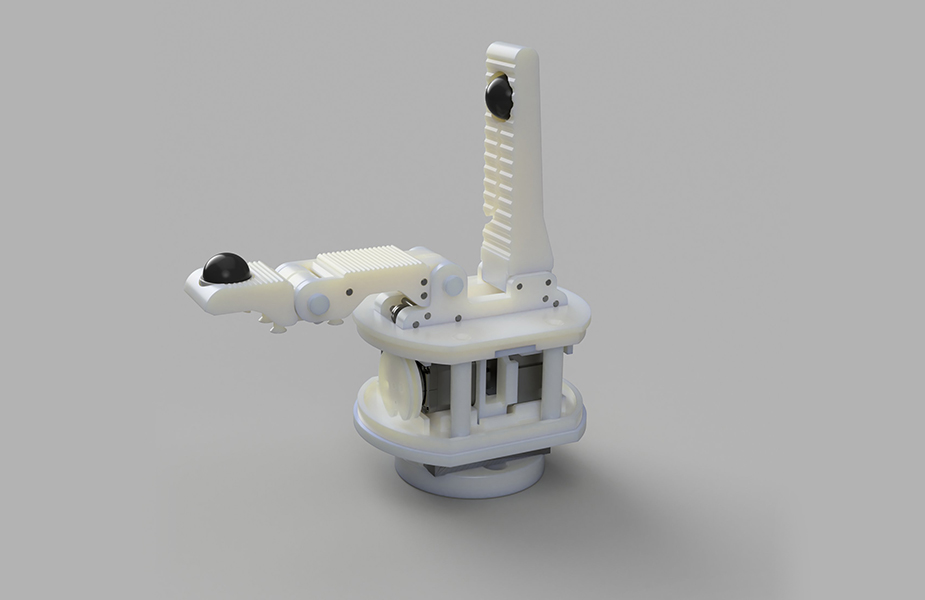

NAIST OpenHand M2S released

The NAIST OpenHand M2S was developed by a team of students as part of the school’s annual CICP project (read the blog post about it here), in which students propose and organize their own research projects. Based on the Yale OpenHand M2, the NAIST OpenHand M2S is designed for textile manipulation, sensitive grasping, and pinpoint pushing with high loads. All of the parts (except the motors, sensors, etc.) can be downloaded from here and 3D printed.

For grasping and manipulation, the hand is equipped with two 3D force sensors that act as fingertips. Using these, the hand can gently grasp textiles and let them glide through its fingers, pull them taut when tension is required, and even recognize different materials. When the hand rubs its fingertips together, it generates a force signal that lets it sense whether it has successfully grasped a textile and which kind of material it is holding.
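To make the rubbing idea more concrete, here is a minimal sketch of how a force signal recorded during one rubbing motion might be matched against reference recordings. This is not the team's method: the feature choice (mean magnitude and spread), the nearest-reference matching, the function names `rub_features` and `classify_rub`, and all of the sample values are assumptions made purely for illustration.

```python
from math import dist
from statistics import mean, pstdev


def rub_features(forces):
    """Summarize one rubbing motion as (mean magnitude, spread) of its force samples."""
    magnitudes = [abs(f) for f in forces]
    return (mean(magnitudes), pstdev(magnitudes))


def classify_rub(forces, references):
    """Return the label whose reference features lie closest to this rub's features."""
    features = rub_features(forces)
    return min(references, key=lambda label: dist(features, references[label]))


# Made-up reference recordings in arbitrary units, one per label (hypothetical data).
references = {
    "nothing grasped": rub_features([0.10, 0.12, 0.11, 0.09, 0.10]),
    "smooth fabric": rub_features([0.40, 0.45, 0.42, 0.38, 0.41]),
    "coarse fabric": rub_features([0.80, 1.20, 0.70, 1.30, 0.90]),
}

# A new, equally made-up recording to classify.
new_rub = [0.42, 0.39, 0.44, 0.40, 0.43]
label = classify_rub(new_rub, references)
print(label)
```

Including a "nothing grasped" reference lets the same comparison answer both questions from the paragraph above: whether a textile was grasped at all, and which material it most resembles. A real system would likely need richer features and many more recordings per material.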

Lastly, the hand’s rigid finger allows it to push with high loads and tuck into small openings. This is useful for tasks like making a bed, as well as for manufacturing car or airplane seats, which is part of the team’s research. Future versions of the hand will be able to pick up thin objects from a table easily without moving the robot.

You can read the conference paper here. Download the 3D files here to use the gripper in your own research.

