Robohub.org

Assistive robot operated via a brain-computer interface

Research and development of robotic assistive technologies has gained tremendous momentum in the last decade due to several factors: the maturity reached by the underlying technologies, advances in robotics and AI, and the fact that more than 700 million people have some kind of disability or handicap. For many people with mobility impairments, simple but essential tasks, such as dressing or feeding, require the assistance of dedicated caregivers. Thus, the use of devices providing independent mobility can have a large impact on their quality of life.

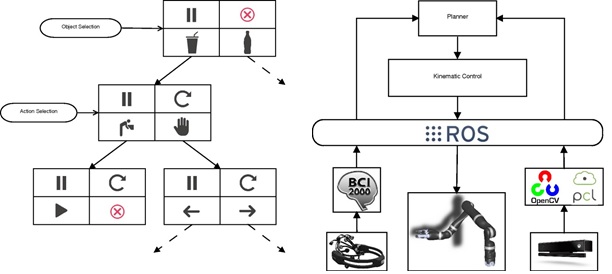

A team of researchers from the University of Cassino, under the direction of Professor Filippo Arrichiello, is developing a control architecture for assistive robotic systems operated via a Brain-Computer Interface (BCI), so that a user can operate a robot using their thoughts. The proposed software architecture relies on widely used frameworks for operating BCIs and robots (namely, BCI2000 for the BCI and ROS for the control of the robot) and integrates control, perception and communication modules developed for the application at hand.
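To make the communication step concrete, here is a minimal sketch of how a selection made on the BCI side could be packaged and handed to the robot side. The article does not describe the actual message format, so the `Command` type, its `action` and `target` fields, and the JSON encoding are all assumptions made for illustration; they are not BCI2000 or ROS APIs.

```python
import json
from dataclasses import dataclass, asdict


# Hypothetical message format: the article does not specify the real one.
@dataclass(frozen=True)
class Command:
    action: str  # e.g. "drink" or "move" (illustrative values)
    target: str  # e.g. "bottle" (illustrative value)


def encode(cmd: Command) -> str:
    """Serialize a command on the BCI side as a JSON string."""
    return json.dumps(asdict(cmd), sort_keys=True)


def decode(payload: str) -> Command:
    """Rebuild the command on the robot side; a missing field raises KeyError."""
    data = json.loads(payload)
    return Command(action=str(data["action"]), target=str(data["target"]))
```

Keeping the message a small, explicit record is one plausible design choice: the robot side can reject anything that does not name both an action and a target before the manipulator moves.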

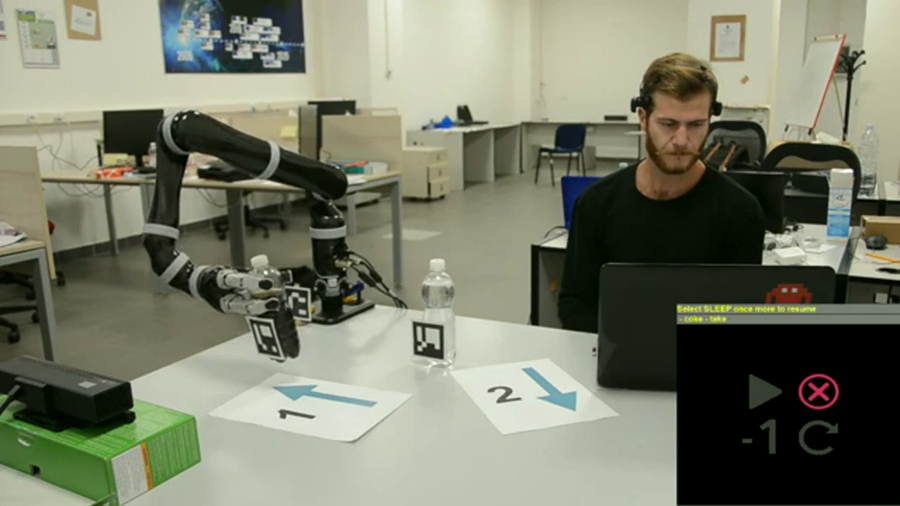

In particular, using the BCI2000 framework, they developed a Graphical User Interface (GUI) based on the P300 paradigm that lets the user select options via an Epoch+ BCI headset. The user selects objects in the scene, and the action to perform on them, by focusing attention on the corresponding flashing icons on a screen. By counting how many times the desired icon flashes, the user generates a P300 potential that is detected via the BCI and translated into a selection operation on the GUI.
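The selection logic described above can be sketched in a few lines. This is a deliberately simplified illustration, not the detection method used by the team: it assumes one EEG channel, a fixed 250 to 500 ms window after each flash, and a plain average across flashes, whereas practical P300 spellers usually rely on a trained classifier. The function names and the data layout are hypothetical.

```python
from statistics import mean


def window_mean(epoch, fs, t_start=0.25, t_end=0.50):
    """Mean amplitude of one post-flash epoch inside the assumed P300 window.

    epoch: samples recorded from the flash onset; fs: sampling rate in Hz.
    """
    lo, hi = int(t_start * fs), int(t_end * fs)
    return mean(epoch[lo:hi])


def select_icon(epochs_by_icon, fs):
    """Average the window score over all flashes of each icon and
    return (icon with the largest average, all scores)."""
    scores = {
        icon: mean(window_mean(epoch, fs) for epoch in epochs)
        for icon, epochs in epochs_by_icon.items()
    }
    return max(scores, key=scores.get), scores
```

Averaging over repeated flashes is what makes the approach workable: a single P300 response is small compared with background EEG activity, so the icon is flashed several times and the responses are combined before a decision is made.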

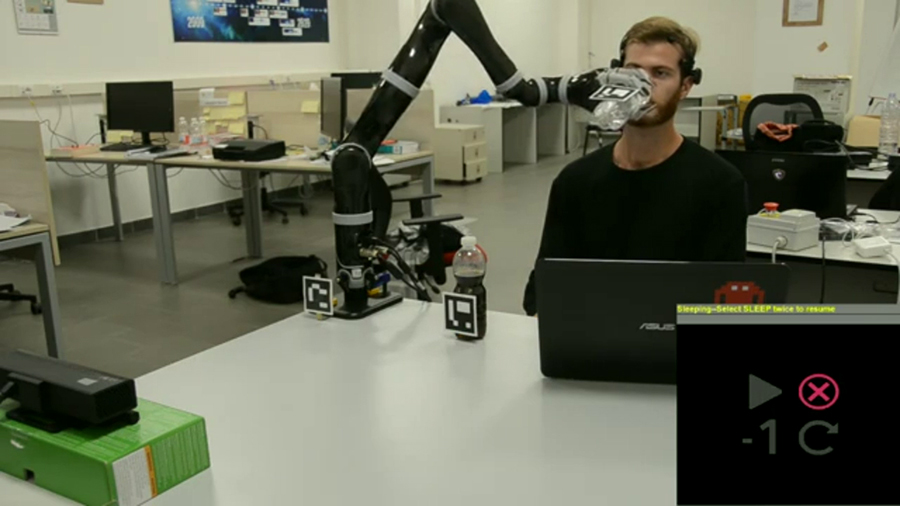

Messages describing the objects and actions selected via the GUI are automatically sent to a Jaco2, a 7-DOF lightweight robot manipulator that operates in the environment. The robot control architecture relies on a perception module that detects and localizes objects, as well as the face and mouth of the user, using an RGB-D sensor (Kinect One). The motion of the manipulator is then controlled by a closed-loop inverse kinematics algorithm that simultaneously manages multiple set-based and equality-based tasks. Preliminary experiments have been performed in which the user commands the robot via the BCI to move objects on a table or to select a bottle from which to drink.
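For readers unfamiliar with closed-loop inverse kinematics, the sketch below shows the basic idea on a toy problem. It is not the team's controller: it assumes a hypothetical 2-link planar arm rather than the 7-DOF Jaco2, handles a single equality task (end-effector position), and leaves out set-based tasks entirely. The link lengths, gain, time step and damping value are illustrative choices. Each step feeds the current position error back through a damped pseudo-inverse of the Jacobian.

```python
import math

L1, L2 = 0.4, 0.3  # link lengths in metres (hypothetical 2-link planar arm)


def forward(q):
    """End-effector position (x, y) for joint angles q = (q1, q2)."""
    q1, q2 = q
    return (L1 * math.cos(q1) + L2 * math.cos(q1 + q2),
            L1 * math.sin(q1) + L2 * math.sin(q1 + q2))


def jacobian(q):
    """2x2 Jacobian of forward() with respect to (q1, q2)."""
    q1, q2 = q
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return ((-L1 * s1 - L2 * s12, -L2 * s12),
            (L1 * c1 + L2 * c12, L2 * c12))


def clik_step(q, target, gain=5.0, dt=0.01, damping=0.01):
    """One step: q <- q + dt * J^T (J J^T + damping^2 I)^-1 * gain * error."""
    x, y = forward(q)
    ex, ey = gain * (target[0] - x), gain * (target[1] - y)
    (a, b), (c, d) = jacobian(q)
    # A = J J^T + damping^2 I, a symmetric 2x2 matrix
    a11 = a * a + b * b + damping ** 2
    a12 = a * c + b * d
    a22 = c * c + d * d + damping ** 2
    det = a11 * a22 - a12 * a12
    # v = A^-1 * (gain * error)
    vx = (a22 * ex - a12 * ey) / det
    vy = (-a12 * ex + a11 * ey) / det
    # Joint velocity dq = J^T v, integrated with a forward Euler step
    dq1 = a * vx + c * vy
    dq2 = b * vx + d * vy
    return (q[0] + dt * dq1, q[1] + dt * dq2)


def reach(q, target, steps=2000):
    """Iterate the closed-loop update from q toward a fixed target."""
    for _ in range(steps):
        q = clik_step(q, target)
    return q
```

A call such as `reach((0.3, 0.8), (0.5, 0.2))` drives the toy arm toward the target. Because the error is recomputed from the current joint configuration at every step, numerical drift is corrected rather than accumulated, which is the property that gives the closed-loop scheme its name.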

Team members: prof. Filippo Arrichiello, Paolo Di Lillo (PhD student), Daniele Di Vito (PhD student), prof. Gianluca Antonelli, prof. Stefano Chiaverini.

Click here for more information on the Robotics Research Group of the DIEI.

tags: Actuation, Algorithm Controls, Announcement, BCI, brain-computer interface, c-Research-Innovation, Research, Sensing, software