Robohub.org

313

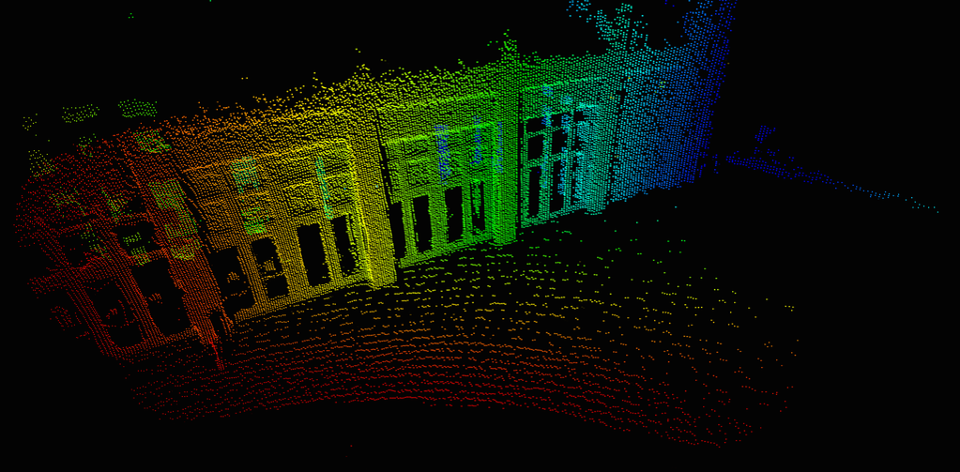

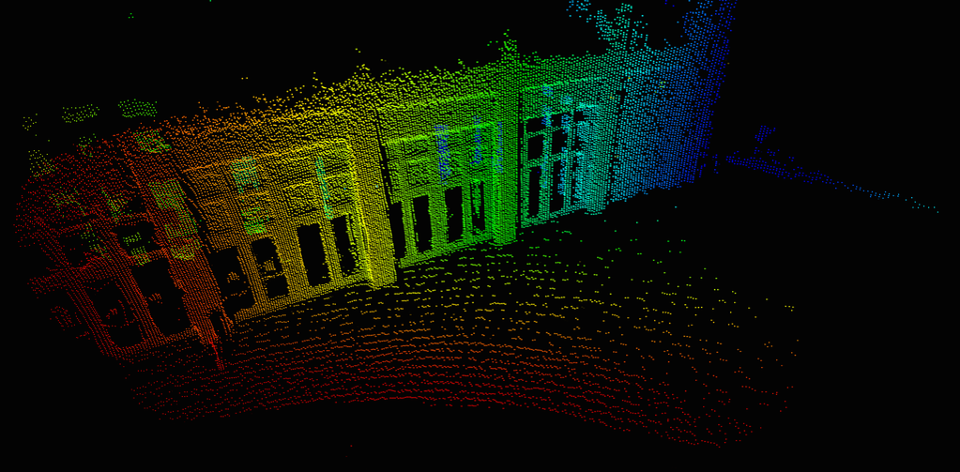

Solid State Lidar – the 3D Camera with Erin Bishop

In this episode, Abate interviews Erin Bishop from Sense Photonics about the technology in their “Solid State” LiDAR sensors that allows them to detect objects more accurately and over a larger field of view than traditional scanning LiDAR. Erin dives into the technical details of solid-state LiDAR and discusses the applications and industries the technology serves.

Erin Bishop

Erin operates at the intersection of product management, project engineering, customer development, and product-market fit within 3D camera company Sense Photonics. Over the past several years, she has worked to build market appetite for telepresence robots, indoor mobile robots, and robotic picking in warehouses. Erin has made appearances at CES, UX Week, RoboBusiness, HardwareCon, and Mobile Future Forward.

Erin creates partnerships that result in large-scale deployment of the company’s revolutionary solid-state FLASH architecture. Prior to joining Sense, she held product manager, marketing, and research roles with several robotics companies, including Industrial Perception, Inc. – which was acquired by Google in 2013 – Adept Technology, iRobot, and DEKA. Erin earned her Master’s in Mechanical Engineering from UMass Lowell, where she performed extensive research in Human-Robot Interaction.

Links

- Video of Solid State Lidar in action

- Download mp3 (23.2 MB)

- Subscribe to Robots using iTunes

- Subscribe to Robots using RSS

- Support us on Patreon

tags: Industrial Automation, podcast, robot, Sensing