Robohub.org

334

Intel RealSense Enabling Computer Vision and Machine Learning At The Edge with Joel Hagberg

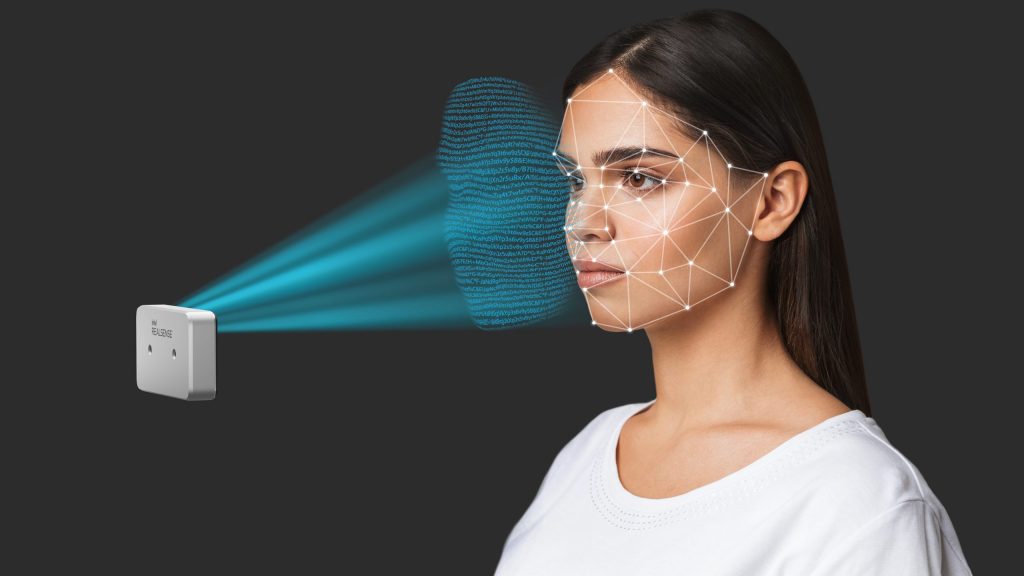

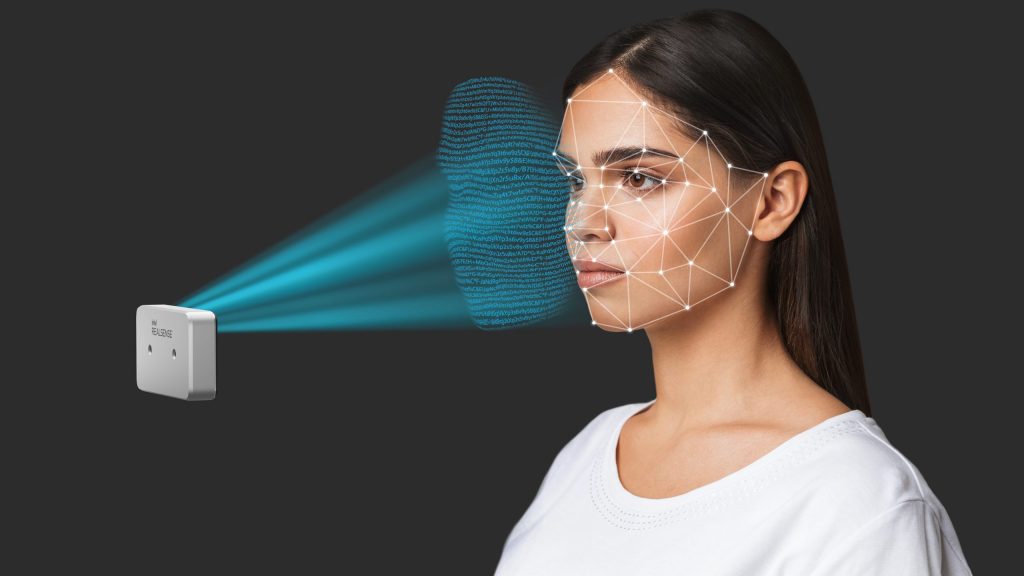

Intel RealSense ID was designed with privacy as a top priority. Purpose-built for user protection, Intel RealSense ID processes all facial images locally and encrypts all user data. (Credit: Intel Corporation)

Intel RealSense is known in the robotics community for its plug-and-play stereo cameras. These cameras make gathering 3D depth data a seamless process, and their easy integration with ROS simplifies software development for your robots. Joel Hagberg of the RealSense team talks about how they built this product, which lets roboticists perform computer vision and machine learning at the edge.

Joel Hagberg

Joel Hagberg leads the Intel® RealSense™ Marketing, Product Management, and Customer Support teams. He joined Intel in 2018 after a few years as an Executive Advisor working with startups in the IoT, AI, Flash Array, and SaaS markets. Before his Executive Advisor role, Joel spent two years as Vice President of Product Line Management at Seagate Technology, with responsibility for its $13B product portfolio. He joined Seagate from Toshiba, where he spent four years as Vice President of Marketing and Product Management for Toshiba’s HDD and SSD product lines. Joel joined Toshiba through the acquisition of Fujitsu’s storage business, where he had spent 12 years as Vice President of Marketing, Product Management, and Business Development. His business development efforts at Fujitsu focused on building emerging-market business units in Security, Biometric Sensors, H.264 HD Video Encoders, 10GbE chips, and Digital Signage. Joel earned his bachelor’s degree in Electrical Engineering and Math from the University of Maryland. He also graduated from Fujitsu’s Global Knowledge Institute Executive MBA leadership program.

Links

- Download mp3 (14.6 MB)


tags: Business, podcast, Robotics technology, Sensing